Motivation Custom “row-by-row” processing logic (sometimes called sequential User-Defined Functions) is prevalent in ETL workflows. The sequential nature of UDFs makes parallelization on GPUs tricky. This blog post covers how to implement the same UDF logic using RAPIDS to parallelize computation on GPUs and unlock 100x speedups. Introduction Typically, sequential UDFs revolve around records with … Continued

Motivation Custom “row-by-row” processing logic (sometimes called sequential User-Defined Functions) is prevalent in ETL workflows. The sequential nature of UDFs makes parallelization on GPUs tricky. This blog post covers how to implement the same UDF logic using RAPIDS to parallelize computation on GPUs and unlock 100x speedups. Introduction Typically, sequential UDFs revolve around records with … Continued

Motivation

Custom “row-by-row” processing logic (sometimes called sequential User-Defined Functions) is prevalent in ETL workflows. The sequential nature of UDFs makes parallelization on GPUs tricky. This blog post covers how to implement the same UDF logic using RAPIDS to parallelize computation on GPUs and unlock 100x speedups.

Introduction

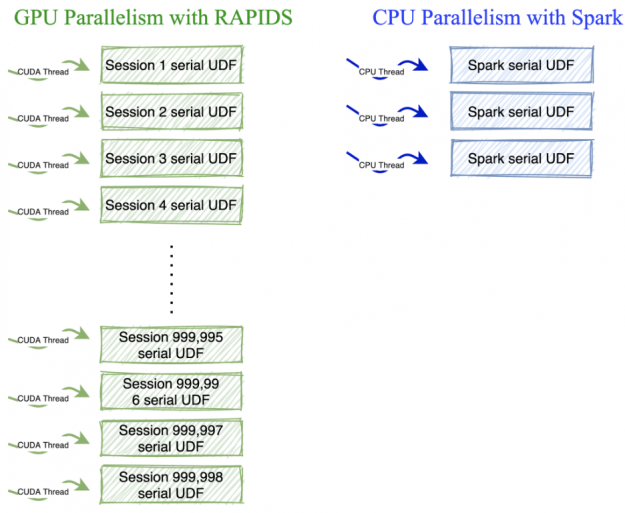

Typically, sequential UDFs revolve around records with the same grouping key. For example, analyzing a user’s clicks per session on a website where a session ends after 30 minutes of inactivity. In Apache Spark, you’d first cluster on user key, and then segregate user sessions using a UDF like in the example below. This UDF uses multiple CPU threads to run sequential functions in parallel.

Running on the GPU with Numba

Dask-CUDA clusters use a single process and thread per GPU, so running a single sequential function won’t work for this model. To run serial functions on the hundreds of CUDA cores available in GPUs, you can take advantage of the following properties:

- The function can be applied independently across keys.

- The grouping key has high cardinality (there are a high number of unique keys)

Let’s look at how you can use Numba to transform our sessionization UDF for use on GPUs. Let’s break the algorithm into two parts.

Part 1. Session change flag

With this function, we create session boundaries. This function is embarrassingly parallel so that we can launch threads for each row of the dataframe.

Part 2. Serial logic on a session-level

For session analysis, we have to set a unique session flag across all the rows that belong to that session for each user. This function is serial at the session level, but as we have many sessions (approx 18 M) in each dataframe, we can take advantage of GPU parallelism by launching threads for each session.

Using these functions with dask-cuDF Dataframe

We invoke the above functions on each partition of a dask-cudf dataframe using a map_partition call. The main logic of the code above is still similar to pure python while providing a lot of speedups. A similar serial function in pure python takes about `17.7s` vs. `14.3 ms` on GPUs. That’s a whopping 100x speedup.

Conclusion

When working with serial user-defined functions that operate on many small groups, transforming the UDF using numba, as shown above, will maximize performance on GPUs. Thus, with the power of numba, Dask, and RAPIDS, you can now supercharge the map-reduce UDF ETL jobs to run at the speed of light.

Want to start using Dask and RAPIDS to analyze big data at the speed of light? Check out the RAPIDS Getting Started webpage, with links to help you download pre-built Docker containers or install directly via Conda.