NVIDIA announces new SDKs available in the NGC catalog, a hub of GPU-optimized deep learning, machine learning, and HPC applications. With high-performance software containers, pretrained models, industry-specific SDKs, and Jupyter notebooks, AI developers and data scientists can simplify their end-to-end workflows.

This post provides an overview of new and updated services in the NGC catalog, along with the latest advanced SDKs to help you streamline workflows and build solutions faster.

Simplifying access to large language models

Recent advances in large language models (LLMs) have fueled state-of-the-art performance for NLP applications, such as virtual scribes in healthcare, interactive virtual assistants, and many more.

NVIDIA NeMo Megatron

NVIDIA NeMo Megatron, an end-to-end framework for training and deploying LLMs with up to trillions of parameters, is now available in open beta from the NGC catalog. It consists of an end-to-end workflow for automated distributed data processing; training large-scale customized GPT-3, T5, and multilingual T5 (mT5) models; and deploying models for inference at scale.

NeMo Megatron can be deployed on several cloud platforms, including Microsoft Azure, Amazon Web Services, and Oracle Cloud Infrastructure. It can also be accessed through NVIDIA DGX SuperPODs and NVIDIA DGX Foundry.

Request NeMo Megatron in open beta.

NVIDIA NeMo LLM

The NVIDIA NeMo LLM service provides the fastest path to customize foundation LLMs and deploy them at scale, using the NVIDIA-managed cloud API or through private and public clouds.

NVIDIA and community-built foundation models can be customized using prompt learning capabilities, which are compute-efficient techniques that embed context in user queries to enable greater accuracy in specific use cases. These techniques require just a few hundred samples to achieve high accuracy, and they support applications ranging from text summarization and paraphrasing to story generation.
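To make the idea of embedding context in a query concrete, here is a minimal sketch of one common form of prompt learning, often called prompt tuning: a few learnable "virtual token" vectors are prepended to the input while the model itself stays frozen. Everything in it is an assumption made for illustration: the toy embedding table, the linear head, and the names `predict` and `tune_prompt` are hypothetical and are not part of the NeMo LLM service API.

```python
import math

# Frozen toy "foundation model": the embedding table and linear head never change.
EMBED = {"great": [1.0, 0.0], "awful": [-1.0, 0.0], "movie": [0.0, 1.0]}
HEAD = [1.0, -3.0]
N_VIRTUAL = 2  # number of learnable virtual prompt vectors (hypothetical choice)

def predict(prompt, tokens):
    """Mean-pool the virtual prompt vectors plus token embeddings, then score."""
    rows = prompt + [EMBED[t] for t in tokens]
    pooled = [sum(col) / len(rows) for col in zip(*rows)]
    logit = sum(w * x for w, x in zip(HEAD, pooled))
    return 1.0 / (1.0 + math.exp(-logit))

def tune_prompt(samples, steps=200, lr=0.5):
    """Full-batch gradient descent on the prompt vectors only; the model stays frozen."""
    prompt = [[0.0, 0.0] for _ in range(N_VIRTUAL)]
    for _ in range(steps):
        grad = [0.0, 0.0]
        for tokens, label in samples:
            n_rows = N_VIRTUAL + len(tokens)
            # d(cross-entropy)/d(logit) = prob - label
            # d(logit)/d(prompt[i][j]) = HEAD[j] / n_rows
            err = predict(prompt, tokens) - label
            for j in range(2):
                grad[j] += err * HEAD[j] / n_rows
        for vec in prompt:
            for j in range(2):
                vec[j] -= lr * grad[j]
    return prompt

# Two labeled samples (1 = positive, 0 = negative), made up for illustration.
samples = [(["great", "movie"], 1), (["awful", "movie"], 0)]
zero_prompt = [[0.0, 0.0] for _ in range(N_VIRTUAL)]
tuned_prompt = tune_prompt(samples)

for tokens, label in samples:
    print(tokens, label, round(predict(zero_prompt, tokens), 3),
          round(predict(tuned_prompt, tokens), 3))
```

With the untuned prompt, the frozen model scores both sentences below 0.5. After tuning, only the prompt vectors have changed, yet the positive sentence is scored above 0.5 and the negative one below it. A real LLM is far larger and nonlinear, but the division of labor is the same: a small set of prompt parameters is trained while the foundation model's weights are left untouched.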

This service also provides access to the Megatron 530B model, one of the world’s largest LLMs with 530 billion parameters. Additional model checkpoints include 3B T5 and NVIDIA-trained 5B and 20B GPT-3.

Apply now for NeMo LLM early access.

NVIDIA BioNeMo

The NVIDIA BioNeMo service is a unified cloud environment for end-to-end, AI-based drug discovery workflows, without the need for IT infrastructure.

Today, the BioNeMo service includes two protein models. Models for DNA, RNA, and generative chemistry, along with other biology and chemistry models, are coming soon.

ESM-1 is a protein LLM trained on 52 million protein sequences. It can help drug discovery researchers understand protein properties, such as cellular location or solubility, and secondary structures, such as alpha helix or beta sheet.

The second protein model in the BioNeMo service is OpenFold, a PyTorch-based NVIDIA-optimized reproduction of AlphaFold2 that quickly predicts the 3D structure of a protein from its primary amino acid sequence.

With the BioNeMo service, chemists, biologists, and AI drug discovery researchers can generate novel therapeutics and understand the properties and function of proteins and DNA. Ultimately, they can combine many AI models in a connected, large-scale, in silico AI workflow that requires supercomputing scale over multiple GPUs.

BioNeMo will enable end-to-end modular drug discovery to accelerate research and better understand proteins, DNA, and chemicals.

Apply now for BioNeMo early access.

AI frameworks for 3D and digital twin workflows

A digital twin is a continuously updated virtual representation of a real-world physical asset or system: a true-to-reality simulation of its physics and materials. Digital twins aren’t limited to inanimate objects and people. They can also replicate a process, such as a fulfillment center workflow, to test human-robot interactions before certain robot functions are activated in live environments. The range of possible applications is broad.

NVIDIA Omniverse Replicator

NVIDIA Omniverse Replicator is a highly extensible framework built on the NVIDIA Omniverse platform that enables physically accurate 3D synthetic data generation to accelerate the training and accuracy of perception networks.

Technical artists, software developers, and ML engineers can now easily build custom, physically accurate, synthetic data generation pipelines in the cloud or on-premises with the Omniverse Replicator container available from the NGC catalog.

Download the Omniverse Replicator container for self-service cloud deployment.

NVIDIA Modulus

NVIDIA Modulus is a neural network AI framework that enables you to create customizable training pipelines for digital twins, climate models, and physics-based modeling and simulation.

Modulus is integrated with NVIDIA Omniverse so that you can visualize the outputs of Modulus-trained models. This interface enables interactive exploration of design variables and parameters for inferring new system behavior and visualizing it in near real time.

The latest release (v22.09) includes key enhancements that increase composition flexibility for neural operator architectures, features that improve training convergence and performance, and, most importantly, significant improvements to the user experience and documentation.

Download the latest version of Modulus.

Deep learning software

The most popular deep learning frameworks for training and inference are updated monthly. The latest version (v22.09) is available to pull from the NGC catalog.

New pretrained models

We are constantly adding state-of-the-art models for a variety of speech and vision tasks. The following pretrained models are new on NGC:

- SLU Conformer-Transformer-Large SLURP: Performs joint intent classification and slot filling, directly from audio input.

- Riva ASR Korean LM: An automatic speech recognition (ASR) engine that can optionally condition the transcript output on n-gram language models.

- LangID Ambernet: Performs spoken language identification (LangID or LID) and serves as the first step for ASR.

- STT En Squeezeformer CTC Small Librispeech: A model for English ASR that is trained with NeMo on the LibriSpeech dataset.

- TTS De FastPitch HiFi-GAN: This collection contains two models: FastPitch, which was trained on over 23 hours of German speech from one speaker, and HiFi-GAN, which was trained on mel spectrograms produced by the FastPitch model.
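The Riva ASR Korean LM entry above mentions conditioning transcript output on n-gram language models. The sketch below illustrates the general idea with a tiny add-one smoothed bigram model that rescores candidate transcripts. It is an assumption-laden toy, not the Riva API: the function names, the corpus, and the acoustic scores are all hypothetical, and production systems typically apply the language model inside the decoder rather than rescoring a fixed candidate list.

```python
import math
from collections import Counter

def train_bigram(corpus):
    """Count context words and bigrams over whitespace-tokenized sentences."""
    contexts, bigrams = Counter(), Counter()
    for sentence in corpus:
        words = ["<s>"] + sentence.split() + ["</s>"]
        contexts.update(words[:-1])
        bigrams.update(zip(words[:-1], words[1:]))
    vocab = {w for s in corpus for w in s.split()} | {"</s>"}
    return contexts, bigrams, len(vocab)

def lm_logprob(sentence, model):
    """Add-one smoothed bigram log-probability of a sentence."""
    contexts, bigrams, vocab_size = model
    words = ["<s>"] + sentence.split() + ["</s>"]
    return sum(
        math.log((bigrams[(a, b)] + 1) / (contexts[a] + vocab_size))
        for a, b in zip(words[:-1], words[1:])
    )

def rescore(hypotheses, model, lm_weight=0.5):
    """Return the transcript maximizing acoustic score + lm_weight * LM score."""
    best = max(hypotheses, key=lambda h: h[1] + lm_weight * lm_logprob(h[0], model))
    return best[0]

# Tiny in-domain text corpus, made up for illustration.
corpus = ["recognize speech", "recognize speech quickly", "a nice beach"]
model = train_bigram(corpus)

# (transcript, acoustic log-score) pairs; the scores are invented values.
hypotheses = [("wreck a nice beach", -4.0), ("recognize speech", -4.2)]

print(rescore(hypotheses, model, lm_weight=0.0))  # acoustic score only
print(rescore(hypotheses, model))                 # acoustic score plus bigram LM
```

With `lm_weight=0.0`, the acoustically preferred "wreck a nice beach" wins. With the language model enabled, the transcript that better matches the text corpus, "recognize speech", is selected instead.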

Explore more pretrained models for common AI tasks on the NGC Models page.

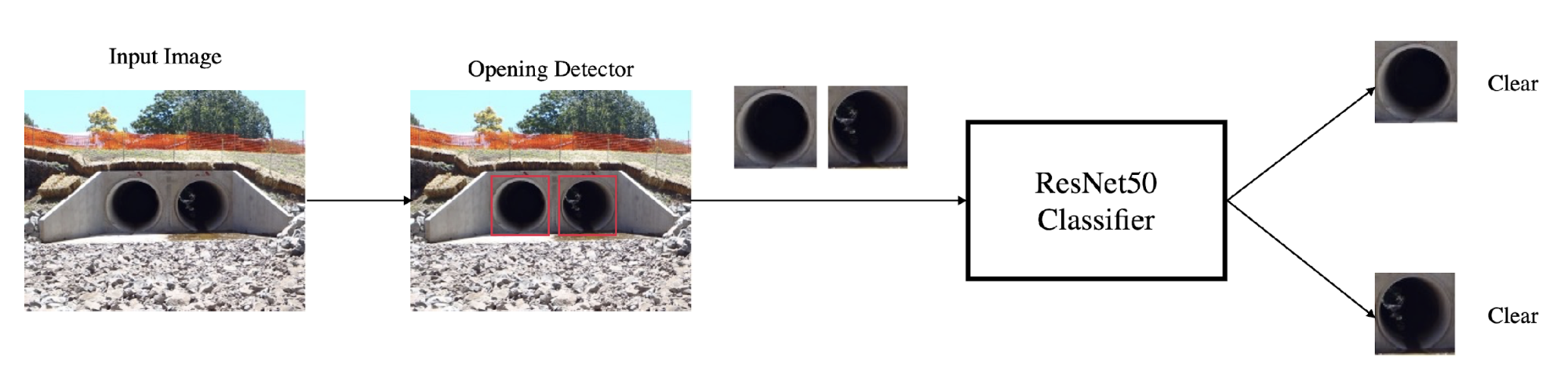

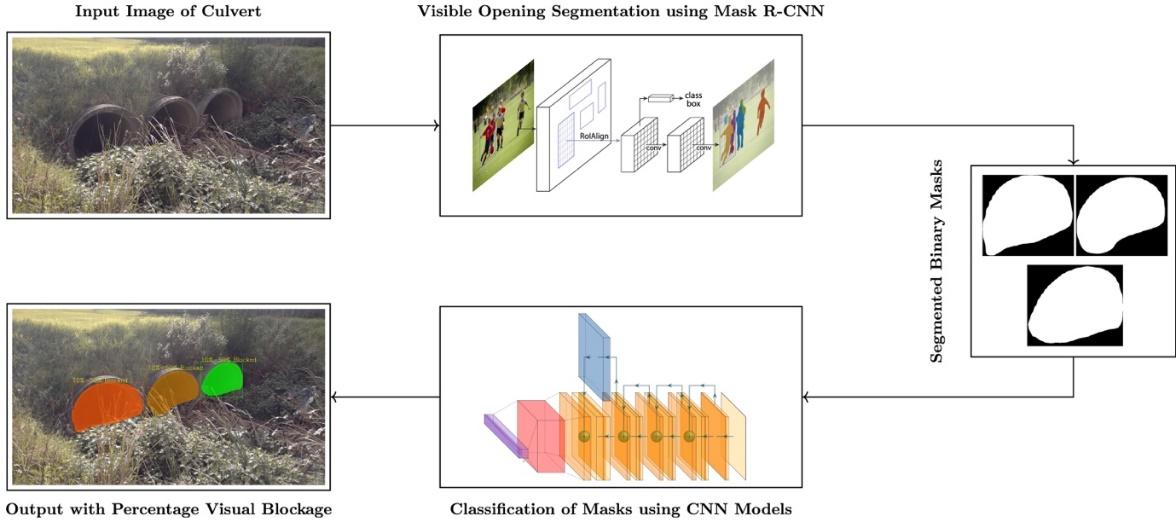

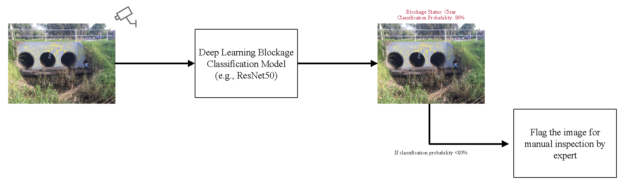