We live in a world of great natural beauty — of majestic mountains, dramatic seascapes, and serene forests. Imagine seeing this beauty as a bird does, flying past richly detailed, three-dimensional landscapes. Can computers learn to synthesize this kind of visual experience? Such a capability would allow for new kinds of content for games and virtual reality experiences: for instance, relaxing within an immersive flythrough of an infinite nature scene. But existing methods that synthesize new views from images tend to allow for only limited camera motion.

In a research effort we call Infinite Nature, we show that computers can learn to generate such rich 3D experiences simply by viewing nature videos and photographs. Our latest work on this theme, InfiniteNature-Zero (presented at ECCV 2022) can produce high-resolution, high-quality flythroughs starting from a single seed image, using a system trained only on still photographs, a breakthrough capability not seen before. We call the underlying research problem perpetual view generation: given a single input view of a scene, how can we synthesize a photorealistic set of output views corresponding to an arbitrarily long, user-controlled 3D path through that scene? Perpetual view generation is very challenging because the system must generate new content on the other side of large landmarks (e.g., mountains), and render that new content with high realism and in high resolution.

| Example flythrough generated with InfiniteNature-Zero. It takes a single input image of a natural scene and synthesizes a long camera path flying into that scene, generating new scene content as it goes. |

Background: Learning 3D Flythroughs from Videos

To establish the basics of how such a system could work, we’ll describe our first version, “Infinite Nature: Perpetual View Generation of Natural Scenes from a Single Image” (presented at ICCV 2021). In that work we explored a “learn from video” approach, where we collected a set of online videos captured from drones flying along coastlines, with the idea that we could learn to synthesize new flythroughs that resemble these real videos. This set of online videos is called the Aerial Coastline Imagery Dataset (ACID). In order to learn how to synthesize scenes that respond dynamically to any desired 3D camera path, however, we couldn’t simply treat these videos as raw collections of pixels; we also had to compute their underlying 3D geometry, including the camera position at each frame.

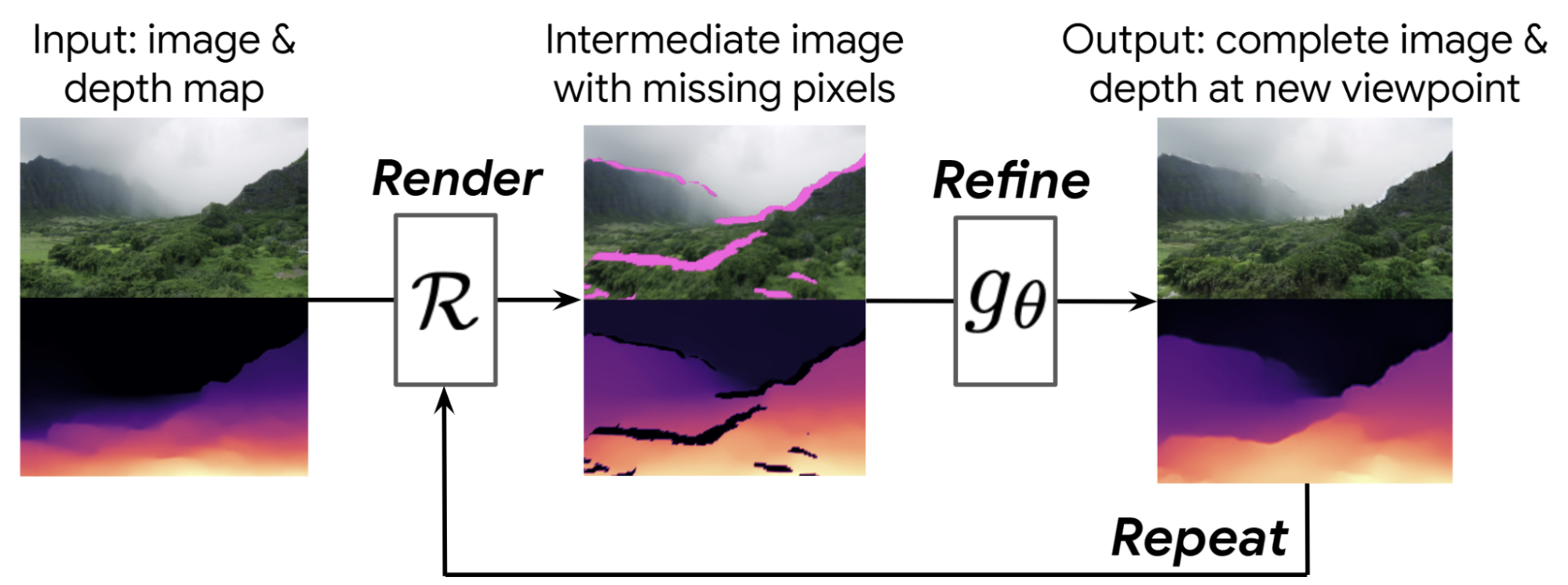

The basic idea is that we learn to generate flythroughs step-by-step. Given a starting view, like the first image in the figure below, we first compute a depth map using single-image depth prediction methods. We then use that depth map to render the image forward to a new camera viewpoint, shown in the middle, resulting in a new image and depth map from that new viewpoint.

However, this intermediate image has some problems — it has holes where we can see behind objects into regions that weren’t visible in the starting image. It is also blurry, because we are now closer to objects, but are stretching the pixels from the previous frame to render these now-larger objects.

To handle these problems, we learn a neural image refinement network that takes this low-quality intermediate image and outputs a complete, high-quality image and corresponding depth map. These steps can then be repeated, with this synthesized image as the new starting point. Because we refine both the image and the depth map, this process can be iterated as many times as desired — the system automatically learns to generate new scenery, like mountains, islands, and oceans, as the camera moves further into the scene.

We train this render-refine-repeat synthesis approach using the ACID dataset. In particular, we sample a video from the dataset and then a frame from that video. We then use this method to render several new views moving into the scene along the same camera trajectory as the ground truth video, as shown in the figure below, and compare these rendered frames to the corresponding ground truth video frames to derive a training signal. We also include an adversarial setup that tries to distinguish synthesized frames from real images, encouraging the generated imagery to appear more realistic.

The resulting system can generate compelling flythroughs, as featured on the project webpage, along with a “flight simulator” Colab demo. Unlike prior methods on video synthesis, this method allows the user to interactively control the camera and can generate much longer camera paths.

InfiniteNature-Zero: Learning Flythroughs from Still Photos

One problem with this first approach is that video is difficult to work with as training data. High-quality video with the right kind of camera motion is challenging to find, and the aesthetic quality of an individual video frame generally cannot compare to that of an intentionally captured nature photograph. Therefore, in “InfiniteNature-Zero: Learning Perpetual View Generation of Natural Scenes from Single Images”, we build on the render-refine-repeat strategy above, but devise a way to learn perpetual view synthesis from collections of still photos — no videos needed. We call this method InfiniteNature-Zero because it learns from “zero” videos. At first, this might seem like an impossible task — how can we train a model to generate video flythroughs of scenes when all it’s ever seen are isolated photos?

To solve this problem, we had the key insight that if we take an image and render a camera path that forms a cycle — that is, where the path loops back such that the last image is from the same viewpoint as the first — then we know that the last synthesized image along this path should be the same as the input image. Such cycle consistency provides a training constraint that helps the model learn to fill in missing regions and increase image resolution during each step of view generation.

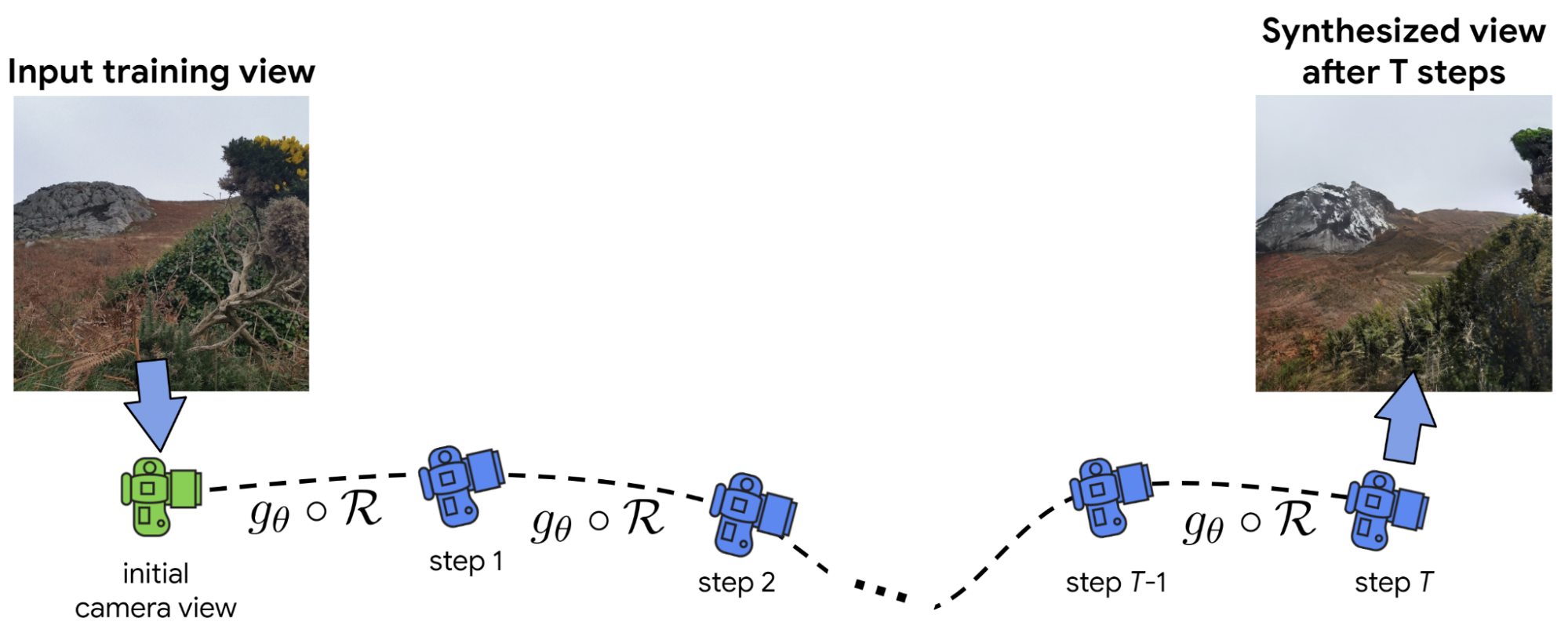

However, training with these camera cycles is insufficient for generating long and stable view sequences, so as in our original work, we include an adversarial strategy that considers long, non-cyclic camera paths, like the one shown in the figure above. In particular, if we render T frames from a starting frame, we optimize our render-refine-repeat model such that a discriminator network can’t tell which was the starting frame and which was the final synthesized frame. Finally, we add a component trained to generate high-quality sky regions to increase the perceived realism of the results.

With these insights, we trained InfiniteNature-Zero on collections of landscape photos, which are available in large quantities online. Several resulting videos are shown below — these demonstrate beautiful, diverse natural scenery that can be explored along arbitrarily long camera paths. Compared to our prior work — and to prior video synthesis methods — these results exhibit significant improvements in quality and diversity of content (details available in the paper).

| Several nature flythroughs generated by InfiniteNature-Zero from single starting photos. |

Conclusion

There are a number of exciting future directions for this work. For instance, our methods currently synthesize scene content based only on the previous frame and its depth map; there is no persistent underlying 3D representation. Our work points towards future algorithms that can generate complete, photorealistic, and consistent 3D worlds.

Acknowledgements

Infinite Nature and InfiniteNature-Zero are the result of a collaboration between researchers at Google Research, UC Berkeley, and Cornell University. The key contributors to the work represented in this post include Angjoo Kanazawa, Andrew Liu, Richard Tucker, Zhengqi Li, Noah Snavely, Qianqian Wang, Varun Jampani, and Ameesh Makadia.