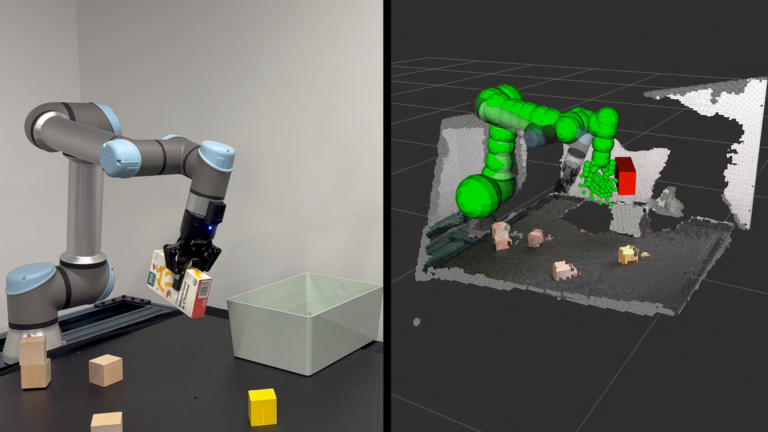

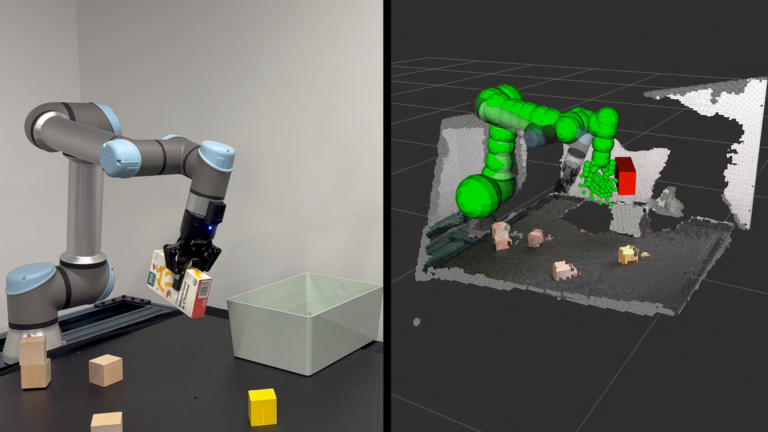

At CES 2025, NVIDIA announced key updates to NVIDIA Isaac, a platform of accelerated libraries, application frameworks, and AI models that accelerate the development of AI robots. NVIDIA Isaac streamlines the development of robotic systems from simulation to real-world deployment. In this post, we discuss all the new advances in NVIDIA Isaac: NVIDIA Isaac Sim is a reference…

Powered by the new GB10 Grace Blackwell Superchip, Project DIGITS can tackle large generative AI models of up to 200B parameters.

Spatial computing experiences are transforming how we interact with data, connecting the physical and digital worlds through technologies like extended reality…