NVIDIA announced today its acceleration of Microsoft’s new Phi-3 Mini open language model with NVIDIA TensorRT-LLM, an open-source library for optimizing large language model inference when running on NVIDIA GPUs from PC to cloud. Phi-3 Mini packs the capability of 10x larger models and is licensed for both research and broad commercial usage, advancing Phi-2

Read Article

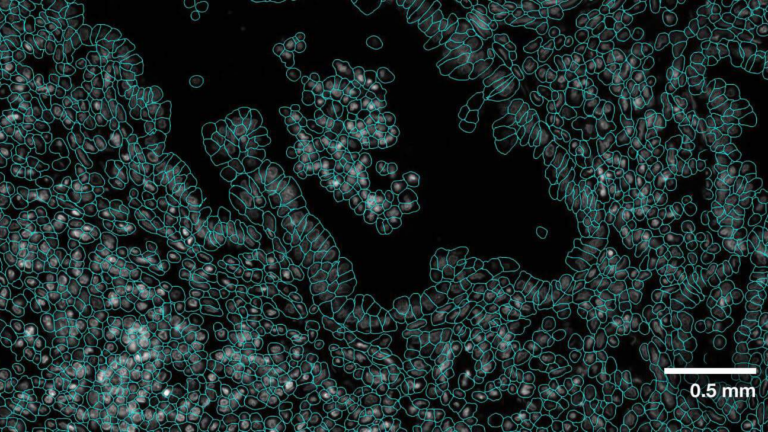

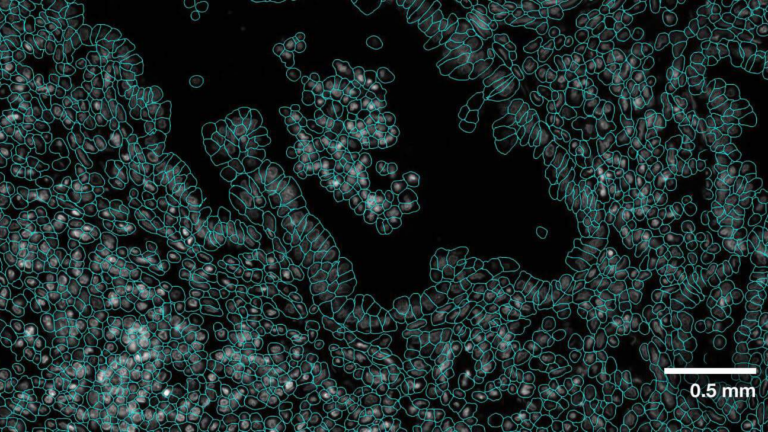

Genomics researchers use different sequencing techniques to better understand biological systems, including single-cell and spatial omics. Unlike single-cell,…

Genomics researchers use different sequencing techniques to better understand biological systems, including single-cell and spatial omics. Unlike single-cell,…

Genomics researchers use different sequencing techniques to better understand biological systems, including single-cell and spatial omics. Unlike single-cell, which looks at data at the cellular level, spatial omics considers where that data is located and takes into account the spatial context for analysis. As genomics researchers look to model biological systems across multiple omics at…

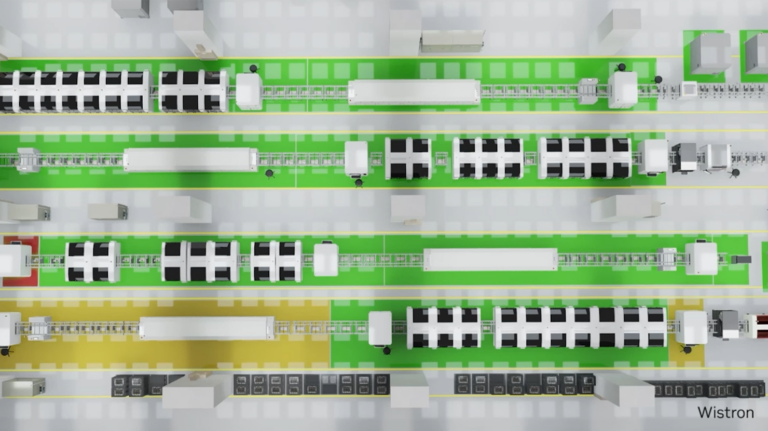

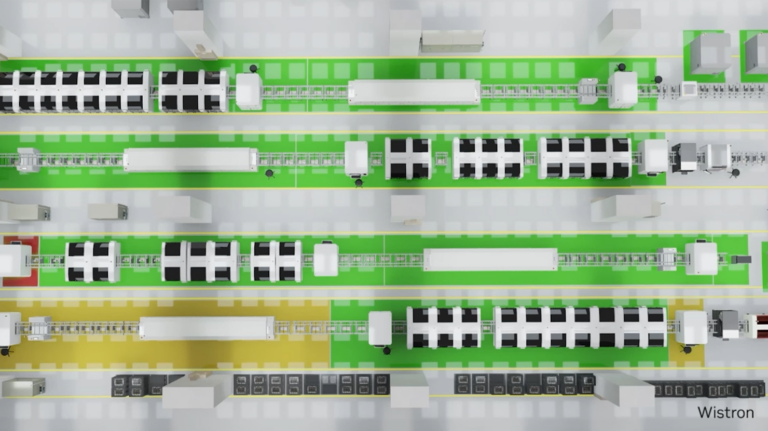

With NVIDIA AI, NVIDIA Omniverse, and the Universal Scene Description (OpenUSD) ecosystem, industrial developers are building virtual factory solutions that…

With NVIDIA AI, NVIDIA Omniverse, and the Universal Scene Description (OpenUSD) ecosystem, industrial developers are building virtual factory solutions that…

With NVIDIA AI, NVIDIA Omniverse, and the Universal Scene Description (OpenUSD) ecosystem, industrial developers are building virtual factory solutions that accelerate time-to-market, maximize production capacity, and cut costs through optimized processes for both brownfield and greenfield developments. The journey to building a full-scale digital twin of a factory is challenging.

This week’s model release features two new NVIDIA AI Foundation models, Mistral Large and Mixtral 8x22B, both developed by Mistral AI. These cutting-edge…

This week’s model release features two new NVIDIA AI Foundation models, Mistral Large and Mixtral 8x22B, both developed by Mistral AI. These cutting-edge…

This week’s model release features two new NVIDIA AI Foundation models, Mistral Large and Mixtral 8x22B, both developed by Mistral AI. These cutting-edge text-generation AI models are supported by NVIDIA NIM microservices, which provide prebuilt containers powered by NVIDIA inference software that enable developers to reduce deployment times from weeks to minutes. Both models are available through…

Just Released: NVIDIA Modulus v24.04

Modulus v24.04 delivers an optimized CorrDiff model and Earth2Studio for exploring weather AI models.

Modulus v24.04 delivers an optimized CorrDiff model and Earth2Studio for exploring weather AI models.

Modulus v24.04 delivers an optimized CorrDiff model and Earth2Studio for exploring weather AI models.

We’re excited to announce support for the Meta Llama 3 family of models in NVIDIA TensorRT-LLM, accelerating and optimizing your LLM inference performance. You…

We’re excited to announce support for the Meta Llama 3 family of models in NVIDIA TensorRT-LLM, accelerating and optimizing your LLM inference performance. You…

We’re excited to announce support for the Meta Llama 3 family of models in NVIDIA TensorRT-LLM, accelerating and optimizing your LLM inference performance. You can immediately try Llama 3 8B and Llama 3 70B—the first models in the series—through a browser user interface. Or, through API endpoints running on a fully accelerated NVIDIA stack from the NVIDIA API catalog, where Llama 3 is packaged as…

Whether they’re monitoring miniscule insects or delivering insights from satellites in space, NVIDIA-accelerated startups are making every day Earth Day. Sustainable Futures, an initiative within the NVIDIA Inception program for cutting-edge startups, is supporting 750+ companies globally focused on agriculture, carbon capture, clean energy, climate and weather, environmental analysis, green computing, sustainable infrastructure and waste

Read Article

Aerial CUDA-Accelerated radio access network (RAN) enables acceleration of telco workloads, delivering new levels of spectral efficiency (SE) on a cloud-native…

Aerial CUDA-Accelerated radio access network (RAN) enables acceleration of telco workloads, delivering new levels of spectral efficiency (SE) on a cloud-native…

Aerial CUDA-Accelerated radio access network (RAN) enables acceleration of telco workloads, delivering new levels of spectral efficiency (SE) on a cloud-native accelerated computing platform, using CPU, GPU, and DPU. NVIDIA MGX GH200 for Aerial is built on the state-of-the-art NVIDIA Grace Hopper Superchips and NVIDIA Bluefield-3 DPUs. It is designed to accelerate 5G wireless networks end-to…

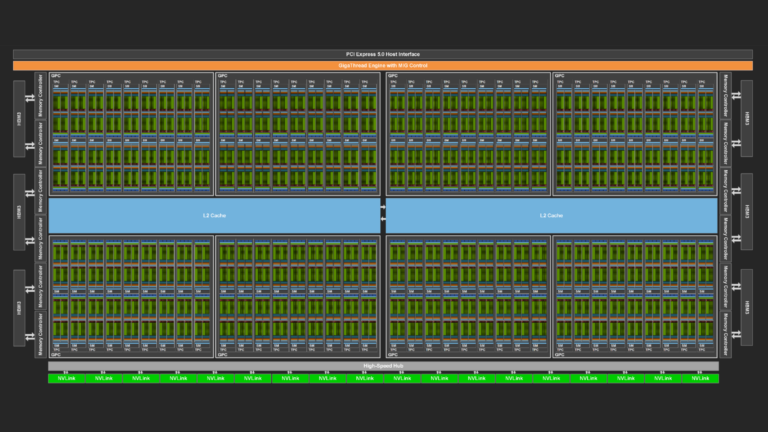

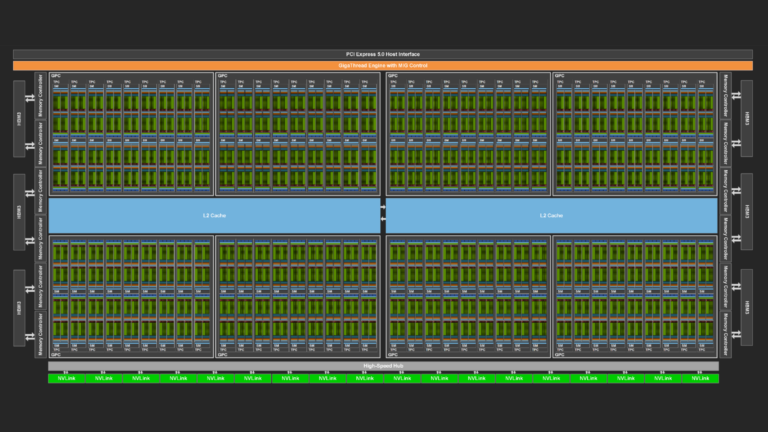

NVIDIA GPUs are becoming increasingly powerful with each new generation. This increase generally comes in two forms. Each streaming multi-processor (SM), the…

NVIDIA GPUs are becoming increasingly powerful with each new generation. This increase generally comes in two forms. Each streaming multi-processor (SM), the…

NVIDIA GPUs are becoming increasingly powerful with each new generation. This increase generally comes in two forms. Each streaming multi-processor (SM), the workhorse of the GPU, can execute instructions faster and faster, and the memory system can deliver data to the SMs at an ever-increasing pace. At the same time, the number of SMs also typically increases with each generation…

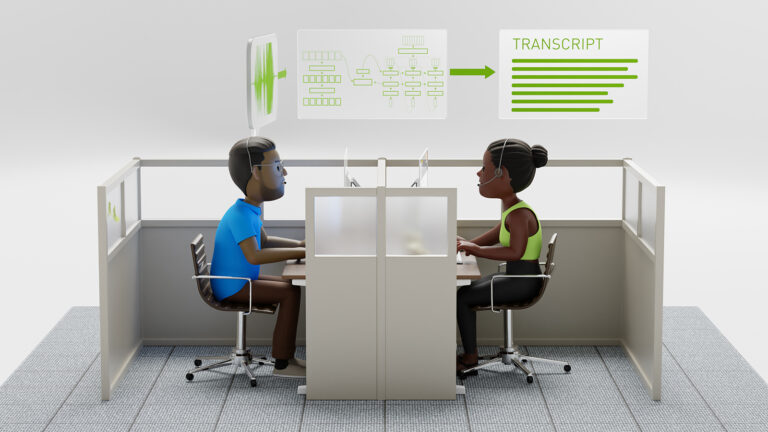

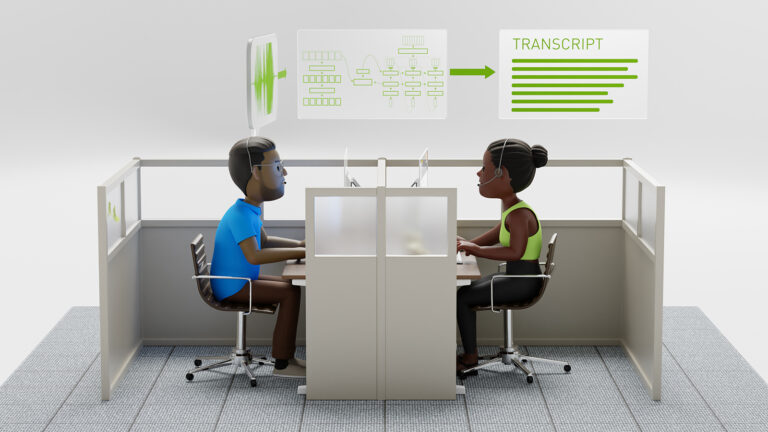

NVIDIA NeMo, an end-to-end platform for the development of multimodal generative AI models at scale anywhere—on any cloud and on-premises—released the…

NVIDIA NeMo, an end-to-end platform for the development of multimodal generative AI models at scale anywhere—on any cloud and on-premises—released the…

NVIDIA NeMo, an end-to-end platform for the development of multimodal generative AI models at scale anywhere—on any cloud and on-premises—released the Parakeet family of automatic speech recognition (ASR) models. These state-of-the-art ASR models, developed in collaboration with Suno.ai, transcribe spoken English with exceptional accuracy. This post details Parakeet ASR models that are…