The latest embedding model from NVIDIA—NV-Embed—set a new record for embedding accuracy with a score of 69.32 on the Massive Text Embedding Benchmark (MTEB), which covers 56 embedding tasks. Highly accurate and effective models like NV-Embed are key to transforming vast amounts of data into actionable insights. NVIDIA provides top-performing models through the NVIDIA API catalog.

Generative AI enables users to quickly generate new content based on a variety of inputs. Inputs and outputs to these models can include text, images, sounds,…

The latest state-of-the-art foundation large language models (LLMs) have billions of parameters and are pretrained on trillions of tokens of input text. They…

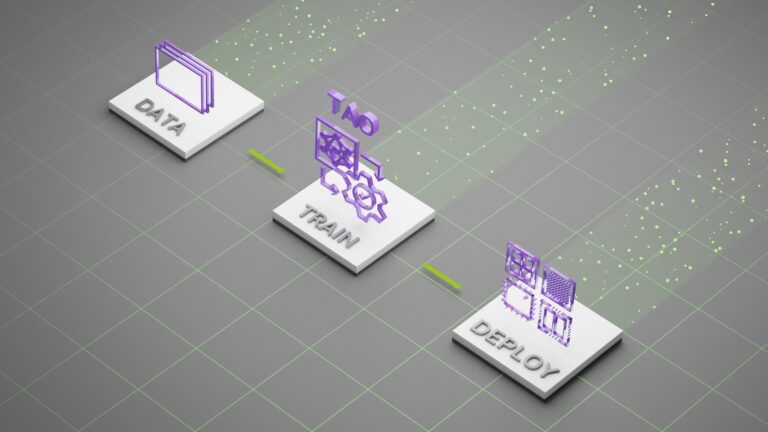

MediaTek is teaming with NVIDIA to integrate NVIDIA TAO training and pretrained models into its development workflow, bringing advanced AI and visual perception…

NVIDIA RTX Video is a collection of AI video enhancements that improve the visual quality of lower-quality video. RTX Video Super Resolution was announced…

NVIDIA Holoscan is the NVIDIA domain-agnostic multimodal real-time AI sensor processing platform that delivers the foundation for developers to build their…
