Since its introduction, the NVIDIA Hopper architecture has transformed the AI and high-performance computing (HPC) landscape, helping enterprises, researchers and developers tackle the world’s most complex challenges with higher performance and greater energy efficiency. During the Supercomputing 2024 conference, NVIDIA announced the availability of the NVIDIA H200 NVL PCIe GPU, the latest addition to…

Whether they’re looking at nanoscale electron behaviors or starry galaxies colliding millions of light years away, many scientists share a common challenge: they must comb through petabytes of data to extract insights that can advance their fields. With the NVIDIA cuPyNumeric accelerated computing library, researchers can now take their data-crunching Python code and effortlessly…

At SC24, NVIDIA today announced two new NVIDIA NIM microservices that can accelerate climate change modeling simulation results by 500x in NVIDIA Earth-2. Earth-2 is a digital twin platform for simulating and visualizing weather and climate conditions. The new NIM microservices offer climate technology application providers advanced generative AI-driven capabilities to assist in forecasting extreme…

NVIDIA kicked off SC24 in Atlanta with a wave of AI and supercomputing tools set to revolutionize industries like biopharma and climate science. The announcements, delivered by NVIDIA founder and CEO Jensen Huang and Vice President of Accelerated Computing Ian Buck, are rooted in the company’s deep history in transforming computing. “Supercomputers are among humanity’s…

SC24 — NVIDIA today announced an NVIDIA Omniverse™ Blueprint that enables industry software developers to help their computer-aided engineering (CAE) customers in aerospace, automotive, manufacturing, energy and other industries create digital twins with real-time interactivity.

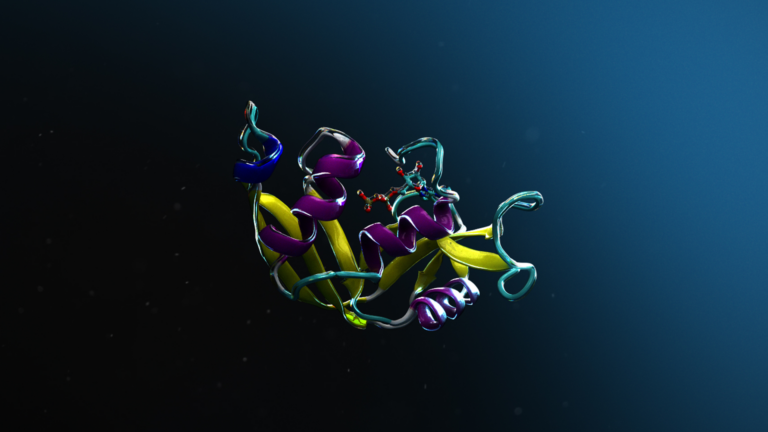

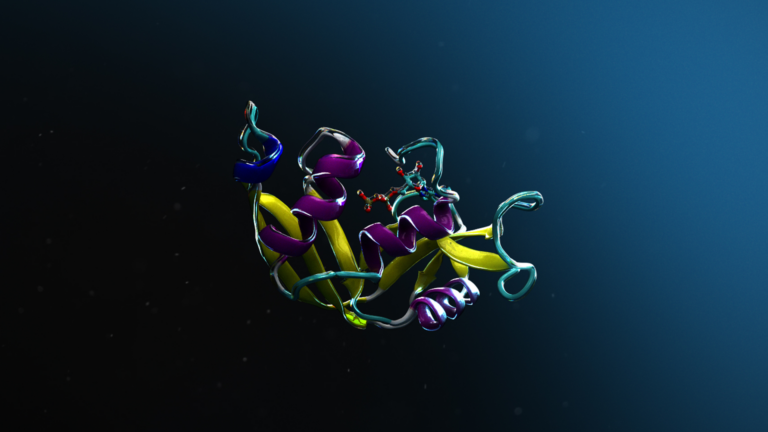

AI models for science are often trained to make predictions about the workings of nature, such as predicting the structure of a biomolecule or the properties of a new solid that can become the next battery material. These tasks require high precision and accuracy. What makes AI for science even more challenging is that highly accurate and precise scientific data is often scarce…

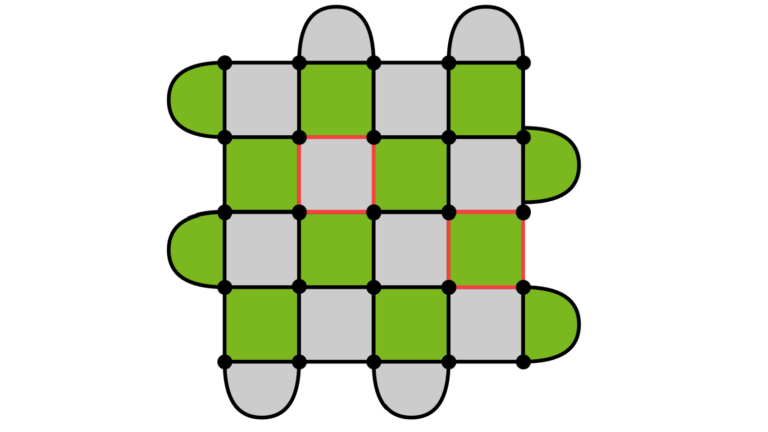

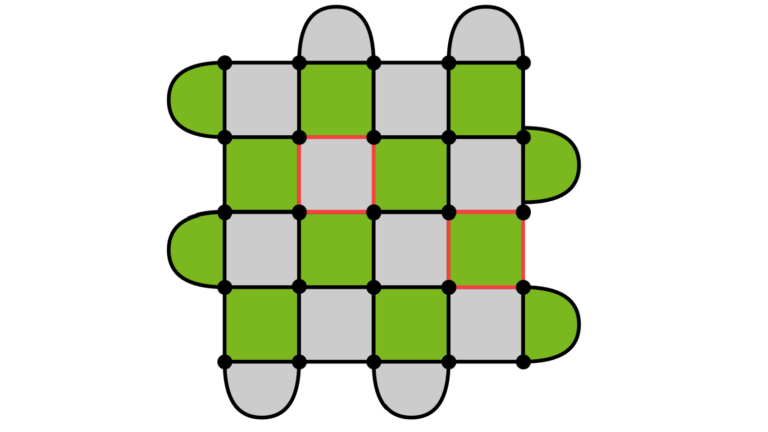

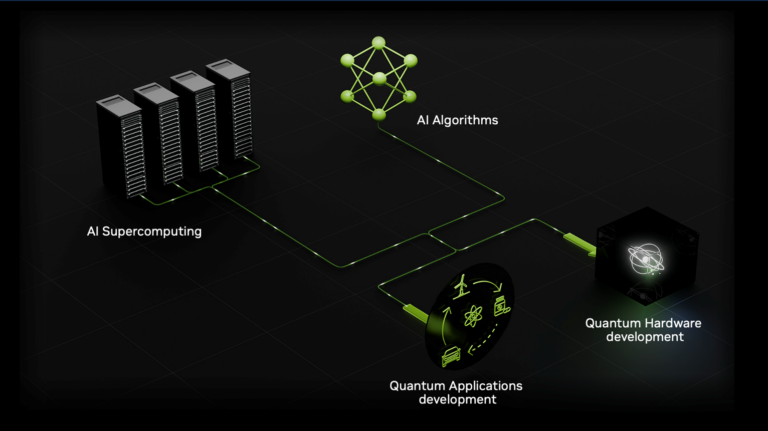

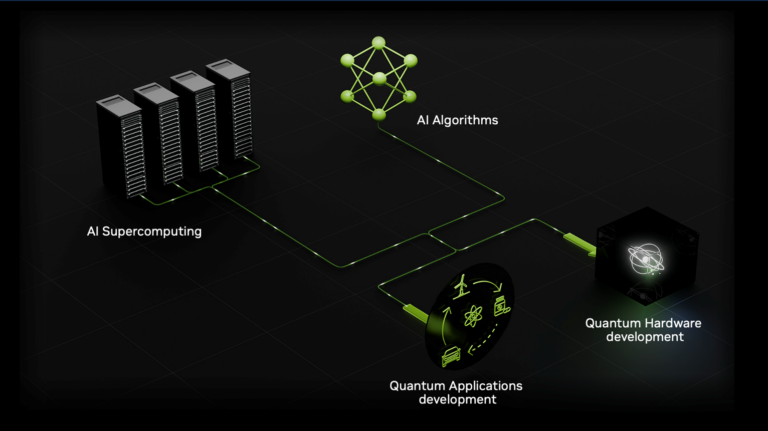

Accelerated quantum supercomputing combines the benefits of AI supercomputing with quantum processing units (QPUs) to develop solutions to some of the world’s hardest problems. Realizing such a device involves the seamless integration of one or more QPUs into a traditional CPU and GPU supercomputing architecture. An essential component of any accelerated quantum supercomputer is a programming…

NVIDIA’s vision of accelerated quantum supercomputers integrates quantum hardware and AI supercomputing to turn today’s quantum processors into tomorrow’s useful quantum computing devices. At Supercomputing 2024 (SC24), NVIDIA announced a wave of projects with partners that are driving the quantum ecosystem through those challenges standing between today’s technologies and this accelerated…

Everything that is manufactured is first simulated with advanced physics solvers. Real-time digital twins (RTDTs) are the cutting edge of computer-aided engineering (CAE) simulation, because they enable immediate feedback in the engineering design loop. They empower engineers to innovate freely and rapidly explore new designs by experiencing in real time the effects of any change in the simulation.

AI has proven to be a force multiplier, helping to create a future where scientists can design entirely new materials, while engineers seamlessly transform these designs into production plans—all without ever setting foot in a lab. As AI continues to redefine the boundaries of innovation, this once elusive vision is now more within reach. Recognizing this paradigm shift…