The advent of large language models (LLMs) marks a significant shift in how industries leverage AI to enhance operations and services. By automating routine tasks and streamlining processes, LLMs free up human resources for more strategic endeavors, thus improving overall efficiency and productivity. Training and customizing LLMs for high accuracy is fraught with challenges…

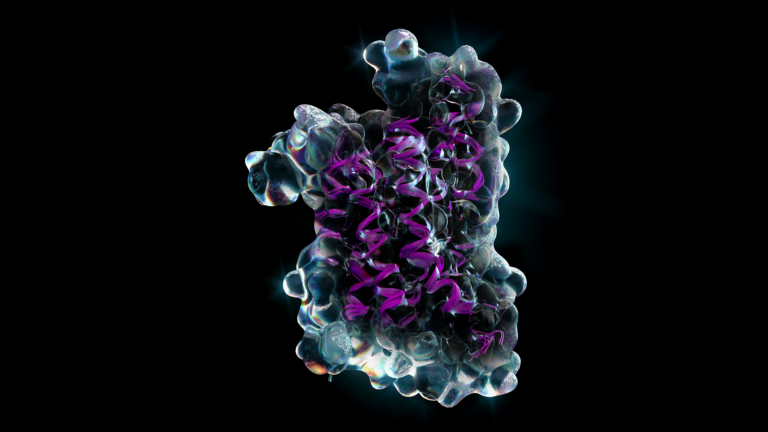

The ability to compare the sequences of multiple related proteins is a foundational task for many life science researchers. This is often done in the form of a…

As models grow larger and are trained on more data, they become more capable, making them more useful. To train these models quickly, more performance,…