Discover how to detect cyber threats using machine learning and NVIDIA Morpheus, an open-source AI framework.

3D content creators are clamoring for NVIDIA Instant NeRF, an inverse rendering tool that turns a set of static images into a realistic 3D scene. Since its debut earlier this year, tens of thousands of developers around the world have downloaded the source code and used it to render spectacular scenes, sharing eye-catching results on …

The post NVIDIA Instant NeRF Wins Best Paper at SIGGRAPH, Inspires Creative Wave Amid Tens of Thousands of Downloads appeared first on NVIDIA Blog.

The Acceleration Agency, a digital innovation and product design firm, is working on an active digital twin framework and toolkit called Project Gemini. Inspired by the United States space program of the same name, Project Gemini uses active sensor fabric data and a wide range of data from sources like Google Sheets and Customer Relationship Management (CRM) platforms to replicate real-world settings in the virtual world.

The active digital twin framework and toolkit will be fully connected to NVIDIA Omniverse, a scalable platform for design and collaboration, using Universal Scene Description (USD).

The project launched with a digital replication of The Acceleration Agency’s main office located in Austin, Texas. Instrumented with a dense sensor fabric for real-time and historical spatial computation, the digital twin of the office includes employees and employee information (job title, ID#, gender, and date of birth) provided by Salesforce. It also tracks inventory items on site and can display information such as quantity, date of last interaction, temperature, and orientation.

With NVIDIA Omniverse's real-time capabilities, true-to-reality physics from PhysX, and physically accurate RTX rendering, the team anticipates that the Gemini active digital twin can run complex simulations with an unprecedented level of visual and physical fidelity.

Using USD and Omniverse Nucleus, users of the Project Gemini digital twin platform will be able to collaboratively update content across a variety of tools in real time instead of waiting for new builds.

Multiple abstraction layers and a sensor fabric layer allow a variety of sensors, databases, CRMs and object integration tools to connect to Omniverse. The connection allows real-time updates to inventory objects and information like temperature, humidity, and location.
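The abstraction-layer idea can be illustrated with a small Python sketch. Everything here is hypothetical: `Reading`, `DataSource`, `TemperatureSensor`, `InventoryTable`, and `collect_updates` are illustrative names, not part of Project Gemini or any Omniverse API. The point is only that every source adapter exposes the same interface, so the twin can merge updates without knowing where they came from.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, List, Protocol


@dataclass
class Reading:
    """One source-agnostic reading attached to an object in the digital twin."""
    object_id: str
    field: str
    value: float


class DataSource(Protocol):
    """Hypothetical abstraction layer: each sensor, database, or CRM adapter
    exposes the same poll() method, so the twin never sees source details."""

    def poll(self) -> Iterable[Reading]: ...


class TemperatureSensor:
    """Hypothetical sensor adapter returning a fixed value for illustration."""

    def __init__(self, object_id: str, celsius: float) -> None:
        self.object_id = object_id
        self.celsius = celsius

    def poll(self) -> Iterator[Reading]:
        yield Reading(self.object_id, "temperature", self.celsius)


class InventoryTable:
    """Hypothetical database adapter mapping object ids to quantities."""

    def __init__(self, quantities: Dict[str, int]) -> None:
        self.quantities = quantities

    def poll(self) -> Iterator[Reading]:
        for object_id, qty in self.quantities.items():
            yield Reading(object_id, "quantity", float(qty))


def collect_updates(sources: List[DataSource]) -> Dict[str, Dict[str, float]]:
    """Merge readings from all sources into per-object field values.

    Later sources overwrite earlier ones when they report the same field.
    """
    state: Dict[str, Dict[str, float]] = {}
    for source in sources:
        for reading in source.poll():
            state.setdefault(reading.object_id, {})[reading.field] = reading.value
    return state
```

For example, `collect_updates([TemperatureSensor("pallet_7", 21.5), InventoryTable({"pallet_7": 40})])` returns `{"pallet_7": {"temperature": 21.5, "quantity": 40.0}}`. Adding a new kind of source then means writing one more adapter rather than touching the twin itself.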

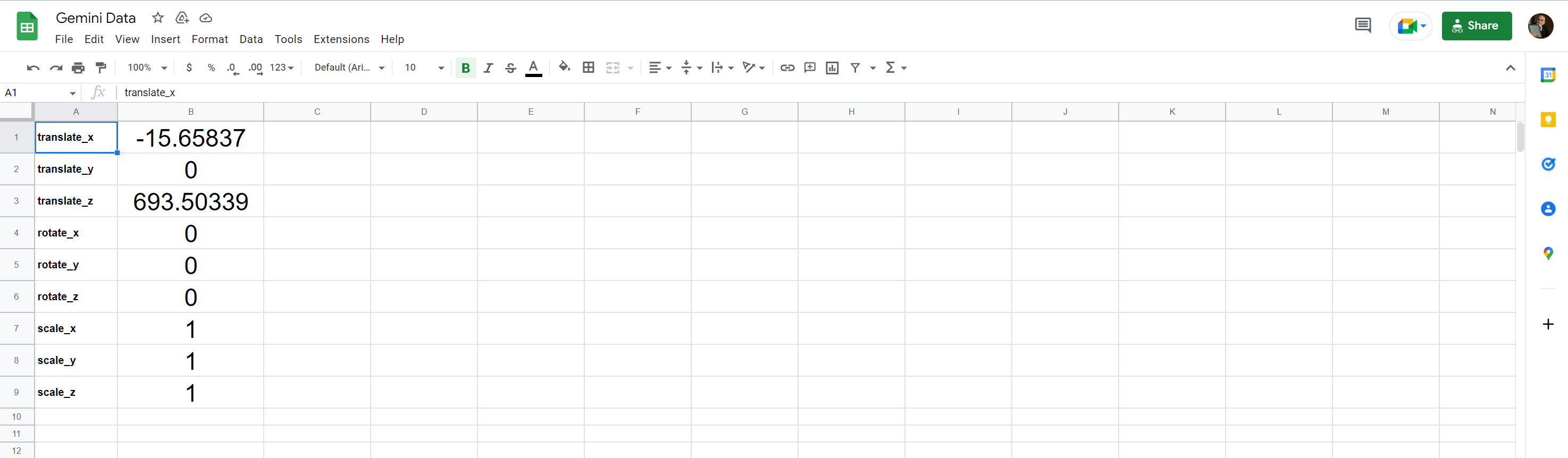

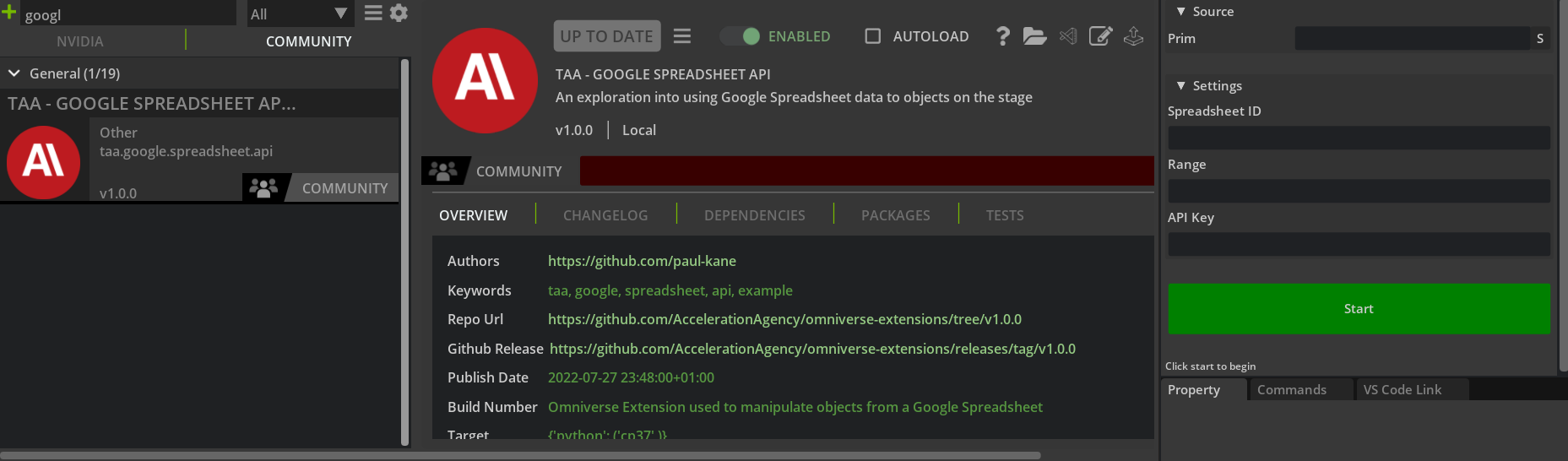

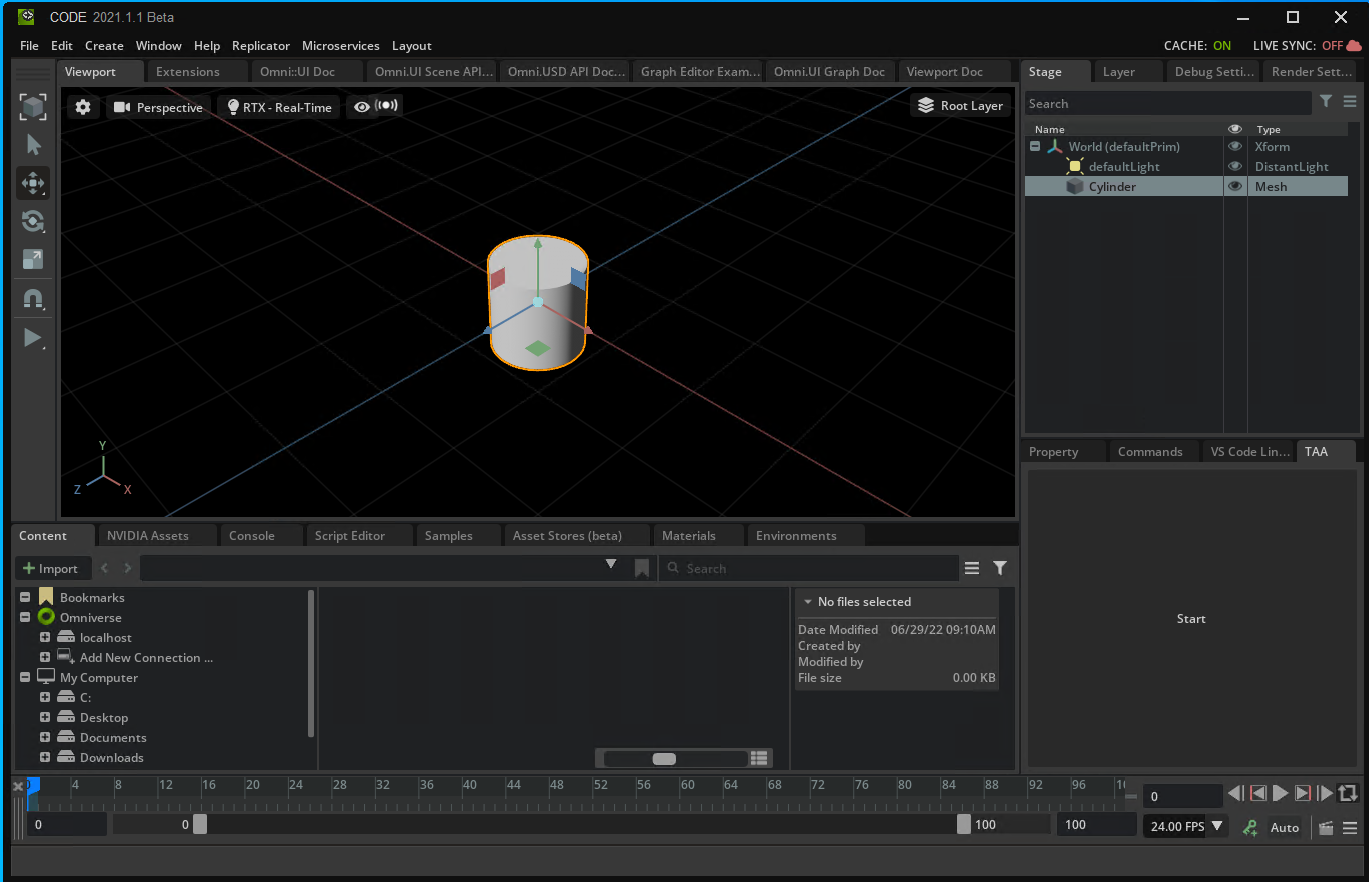

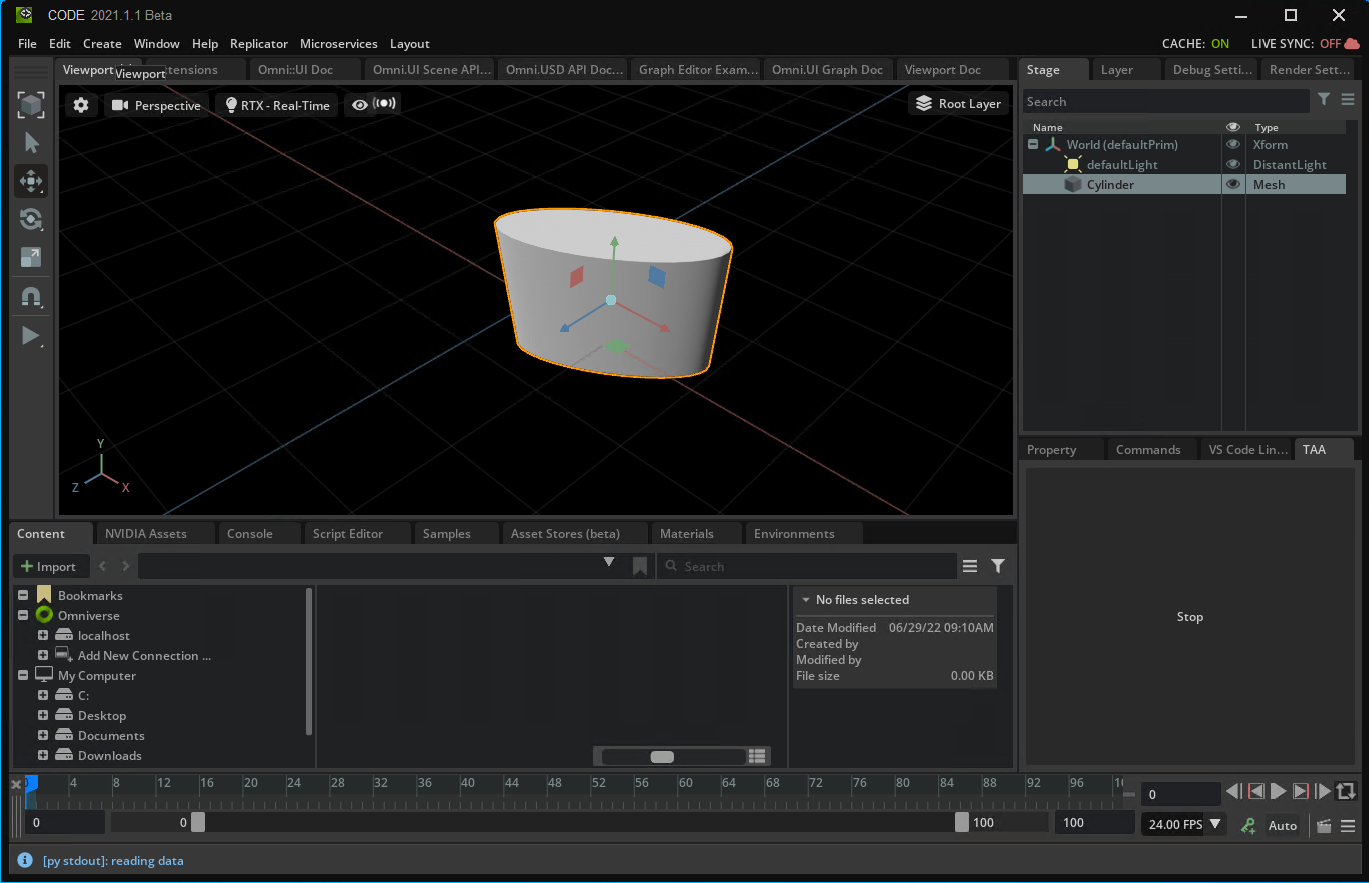

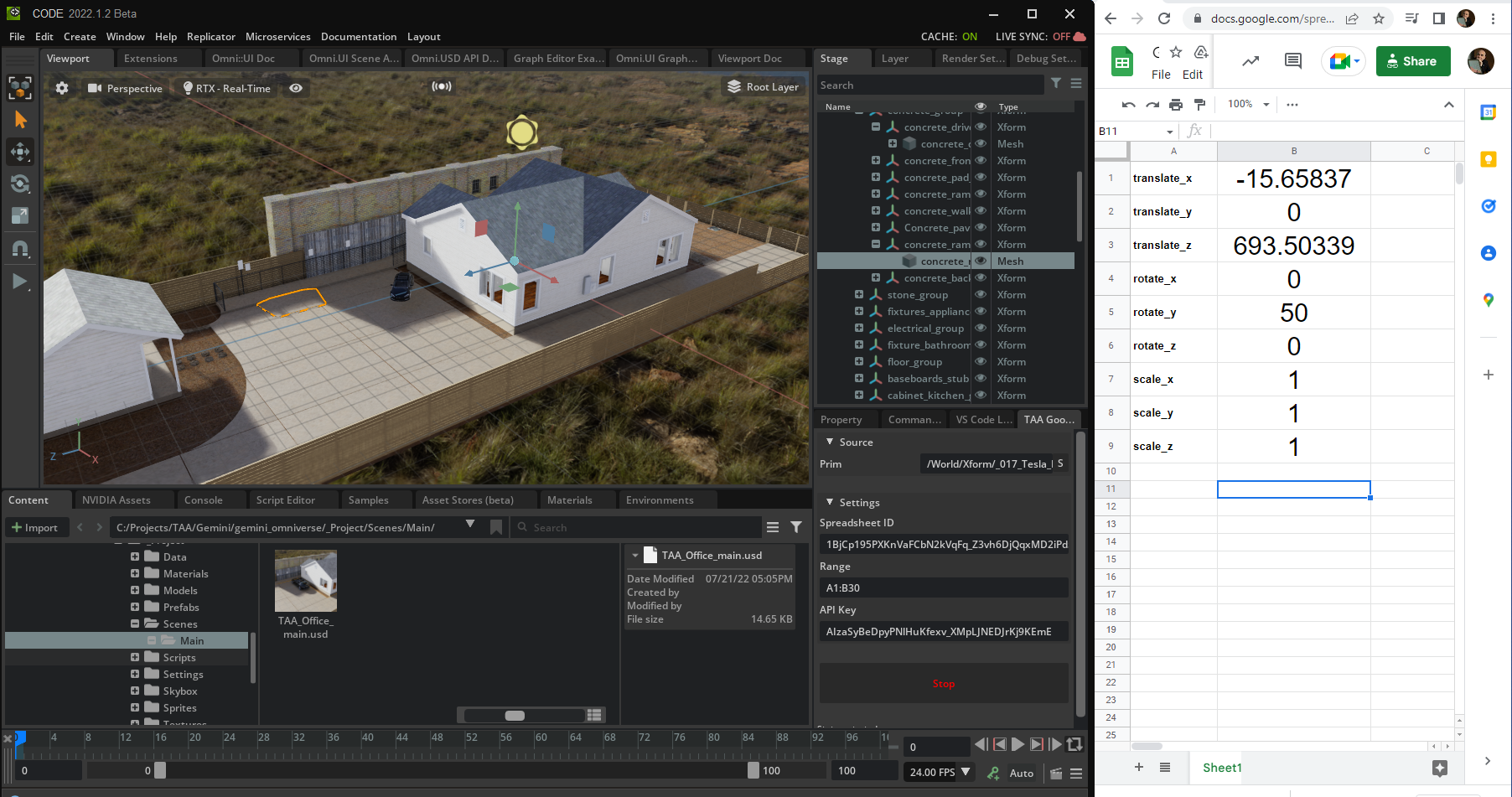

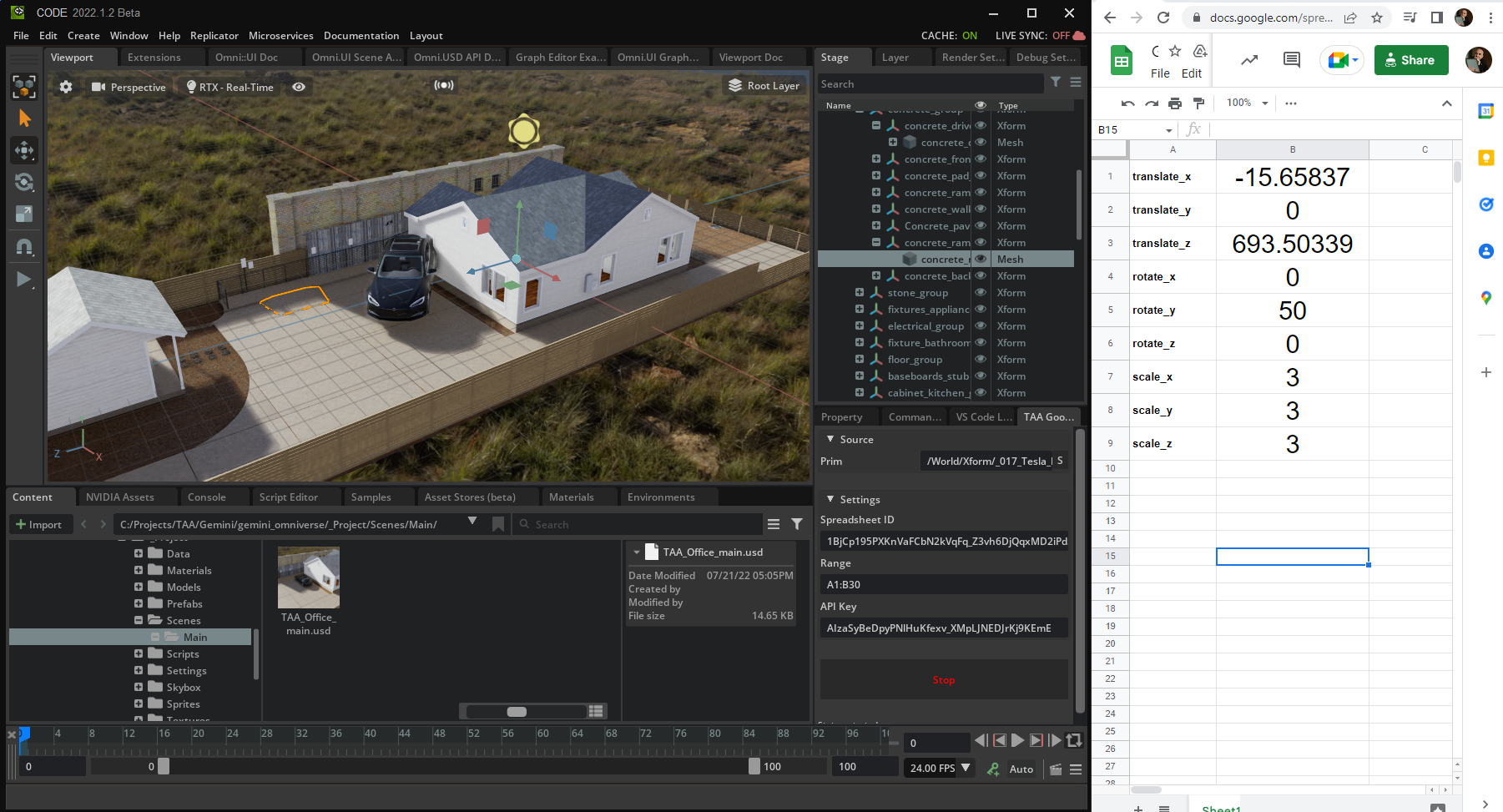

To accomplish this, the team created a simple Omniverse Kit extension, powered by a Python script, that reads data from a Google Sheet and attaches the data to an object in Omniverse Kit. The extension lets users control the location, scale, and rotation of any selected object in Omniverse applications like Omniverse Code or Omniverse Create using the metadata in the spreadsheet. The AccelerationAgency/omniverse-extensions repository is available on GitHub.
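The spreadsheet-to-transform step might look roughly like the sketch below. It assumes the sheet has been exported as CSV and uses hypothetical column names (`prim_path`, `pos_x` through `scale_z`) and a hypothetical prim path; the actual AccelerationAgency/omniverse-extensions code may be organized differently, and applying the values to a prim through Omniverse Kit or USD APIs is deliberately left out.

```python
import csv
import io
from dataclasses import dataclass
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Transform:
    translate: Vec3
    rotate_xyz: Vec3  # Euler angles in degrees (assumed convention)
    scale: Vec3


def _vec3(row: Dict[str, str], prefix: str) -> Vec3:
    """Read the hypothetical columns <prefix>_x, <prefix>_y, <prefix>_z."""
    return (float(row[f"{prefix}_x"]), float(row[f"{prefix}_y"]), float(row[f"{prefix}_z"]))


def parse_sheet_csv(text: str) -> Dict[str, Transform]:
    """Turn a CSV export of the sheet into transforms keyed by USD prim path."""
    transforms: Dict[str, Transform] = {}
    for row in csv.DictReader(io.StringIO(text)):
        transforms[row["prim_path"]] = Transform(
            translate=_vec3(row, "pos"),
            rotate_xyz=_vec3(row, "rot"),
            scale=_vec3(row, "scale"),
        )
    return transforms


SHEET = """prim_path,pos_x,pos_y,pos_z,rot_x,rot_y,rot_z,scale_x,scale_y,scale_z
/World/ParkingLot/Tesla,120.0,0.0,-35.5,0,90,0,1,1,1
"""

for path, xf in parse_sheet_csv(SHEET).items():
    # A real Kit extension would write these values onto the prim's xform ops here.
    print(path, xf.translate, xf.rotate_xyz, xf.scale)
```

Keeping the parsing separate from the Kit-specific code makes the same logic reusable for other tabular sources, such as a CRM export.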

Using database and CRM tools with the extension makes the task of manipulating object data more scalable. When building digital twins at the scale of factories, stadiums, warehouses, and even cities, hundreds, thousands, and even millions of objects may need to be manipulated rapidly.

The Acceleration Agency loaded the USD version of their office digital twin into the Omniverse stage and used the extension to select and manipulate object data.

The images below show an example of how this process was done for a Tesla in the parking lot outside the agency office. Building the extension was fairly straightforward and took a single developer only a few days. It can be extended to any data source.

Watch the extension in action with Starr Long, Executive Producer at The Acceleration Agency:

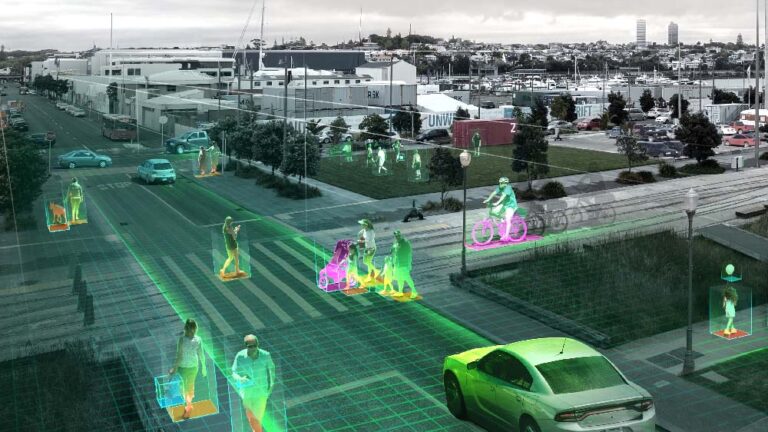

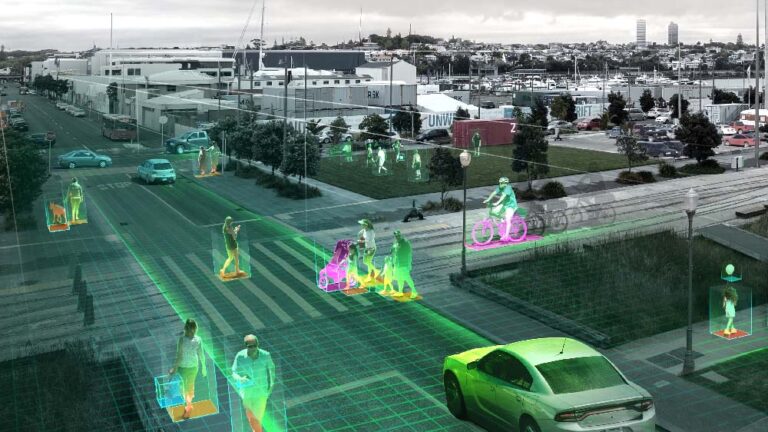

The next step for Project Gemini is to render in real time with the NVIDIA RTX Renderer and allow real-time modifications through Nucleus. Real-time modification is one of the advantages of working with USD, a powerful 3D framework and composition engine. These modifications will be coupled with historical recordings of real data, which, when played back, can be mixed with the modifications to try different scenarios. Some of the use cases the team is targeting include construction sites, hospitals, and live event venues. To learn more, visit the Project Gemini website.
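As a rough illustration of mixing recorded data with modifications, the sketch below replays hypothetical recorded frames with a what-if scenario layered on top. It uses plain Python dictionaries rather than USD; the frame format, object names, and `replay_with_overrides` are assumptions, and the override rule only loosely mirrors how a stronger USD layer's opinion wins over a weaker one.

```python
import copy
from typing import Dict, Iterator, List

Frame = Dict[str, Dict[str, float]]  # object id -> field name -> value


def replay_with_overrides(recording: List[Frame], scenario: Frame) -> Iterator[Frame]:
    """Play back recorded frames with what-if overrides applied on top.

    Scenario values win over recorded ones; the recording itself is never modified.
    """
    for frame in recording:
        merged = copy.deepcopy(frame)
        for object_id, fields in scenario.items():
            merged.setdefault(object_id, {}).update(fields)
        yield merged


recording = [
    {"hvac": {"temperature": 22.0}},
    {"hvac": {"temperature": 23.5}, "loading_door": {"open": 1.0}},
]
scenario = {"loading_door": {"open": 0.0}}  # "what if the door had stayed shut?"

for t, frame in enumerate(replay_with_overrides(recording, scenario)):
    print(t, frame)
```

Because the scenario is kept separate from the recording, several scenarios can be replayed against the same history and compared.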

Learn more about building custom USD-based applications and extensions for NVIDIA Omniverse in the Omniverse Resource Center and with these USD-specific resources.

Don’t miss NVIDIA at SIGGRAPH, August 8-11, 2022. Watch the Omniverse community livestream at SIGGRAPH on August 9 at noon, Pacific time, to learn how NVIDIA Omniverse and other design and visualization solutions are driving breakthroughs in graphics and GPU-accelerated software.

You’re also invited to enter the inaugural #ExtendOmniverse developer contest, open through August 19, 2022. Create an Omniverse Extension using Omniverse Code for a chance to win an NVIDIA RTX GPU.

Follow NVIDIA Omniverse on Instagram, Twitter, YouTube and Medium for additional resources and inspiration. Check out the Omniverse forums, and join our Discord server and Twitch channel to chat with the community.

Join us September 19-22 for a deep dive into the latest advances in edge AI, from reimagined shopping experiences to industrial automation.

Innovative technologies in AI, virtual worlds and digital humans are shaping the future of design and content creation across every industry. Experience the latest advances from NVIDIA in all these areas at SIGGRAPH, the world’s largest gathering of computer graphics experts, running Aug. 8-11. At the conference, creators, developers, engineers, researchers and students will see …

The post Dive Into AI, Avatars and the Metaverse With NVIDIA at SIGGRAPH appeared first on NVIDIA Blog.

Computer vision models see daily application for a wide variety of tasks, ranging from object recognition to image-based 3D object reconstruction. One challenging type of computer vision problem is instance-level recognition (ILR): given an image of an object, the task is not only to determine the generic category of the object (e.g., an arch), but also the specific instance of the object (“Arc de Triomphe de l’Étoile, Paris, France”).

Previously, ILR was tackled using deep learning approaches. First, a large set of images was collected. Then a deep model was trained to embed each image into a high-dimensional space where similar images have similar representations. Finally, the representation was used to solve the ILR tasks related to classification (e.g., with a shallow classifier trained on top of the embedding) or retrieval (e.g., with a nearest neighbor search in the embedding space).
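The embed-then-classify-or-retrieve pipeline described above can be sketched in a few lines of NumPy. The 2-D embeddings are toy stand-ins for the output of a trained model, and cosine similarity with a nearest-centroid classifier is one common choice, not necessarily what any particular ILR system uses.

```python
import numpy as np


def l2_normalize(x: np.ndarray) -> np.ndarray:
    """Scale each row to unit length so dot products become cosine similarities."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def retrieve(queries: np.ndarray, index: np.ndarray, k: int) -> np.ndarray:
    """Indices of the k most similar index embeddings for each query."""
    sims = l2_normalize(queries) @ l2_normalize(index).T
    return np.argsort(-sims, axis=1)[:, :k]


def nearest_centroid(queries: np.ndarray, index: np.ndarray, labels: np.ndarray) -> np.ndarray:
    """A shallow classifier on top of the embedding: nearest class centroid."""
    classes = np.unique(labels)
    centroids = np.stack([index[labels == c].mean(axis=0) for c in classes])
    sims = l2_normalize(queries) @ l2_normalize(centroids).T
    return classes[np.argmax(sims, axis=1)]


# Toy 2-D "embeddings" standing in for a trained model's output.
index = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]])
labels = np.array(["arc_de_triomphe", "arc_de_triomphe", "eiffel_tower", "eiffel_tower"])
queries = np.array([[0.95, 0.05], [0.05, 0.95]])

print(retrieve(queries, index, k=2))
print(nearest_centroid(queries, index, labels))
```

Both tasks reuse the same embedding; only the lightweight step on top changes, which is why the quality of the embedding itself matters so much.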

Since there are many different object domains in the world, e.g., landmarks, products, or artworks, capturing all of them in a single dataset and training a model that can distinguish between them is quite a challenging task. To decrease the complexity of the problem to a manageable level, the focus of research so far has been to solve ILR for a single domain at a time. To advance the research in this area, we hosted multiple Kaggle competitions focused on the recognition and retrieval of landmark images. In 2020, Amazon joined the effort and we moved beyond the landmark domain and expanded to the domains of artwork and product instance recognition. The next step is to generalize the ILR task to multiple domains.

To this end, we’re excited to announce the Google Universal Image Embedding Challenge, hosted by Kaggle in collaboration with Google Research and Google Lens. In this challenge, we ask participants to build a single universal image embedding model capable of representing objects from multiple domains at the instance level. We believe that this is the key for real-world visual search applications, such as augmenting cultural exhibits in a museum, organizing photo collections, visual commerce and more.

| Images¹ of object instances coming from multiple domains, which are represented in our dataset: apparel and accessories, packaged goods, furniture and home goods, toys, cars, landmarks, storefronts, dishes, artwork, memes and illustrations. |

Degrees of Variation in Different Domains

To represent objects from a large number of domains, we require one model to learn many domain-specific subtasks (e.g., filtering different kinds of noise or focusing on a specific detail), which can only be learned from a semantically and visually diverse collection of images. Addressing each degree of variation poses a new challenge for both image collection and model training.

The first sort of variation comes from the fact that while some domains contain unique objects in the world (landmarks, artwork, etc.), others contain objects that may have many copies (clothing, furniture, packaged goods, food, etc.). Because a landmark is always placed at the same location, the surrounding context may be useful for recognition. In contrast, a product, say a phone, even of a specific model and color, may have millions of physical instances and thus appear in many surrounding contexts.

Another challenge comes from the fact that a single object may look different depending on the point of view, lighting conditions, occlusion or deformations (e.g., a dress worn by a person may look very different from one on a hanger). For a model to learn invariance to all of these visual modes, all of them should be captured by the training data.

Additionally, similarities between objects differ across domains. For example, for a representation to be useful in the product domain, it must be able to distinguish very fine-grained details between similar-looking products belonging to two different brands. In the food domain, however, the same dish (e.g., spaghetti bolognese) cooked by two chefs may look quite different, yet the ability to distinguish spaghetti bolognese from other dishes may be sufficient for the model to be useful. Even so, a high-quality vision model should assign more similar representations to more visually similar renditions of a dish.


| Domain | Landmark | Apparel |
| --- | --- | --- |
| Instance name | Empire State Building² | Cycling jerseys with Android logo³ |
| Which physical objects belong to the instance class? | Single instance in the world | Many physical instances; may differ in size or pattern (e.g., a patterned cloth cut differently) |
| What are the possible views of the object? | Appearance variation only based on capture conditions (e.g., illumination or viewpoint); limited number of common external views; possibility of many internal views | Deformable appearance (e.g., worn or not); limited number of common views: front, back, side |
| What are the surroundings and are they useful for recognition? | Surrounding context does not vary much other than daily and yearly cycles; may be useful for verifying the object of interest | Surrounding context can change dramatically due to differences in environment, additional pieces of clothing, or accessories partially occluding the clothing of interest (e.g., a jacket or a scarf) |
| What may be tricky cases that do not belong to the instance class? | Replicas of landmarks (e.g., Eiffel Tower in Las Vegas), souvenirs | Same piece of apparel in a different material or color; visually very similar pieces with a small distinguishing detail (e.g., a small brand logo); different pieces of apparel worn by the same model |

| Variation among domains for landmark and apparel examples. |

Learning Multi-domain Representations

After a collection of images covering a variety of domains is created, the next challenge is to train a single, universal model. Some features and tasks, such as representing color, are useful across many domains, so adding training data from any domain will likely help the model get better at distinguishing colors. Other features may be specific to certain domains, so adding training data from other domains may degrade the model’s performance. For example, while learning to find near duplicates may be very useful for 2D artwork, it may degrade performance on clothing, where deformed and occluded instances need to be recognized.

The large variety of possible input objects and tasks to be learned requires novel approaches for selecting, augmenting, cleaning and weighting the training data. New approaches to model training and tuning, and even novel architectures, may also be required.
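As one example of weighting training data across domains, the sketch below computes per-example sampling weights that interpolate between the natural domain distribution and a fully balanced one. This exponent-based rebalancing is a common heuristic chosen for illustration; it is not a method prescribed by the challenge, and `alpha` and the toy domain counts are hypothetical.

```python
import random
from collections import Counter
from typing import Dict, List


def domain_sampling_weights(domains: List[str], alpha: float = 0.5) -> Dict[str, float]:
    """Per-example sampling weight for each domain (illustrative heuristic).

    Each domain receives total probability mass proportional to n ** (1 - alpha),
    where n is its example count: alpha = 0 keeps the natural distribution,
    alpha = 1 gives every domain equal mass, and values in between partially
    rebalance toward rare domains.
    """
    counts = Counter(domains)
    mass = {d: n ** (1.0 - alpha) for d, n in counts.items()}
    total = sum(mass.values())
    # Spread each domain's mass evenly over its examples.
    return {d: mass[d] / total / counts[d] for d in counts}


# Toy dataset: 90 landmark images and 10 apparel images.
domains = ["landmark"] * 90 + ["apparel"] * 10
weights = domain_sampling_weights(domains, alpha=1.0)
batch = random.choices(range(len(domains)), weights=[weights[d] for d in domains], k=8)
print(sorted(Counter(domains[i] for i in batch).items()))
```

Rebalancing helps rare domains get seen, but as noted above it can also amplify domain-specific features that hurt other domains, so the right setting is an empirical question.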

Universal Image Embedding Challenge

To help motivate the research community to address these challenges, we are hosting the Google Universal Image Embedding Challenge. The challenge was launched on Kaggle in July and will be open until October, with cash prizes totaling $50k. The winning teams will be invited to present their methods at the Instance-Level Recognition workshop at ECCV 2022.

Participants will be evaluated on a retrieval task on a dataset of ~5,000 test query images and ~200,000 index images, from which similar images are retrieved. In contrast to ImageNet, which includes categorical labels, the images in this dataset are labeled at the instance level.
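Because the labels are at the instance level, a retrieved index image counts as correct only if it shows the same instance as the query. The scoring metric is not specified above, so the sketch below assumes a precision@k-style score (k = 5, dividing by min(k, number of relevant images)) purely for illustration; the official definition is on the Kaggle competition page. The query and image ids are hypothetical.

```python
from typing import Dict, List, Set


def precision_at_k(retrieved: List[str], relevant: Set[str], k: int = 5) -> float:
    """Share of the top-k retrieved index images that match the query's instance.

    Dividing by min(k, len(relevant)) (an assumption here) avoids penalizing
    queries that have fewer than k matching index images.
    """
    if not relevant:
        raise ValueError("each query needs at least one relevant index image")
    hits = sum(1 for image_id in retrieved[:k] if image_id in relevant)
    return hits / min(k, len(relevant))


def mean_precision_at_k(
    results: Dict[str, List[str]], ground_truth: Dict[str, Set[str]], k: int = 5
) -> float:
    """Average precision@k over all queries."""
    scores = [precision_at_k(results[q], ground_truth[q], k) for q in results]
    return sum(scores) / len(scores)


# Hypothetical ranked results for two queries (index image ids).
results = {
    "query_a": ["img1", "img9", "img2", "img7", "img8"],
    "query_b": ["img3", "img4", "img5", "img6", "img0"],
}
ground_truth = {
    "query_a": {"img1", "img2"},  # only two index images show this instance
    "query_b": {"img3", "img5", "img10", "img11", "img12", "img13"},
}
print(mean_precision_at_k(results, ground_truth))
```

Here the first query scores 1.0 (both of its two matches are retrieved) and the second 0.4 (two of five slots), for a mean of 0.7.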

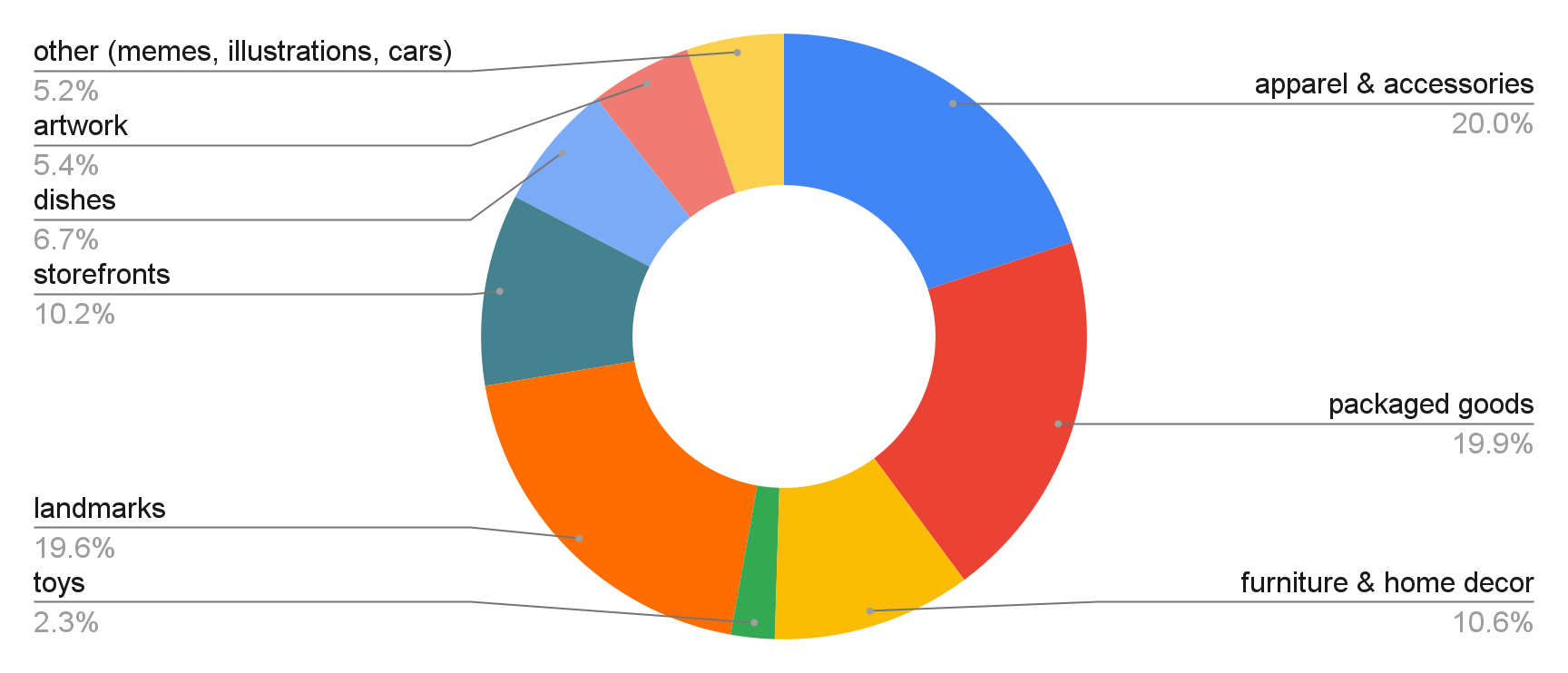

The evaluation data for the challenge is composed of images from the following domains: apparel and accessories, packaged goods, furniture and home goods, toys, cars, landmarks, storefronts, dishes, artwork, memes and illustrations.

| Distribution of domains of query images. |

We invite researchers and machine learning enthusiasts to participate in the Google Universal Image Embedding Challenge and join the Instance-Level Recognition workshop at ECCV 2022. We hope the challenge and the workshop will advance state-of-the-art techniques on multi-domain representations.

Acknowledgement

The core contributors to this project are Andre Araujo, Boris Bluntschli, Bingyi Cao, Kaifeng Chen, Mário Lipovský, Grzegorz Makosa, Mojtaba Seyedhosseini and Pelin Dogan Schönberger. We would like to thank Sohier Dane, Will Cukierski and Maggie Demkin for their help organizing the Kaggle challenge, as well as our ECCV workshop co-organizers Tobias Weyand, Bohyung Han, Shih-Fu Chang, Ondrej Chum, Torsten Sattler, Giorgos Tolias, Xu Zhang, Noa Garcia, Guangxing Han, Pradeep Natarajan and Sanqiang Zhao. Furthermore, we are thankful to Igor Bonaci, Tom Duerig, Vittorio Ferrari, Victor Gomes, Futang Peng and Howard Zhou, who gave us feedback, ideas and support at various points of this project.

1 Image credits: Chris Schrier, CC-BY; Petri Krohn, GNU Free Documentation License; Drazen Nesic, CC0; Marco Verch Professional Photographer, CC-BY; Grendelkhan, CC-BY; Bobby Mikul, CC0; Vincent Van Gogh, CC0; pxhere.com, CC0; Smart Home Perfected, CC-BY.

2 Image credit: Bobby Mikul, CC0.

3 Image credit: Chris Schrier, CC-BY.

Pinterest has engineered a way to serve its photo-sharing community more of the images they love. The social-image service, with more than 400 million monthly active users, has trained bigger recommender models for improved accuracy at predicting people’s interests. Pinterest handles hundreds of millions of user requests an hour on any given day. And it …

The post Pinterest Boosts Home Feed Engagement 16% With Switch to GPU Acceleration of Recommenders appeared first on NVIDIA Blog.

Imagine hiking to a lake on a summer day, sitting under a shady tree and watching the water gleam under the sun. In this scene, the differences between light and shadow are examples of direct and indirect lighting. The sun shines onto the lake and the trees, making the water look like it’s shimmering …

The post What Is Direct and Indirect Lighting? appeared first on NVIDIA Blog.

It’s the first GFN Thursday of the month and you know the drill: GeForce NOW is bringing a big batch of games to the cloud. Get ready for 38 exciting titles like Saints Row and Rumbleverse arriving on the GeForce NOW library in August. Members can kick off the month streaming 13 new games …

The post Rush Into August This GFN Thursday With 38 New Games on GeForce NOW appeared first on NVIDIA Blog.

Deep learning models for visual tasks (e.g., image classification) are usually trained end-to-end with data from a single visual domain (e.g., natural images or computer-generated images). Typically, an application that completes visual tasks for multiple domains would need to build a separate model for each domain, train each one independently (meaning no data is shared between domains), and then, at inference time, have each model process its domain-specific input data. However, the early layers of these models generate similar features, even for different domains, so it can be more efficient to train multiple domains jointly, reducing latency and power consumption as well as the memory needed to store each model’s parameters. This approach is referred to as multi-domain learning (MDL). Moreover, an MDL model can also outperform single-domain models due to positive knowledge transfer, which occurs when additional training on one domain actually improves performance on another. The opposite, negative knowledge transfer, can also occur, depending on the approach and the specific combination of domains involved. While previous work on MDL has proven the effectiveness of jointly learning tasks across multiple domains, it relied on a hand-crafted model architecture that is inefficient to apply to other work.

In “Multi-path Neural Networks for On-device Multi-domain Visual Classification”, we propose a general MDL model that can: 1) achieve high accuracy efficiently (keeping the number of parameters and FLOPS low), 2) learn to enhance positive knowledge transfer while mitigating negative transfer, and 3) effectively optimize the joint model while handling various domain-specific difficulties. To that end, we propose a multi-path neural architecture search (MPNAS) approach to build a unified model with a heterogeneous network architecture for multiple domains. MPNAS extends the efficient neural architecture search (NAS) approach from single-path search to multi-path search by jointly finding an optimal path for each domain. We also introduce a new loss function, called adaptive balanced domain prioritization (ABDP), that adapts to domain-specific difficulties to help train the model efficiently. The resulting MPNAS approach is efficient and scalable: the final model maintains performance while reducing model size and FLOPS by 78% and 32%, respectively, compared to a single-domain approach.

Multi-Path Neural Architecture Search

To encourage positive knowledge transfer and avoid negative transfer, traditional solutions build an MDL model in which domains share most of the layers, which learn the features common across domains (called feature extraction), followed by a few domain-specific layers on top. However, such a homogeneous approach to feature extraction cannot handle domains with significantly different features (e.g., objects in natural images and art paintings). On the other hand, handcrafting a unified heterogeneous architecture for each MDL model is time-consuming and requires domain-specific knowledge.
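The traditional homogeneous design can be sketched with a toy NumPy model: every domain runs through the same backbone layers and only the final head differs. Layer sizes, domain names, and class counts are hypothetical. Counting parameters makes the efficiency argument concrete: sharing the backbone saves one backbone's worth of parameters per additional domain, but it also forces every domain through an identical feature extractor.

```python
import numpy as np

rng = np.random.default_rng(0)


def dense(in_dim: int, out_dim: int) -> dict:
    """A fully connected layer with randomly initialized weights."""
    return {"w": rng.normal(0.0, 0.1, (in_dim, out_dim)), "b": np.zeros(out_dim)}


class SharedBackboneMDL:
    """Homogeneous MDL: all domains share one feature extractor,
    followed by a small domain-specific classification head."""

    def __init__(self, in_dim: int, hidden: int, classes_per_domain: dict) -> None:
        self.backbone = [dense(in_dim, hidden), dense(hidden, hidden)]
        self.heads = {d: dense(hidden, n) for d, n in classes_per_domain.items()}

    def forward(self, x: np.ndarray, domain: str) -> np.ndarray:
        for layer in self.backbone:  # identical path for every domain
            x = np.maximum(x @ layer["w"] + layer["b"], 0.0)  # ReLU
        head = self.heads[domain]
        return x @ head["w"] + head["b"]  # logits for this domain's classes

    def num_params(self) -> int:
        layers = self.backbone + list(self.heads.values())
        return sum(layer["w"].size + layer["b"].size for layer in layers)


def independent_params(in_dim: int, hidden: int, classes_per_domain: dict) -> int:
    """Parameter count if every domain trained its own copy of the backbone."""
    backbone = (in_dim * hidden + hidden) + (hidden * hidden + hidden)
    return sum(backbone + hidden * n + n for n in classes_per_domain.values())


domains = {"natural_images": 100, "art_paintings": 10}  # hypothetical class counts
model = SharedBackboneMDL(in_dim=64, hidden=128, classes_per_domain=domains)
print(model.forward(np.ones((2, 64)), "art_paintings").shape)
print(model.num_params(), independent_params(64, 128, domains))
```

The fixed `forward` path is exactly the limitation described above: there is no way for the art domain to use different early layers than the natural-image domain.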

NAS is a powerful paradigm for automatically designing deep learning architectures. It defines a search space, made up of various potential building blocks that could be part of the final model. The search algorithm finds the best candidate architecture from the search space that optimizes the model objectives, e.g., classification accuracy. Recent NAS approaches (e.g., TuNAS) have meaningfully improved search efficiency by using end-to-end path sampling, which enables us to scale NAS from single domains to MDL.

Inspired by TuNAS, MPNAS builds the MDL model architecture in two stages: search and training. In the search stage, to find an optimal path for each domain jointly, MPNAS creates an individual reinforcement learning (RL) controller for each domain, which samples an end-to-end path (from input layer to output layer) from the supernetwork (i.e., the superset of all the possible subnetworks between the candidate nodes defined by the search space). Over multiple iterations, all the RL controllers update the path to optimize the RL rewards across all domains. At the end of the search stage, we obtain a subnetwork for each domain. Finally, all the subnetworks are combined to build a heterogeneous architecture for the MDL model, shown below.

Since the subnetwork for each domain is searched independently, the building block in each layer can be shared by multiple domains (i.e., dark gray nodes), used by a single domain (i.e., light gray nodes), or not used by any subnetwork (i.e., dotted nodes). The path for each domain can also skip any layer during search. Because each subnetwork can freely select which blocks to use along its path in a way that optimizes performance (rather than, e.g., arbitrarily designating which layers are homogeneous and which are domain-specific), the output network is both heterogeneous and efficient.

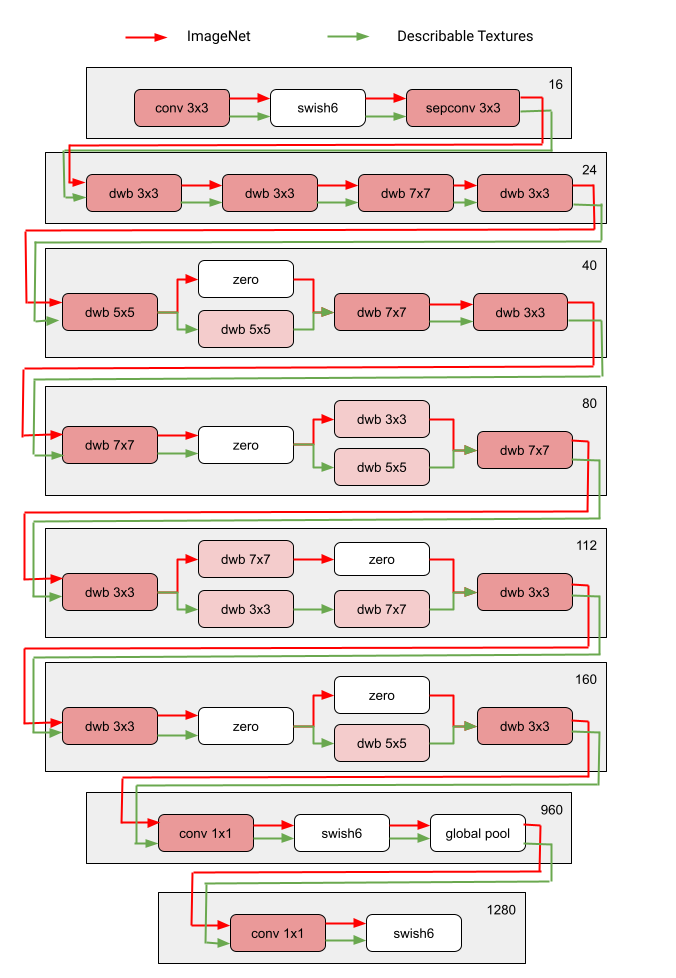
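The search stage can be illustrated with a toy version of per-domain RL controllers. This is a simplified sketch, not the MPNAS or TuNAS implementation: each controller is a set of per-layer softmax distributions trained with plain REINFORCE, the block names are hypothetical, and `toy_reward` replaces the real reward (which would come from evaluating the sampled subnetwork of a trained supernetwork) with a match against a hidden preferred path.

```python
import numpy as np

NUM_LAYERS = 4
BLOCKS = ["dwb_k3", "dwb_k5", "zero"]  # hypothetical candidates; "zero" skips a layer


class PathController:
    """Per-domain controller: an independent categorical choice per layer."""

    def __init__(self, rng: np.random.Generator) -> None:
        self.logits = np.zeros((NUM_LAYERS, len(BLOCKS)))
        self.baseline = 0.0
        self.rng = rng

    def probs(self) -> np.ndarray:
        z = np.exp(self.logits - self.logits.max(axis=1, keepdims=True))
        return z / z.sum(axis=1, keepdims=True)

    def sample(self) -> list:
        """Sample an end-to-end path: one block index per layer."""
        p = self.probs()
        return [int(self.rng.choice(len(BLOCKS), p=p[i])) for i in range(NUM_LAYERS)]

    def update(self, path: list, reward: float, lr: float = 0.1, decay: float = 0.9) -> None:
        """REINFORCE step with a moving-average reward baseline."""
        advantage = reward - self.baseline
        self.baseline = decay * self.baseline + (1 - decay) * reward
        p = self.probs()
        for layer, choice in enumerate(path):
            grad = -p[layer]  # gradient of log-softmax: one_hot(choice) - probs
            grad[choice] += 1.0
            self.logits[layer] += lr * advantage * grad


def toy_reward(path: list, preferred: list) -> float:
    """Hypothetical stand-in for the validation accuracy of the sampled subnetwork."""
    return float(np.mean([a == b for a, b in zip(path, preferred)]))


def search(preferred_paths: dict, steps: int = 3000, seed: int = 0) -> dict:
    rng = np.random.default_rng(seed)
    controllers = {d: PathController(rng) for d in preferred_paths}
    for _ in range(steps):
        for domain, ctrl in controllers.items():
            path = ctrl.sample()
            ctrl.update(path, toy_reward(path, preferred_paths[domain]))
    # Final subnetwork per domain: the most likely block at each layer.
    return {d: [int(i) for i in c.probs().argmax(axis=1)] for d, c in controllers.items()}


preferred = {"imagenet": [0, 1, 0, 2], "textures": [0, 1, 1, 2]}
for domain, path in search(preferred).items():
    print(domain, [BLOCKS[i] for i in path])
```

Each domain's controller converges to its own path; in this toy setup the two paths agree on three of the four layers, which is the kind of overlap a combined heterogeneous model can exploit.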

The figure below shows the searched architecture for two visual domains among the ten domains of the Visual Domain Decathlon challenge. The subnetworks of these two highly related domains (one red, the other green) share a majority of building blocks along their overlapping paths, but there are still some differences.

| Architecture blocks of two domains (ImageNet and Describable Textures) among the ten domains of the Visual Domain Decathlon challenge. The red and green paths represent the subnetworks of ImageNet and Describable Textures, respectively. Dark pink nodes represent blocks shared by multiple domains; light pink nodes represent blocks used by a single path. The model is built on a MobileNetV3-like search space. The “dwb” block in the figure represents the dwbottleneck block, and the “zero” block indicates that the subnetwork skips that block. |

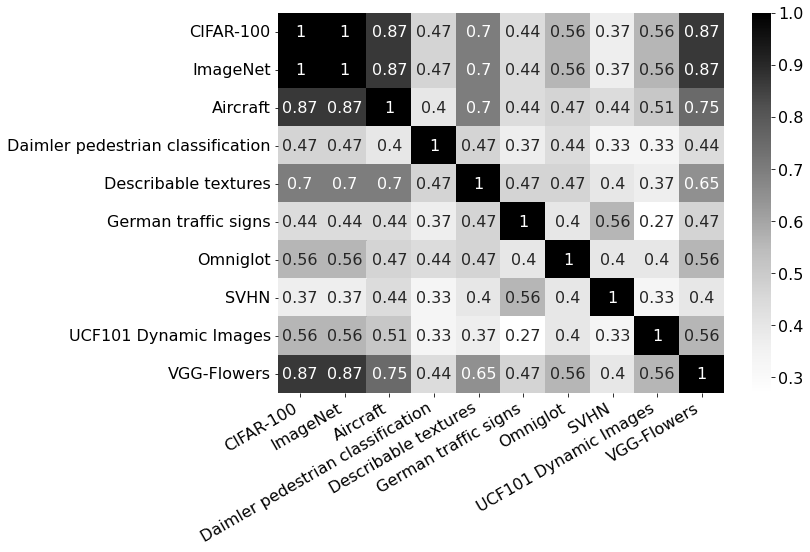
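Once each domain has a path, combining them amounts to counting how many paths use each (layer, block) node. The sketch below labels nodes as shared, single-domain, or unused, mirroring the node types in the figures; the paths and block names are hypothetical, and dropping unused nodes from the final model is an assumption about how the combination is done.

```python
from collections import Counter
from typing import Dict, List, Tuple

ZERO = "zero"  # a path that picks "zero" at a layer skips that layer


def classify_blocks(
    paths: Dict[str, List[str]], candidates: List[str]
) -> Dict[Tuple[int, str], str]:
    """Label each (layer, block) node as shared, single-domain, or unused."""
    num_layers = len(next(iter(paths.values())))
    usage = Counter(
        (layer, block)
        for path in paths.values()
        for layer, block in enumerate(path)
        if block != ZERO
    )
    labels = {}
    for layer in range(num_layers):
        for block in candidates:
            n = usage[(layer, block)]
            labels[(layer, block)] = "shared" if n > 1 else "single-domain" if n == 1 else "unused"
    return labels


paths = {"imagenet": ["dwb_k3", "dwb_k5", ZERO], "textures": ["dwb_k3", "dwb_k3", "dwb_k5"]}
labels = classify_blocks(paths, candidates=["dwb_k3", "dwb_k5"])
# Only shared and single-domain blocks need to be instantiated in the combined model.
kept = sorted(node for node, kind in labels.items() if kind != "unused")
print(kept)
```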

Below we show the path similarity between domains among the ten domains of the Visual Domain Decathlon challenge. The similarity is measured by the Jaccard similarity score between the subnetworks of each domain, where higher means the paths are more similar. As one might expect, domains that are more similar share more nodes in the paths generated by MPNAS, which is also a signal of strong positive knowledge transfer. For example, the paths for similar domains (like ImageNet, CIFAR-100, and VGG Flower, which all include objects in natural images) have high scores, while the paths for dissimilar domains (like Daimler Pedestrian Classification and UCF101 Dynamic Images, which include pedestrians in grayscale images and human activity in natural color images, respectively) have low scores.

| Matrix of Jaccard similarity scores between the paths for the ten domains. Scores range from 0 to 1; a greater value indicates that two paths share more nodes. |
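The Jaccard score between two paths is the size of the intersection of their node sets divided by the size of their union. The sketch below assumes a node is a (layer, block) pair and that skipped layers contribute no nodes; the domain paths are made up to mimic the pattern described above, where similar domains overlap and dissimilar ones do not.

```python
from typing import Dict, List, Set, Tuple

Node = Tuple[int, str]  # (layer index, block name)


def path_nodes(path: List[str]) -> Set[Node]:
    """Nodes a path actually uses; layers it skips ("zero") contribute nothing."""
    return {(layer, block) for layer, block in enumerate(path) if block != "zero"}


def jaccard(a: Set[Node], b: Set[Node]) -> float:
    """|A ∩ B| / |A ∪ B|, defined as 1.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def similarity_matrix(paths: Dict[str, List[str]]) -> Dict[Tuple[str, str], float]:
    nodes = {name: path_nodes(path) for name, path in paths.items()}
    return {(x, y): jaccard(nodes[x], nodes[y]) for x in nodes for y in nodes}


# Hypothetical searched paths over a 4-layer search space.
paths = {
    "imagenet": ["k3", "k5", "k3", "zero"],
    "cifar100": ["k3", "k5", "k5", "zero"],
    "daimler": ["k5", "k3", "zero", "k3"],
}
matrix = similarity_matrix(paths)
print(matrix[("imagenet", "cifar100")], matrix[("imagenet", "daimler")])
```

ImageNet and CIFAR-100 share two of the four distinct nodes they use between them (score 0.5), while the Daimler path shares none with ImageNet (score 0.0).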

Training a Heterogeneous Multi-domain Model

In the second stage, the model resulting from MPNAS is trained from scratch for all domains. For this to work, it is necessary to define a unified objective function for all the domains. To successfully handle a large variety of domains, we designed an algorithm, called adaptive balanced domain prioritization (ABDP), that adapts throughout the learning process so that losses are balanced across domains.

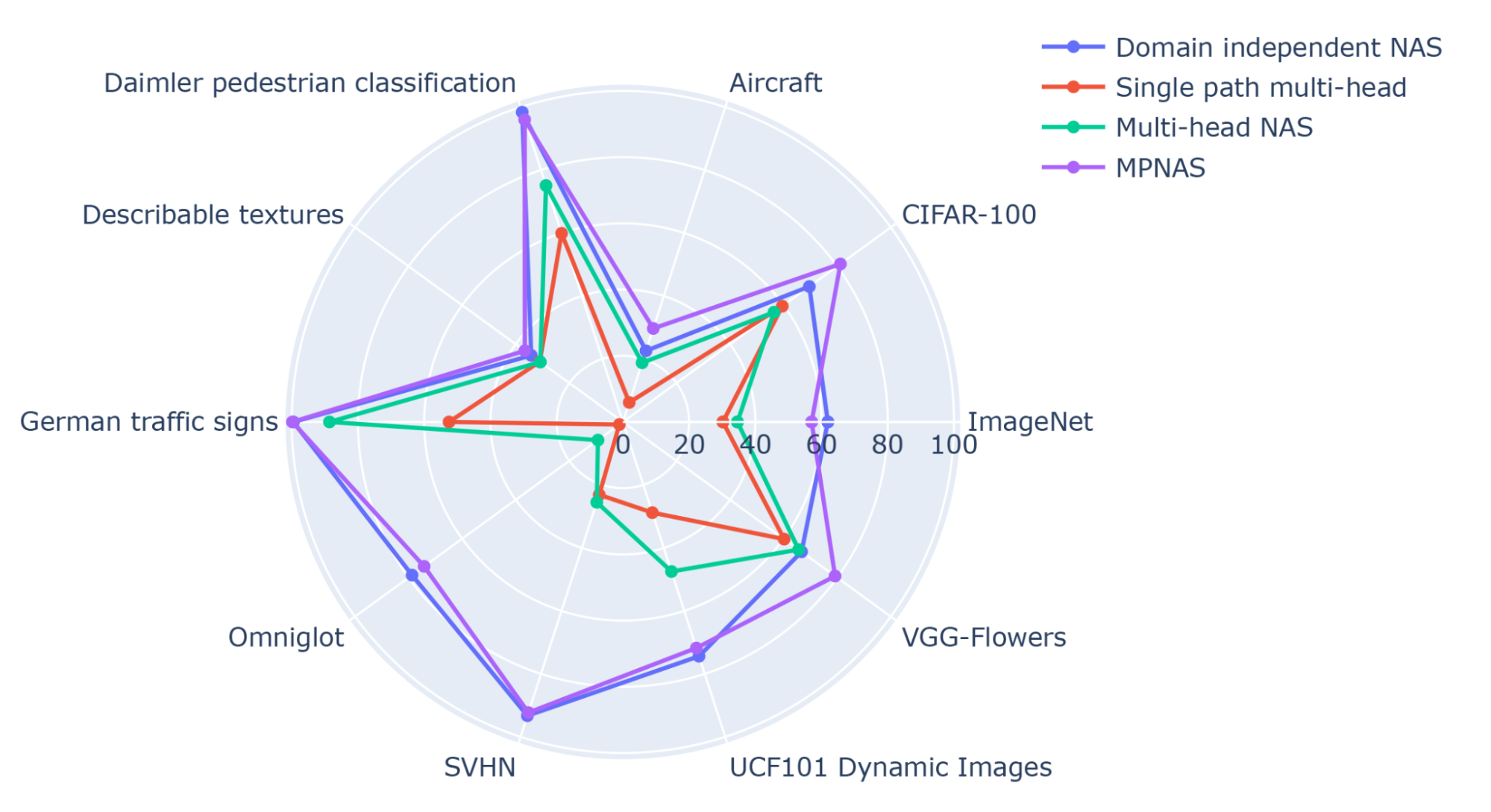
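The ABDP formula itself is not given here, so the sketch below uses a swapped-in, generic scheme to show the shape of a unified objective with adaptive domain weights: a softmax over per-domain losses, rescaled so that equal losses give equal weights. Treat it as an illustration of adaptive loss balancing, not as ABDP itself; the loss values are hypothetical.

```python
import numpy as np


def adaptive_domain_weights(losses: np.ndarray, temperature: float = 1.0) -> np.ndarray:
    """Illustrative (not ABDP): weight each domain by a softmax over its current loss.

    Domains with higher loss get more weight; weights sum to the number of
    domains, so equal losses give every domain a weight of 1.
    """
    z = losses / temperature
    w = np.exp(z - z.max())  # subtract the max for numerical stability
    return len(losses) * w / w.sum()


def joint_objective(losses: np.ndarray, temperature: float = 1.0) -> float:
    """Unified objective: weighted mean of per-domain losses.

    In a real training loop the weights would be treated as constants
    (no gradient flows through them) and recomputed as training progresses.
    """
    weights = adaptive_domain_weights(losses, temperature)
    return float(np.mean(weights * losses))


per_domain_loss = np.array([2.0, 0.5, 0.5])  # e.g., one domain is lagging behind
print(adaptive_domain_weights(per_domain_loss))
print(joint_objective(per_domain_loss))
```

A larger `temperature` flattens the weights toward uniform averaging; a smaller one focuses training more aggressively on the hardest domain.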

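Below we show the accuracy, model size, and FLOPS of the model trained in different settings. We compare MPNAS to three other approaches:

- Domain independent NAS: a separate model is searched and trained for each domain.
- Single path multi-head: all domains share a single backbone, with a separate classification head for each domain.
- Multi-head NAS: a single backbone architecture is searched for all domains, with a separate classification head for each domain.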

From the results, we can observe that domain independent NAS requires building a separate model for each domain, resulting in a large model size. Although single path multi-head and multi-head NAS can reduce the model size and FLOPS significantly, forcing the domains to share the same backbone introduces negative knowledge transfer, decreasing overall accuracy.

| Model | Number of parameters (ratio) | GFLOPS | Average top-1 accuracy (%) |
| --- | --- | --- | --- |
| Domain independent NAS | 5.7x | 1.08 | 69.9 |
| Single path multi-head | 1.0x | 0.09 | 35.2 |
| Multi-head NAS | 0.7x | 0.04 | 45.2 |
| MPNAS | 1.3x | 0.73 | 71.8 |

| Number of parameters, gigaFLOPS, and top-1 accuracy (%) of MDL models on the Visual Decathlon dataset. All methods are built on the MobileNetV3-like search space. |
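The relative savings quoted in the introduction can be checked against the table. The sketch assumes that the single-domain approach mentioned there corresponds to the domain independent NAS row.

```python
# Values copied from the table above: (parameter ratio, GFLOPS, average top-1 %).
table = {
    "Domain independent NAS": (5.7, 1.08, 69.9),
    "Single path multi-head": (1.0, 0.09, 35.2),
    "Multi-head NAS": (0.7, 0.04, 45.2),
    "MPNAS": (1.3, 0.73, 71.8),
}

params_ind, flops_ind, acc_ind = table["Domain independent NAS"]
params_mp, flops_mp, acc_mp = table["MPNAS"]

param_reduction = 1 - params_mp / params_ind  # fraction of parameters saved
flops_reduction = 1 - flops_mp / flops_ind    # fraction of FLOPS saved
accuracy_gain = acc_mp - acc_ind              # in percentage points

print(f"parameters: -{param_reduction:.0%}, FLOPS: -{flops_reduction:.0%}, accuracy: +{accuracy_gain:.1f} pp")
```

It prints `parameters: -77%, FLOPS: -32%, accuracy: +1.9 pp`. The 77% computed from the rounded ratios 1.3x and 5.7x is consistent with the reported 78% once that rounding is taken into account, and the FLOPS reduction matches the reported 32%.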

MPNAS can build a small and efficient model while still maintaining high overall accuracy. The average accuracy of MPNAS is even 1.9 percentage points higher than that of the domain independent NAS approach, since the model enables positive knowledge transfer. The figure below compares the per-domain top-1 accuracy of these approaches.

| Top-1 accuracy of each Visual Decathlon domain. |

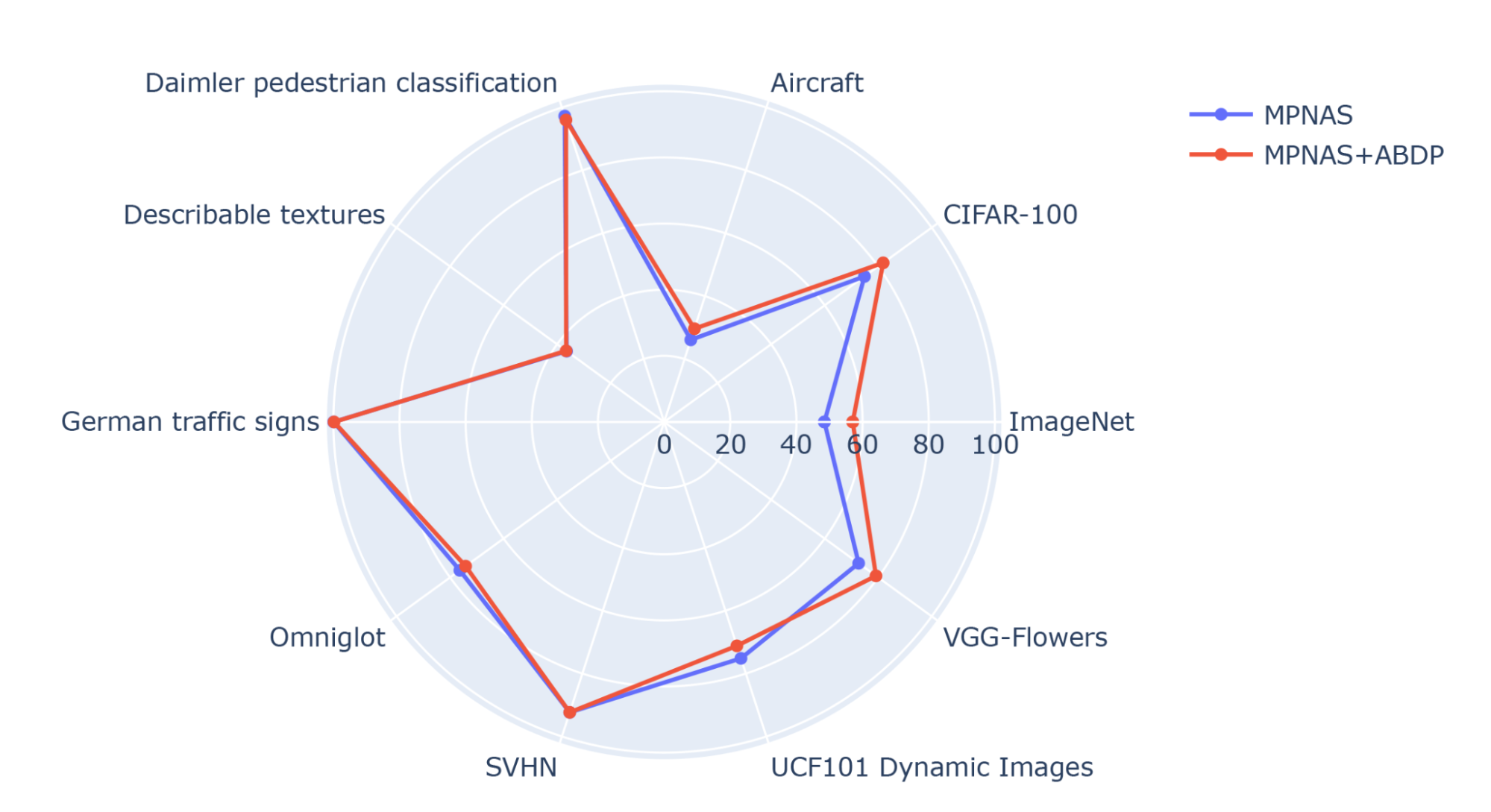

Our evaluation shows that using ABDP in both the search and training stages improves top-1 accuracy from 69.96% to 71.78% (+1.81 percentage points).

| Top-1 accuracy for each Visual Decathlon domain trained by MPNAS with and without ABDP. |

Future Work

We find that MPNAS is an efficient solution for building a heterogeneous network that addresses data imbalance, domain diversity, negative transfer, domain scalability, and the large search space of possible parameter-sharing strategies in MDL. Because it uses a MobileNet-like search space, the resulting model is also mobile friendly. We are continuing to extend MPNAS to multi-task learning for tasks that are not compatible with existing search algorithms, and we hope others might use MPNAS to build a unified multi-domain model.

Acknowledgements

This work is made possible through a collaboration spanning several teams across Google. We’d like to acknowledge contributions from Junjie Ke, Joshua Greaves, Grace Chu, Ramin Mehran, Gabriel Bender, Xuhui Jia, Brendan Jou, Yukun Zhu, Luciano Sbaiz, Alec Go, Andrew Howard, Jeff Gilbert, Peyman Milanfar, and Ming-Hsuan Yang.