A new cell-phone-sized device—which can be deployed in vast, remote areas—is using AI to identify and geolocate wildlife to help conservationists track endangered species, including wolves around Yellowstone National Park. The battery-powered devices—dubbed GrizCams—are designed by a small Montana startup, Grizzly Systems. Together with biologists, they’re deploying a constellation of the…

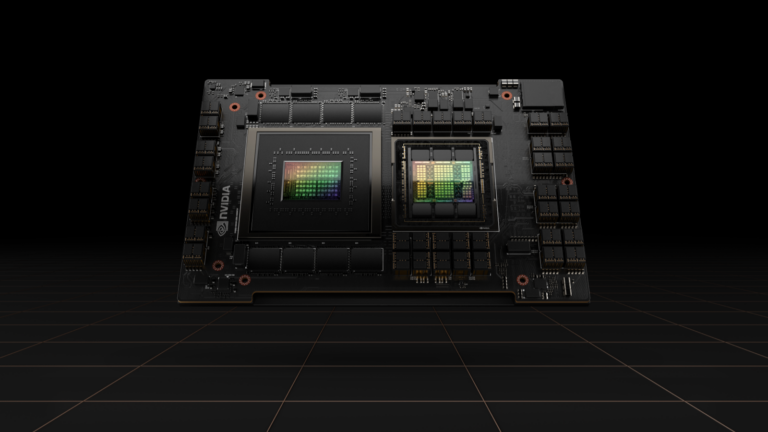

AI agents are emerging as the newest way for organizations to increase efficiency, improve productivity, and accelerate innovation. These agents are more…

Learn how to build scalable data processing pipelines to create high-quality datasets.

Deploying large language models (LLMs) in production environments often requires making hard trade-offs between enhancing user interactivity and increasing…

Fraud in financial services is a massive problem. According to NASDAQ, in 2023, banks faced $442 billion in projected losses from payments, checks, and credit…