Generative chemistry with AI has the potential to revolutionize how scientists approach drug discovery and development, health, and materials science and engineering. Instead of manually designing molecules with “chemical intuition” or screening millions of existing chemicals, researchers can train neural networks to propose novel molecular structures tailored to the desired properties.

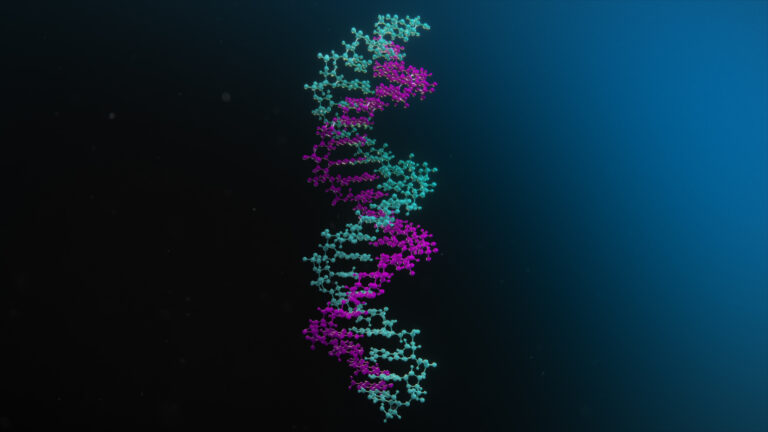