AI has seen unprecedented growth, spurring the need for new training and education resources for students and industry professionals. NVIDIA’s latest on-demand webinar, Essential Training and Tips to Accelerate Your Career in AI, featured a panel discussion with industry experts on fostering career growth and learning in AI and other advanced technologies. Over 1,800

Groundbreaking research underlines NVIDIA’s critical role in advancing quantum computing.

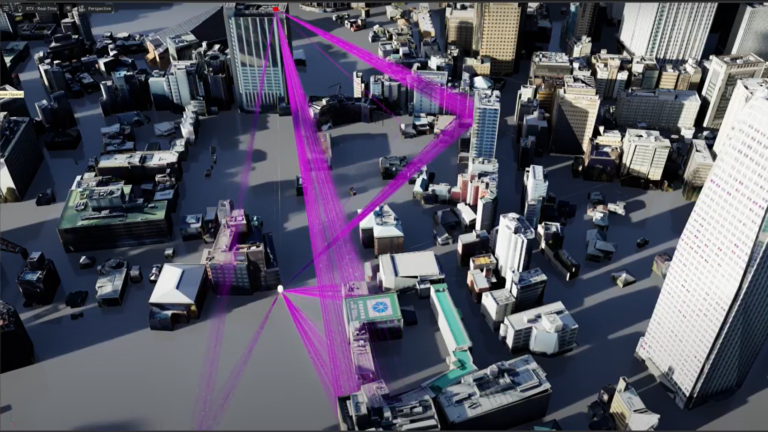

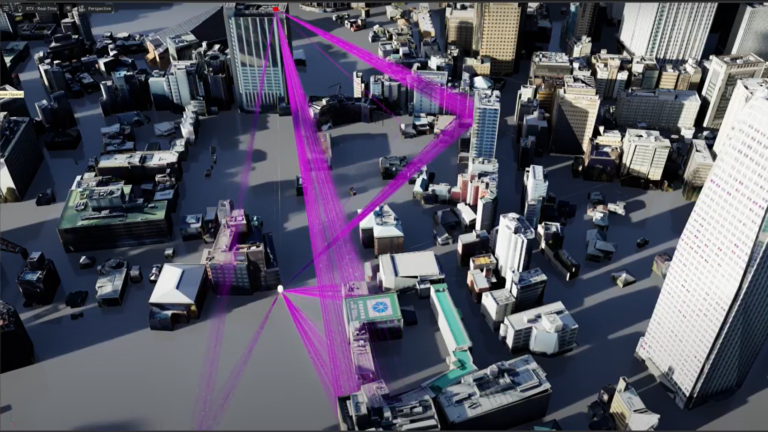

The pace of 6G research and development is picking up as the 5G era crosses the midpoint of the decade-long cellular generation time frame. In this blog post, we highlight how NVIDIA is playing an active role in the emerging 6G field, enabling innovation and fostering collaboration in the industry. NVIDIA is not only delivering AI-native 6G tools but also working with partners and…

With the rise of chatbots and virtual assistants, customer interactions have evolved to embrace the versatility of voice and text inputs. However, integrating visual and personalized components into these interactions is essential for creating immersive, user-centric experiences. Enter UneeQ, a leading platform for creating lifelike, AI-powered digital humans.

Join Isaac ROS engineers and the founder of Open Navigation to explore the new Nav2 autonomous docking feature.

In this blog post, we continue the series on accelerating vector search using cuVS RAFT. Our previous post in the series introduced IVF-Flat, a fast algorithm for accelerating approximate nearest neighbors (ANN) search on GPUs. We discussed how using an inverted file index (IVF) provides an intuitive way to reduce the complexity of the nearest neighbor search by limiting it to only a small subset…
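To make the inverted-file idea concrete, here is a minimal pure-Python sketch of IVF-Flat, assuming squared Euclidean distance and a plain Lloyd's k-means as the coarse quantizer. The helper names `build_ivf_flat` and `search_ivf_flat` and the parameters `n_lists` and `n_probes` are hypothetical illustrations, not the cuVS RAFT API: vectors are bucketed by their nearest cluster center, and a query computes exact ("flat") distances only for vectors in the few closest buckets.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, iters=10, seed=0):
    """Plain Lloyd's k-means; returns k centroids (the coarse quantizer)."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        buckets = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: sq_dist(p, centroids[i]))
            buckets[nearest].append(p)
        for i, bucket in enumerate(buckets):
            if bucket:  # keep the previous centroid if a cluster becomes empty
                centroids[i] = [sum(col) / len(bucket) for col in zip(*bucket)]
    return centroids


def build_ivf_flat(data, n_lists):
    """Hypothetical helper: store each vector's index in its nearest cluster's list."""
    centroids = kmeans(data, n_lists)
    lists = [[] for _ in range(n_lists)]
    for idx, v in enumerate(data):
        nearest = min(range(n_lists), key=lambda i: sq_dist(v, centroids[i]))
        lists[nearest].append(idx)
    return centroids, lists


def search_ivf_flat(data, centroids, lists, query, k, n_probes):
    """Hypothetical helper: scan only the n_probes lists closest to the query."""
    probe = sorted(range(len(centroids)),
                   key=lambda i: sq_dist(query, centroids[i]))[:n_probes]
    candidates = [idx for c in probe for idx in lists[c]]
    # Exact distances, but only for vectors in the probed lists.
    candidates.sort(key=lambda idx: sq_dist(query, data[idx]))
    return candidates[:k]
```

In this sketch, setting `n_probes` equal to `n_lists` scans every list and reduces to exact brute-force search; smaller values scan fewer vectors at the cost of possibly missing true neighbors that fall in unprobed clusters.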

In the first part of the series, we presented an overview of the IVF-PQ algorithm and explained how it builds on top of the IVF-Flat algorithm, using the Product Quantization (PQ) technique to compress the index and support larger datasets. In this second part, we cover the practical aspects of tuning IVF-PQ performance. It’s worth noting again that IVF-PQ uses a lossy…
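The compression step can be illustrated with a minimal pure-Python sketch of product quantization on its own, assuming squared Euclidean distance and a vector dimension divisible by the number of subspaces `m`. The names `train_pq`, `encode`, `decode`, `build_tables`, and `adc_distance` are hypothetical, not the cuVS RAFT API, and the sketch omits the IVF part (classic IVF-PQ formulations typically encode each vector's residual relative to its cluster center). Each vector is split into `m` subvectors, and each subvector is replaced by the index of its nearest centroid in a per-subspace codebook, which is exactly where the information loss comes from.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, iters=10, seed=0):
    """Plain Lloyd's k-means; returns k centroids."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        buckets = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: sq_dist(p, centroids[i]))
            buckets[nearest].append(p)
        for i, bucket in enumerate(buckets):
            if bucket:  # keep the previous centroid if a cluster becomes empty
                centroids[i] = [sum(col) / len(bucket) for col in zip(*bucket)]
    return centroids


def split(v, m):
    """Split vector v into m contiguous subvectors (assumes len(v) % m == 0)."""
    d = len(v) // m
    return [v[j * d:(j + 1) * d] for j in range(m)]


def train_pq(data, m, ksub):
    """Train one codebook of ksub centroids per subspace."""
    subs = [split(v, m) for v in data]
    return [kmeans([s[j] for s in subs], ksub, seed=j) for j in range(m)]


def encode(v, codebooks):
    """Compress v to m integer codes, one centroid index per subspace (lossy)."""
    return [min(range(len(cb)), key=lambda c: sq_dist(sv, cb[c]))
            for sv, cb in zip(split(v, len(codebooks)), codebooks)]


def decode(code, codebooks):
    """Approximate reconstruction: concatenate the selected sub-centroids."""
    return [x for c, cb in zip(code, codebooks) for x in cb[c]]


def build_tables(query, codebooks):
    """Per-query lookup tables: distance from each query subvector to every sub-centroid."""
    return [[sq_dist(sq, c) for c in cb]
            for sq, cb in zip(split(query, len(codebooks)), codebooks)]


def adc_distance(tables, code):
    """Asymmetric distance: m table lookups instead of a full-dimensional computation."""
    return sum(table[c] for table, c in zip(tables, code))
```

Because squared Euclidean distance decomposes across subspaces, the table-lookup distance here equals the exact distance between the query and the decoded (approximate) vector, not the original one; that gap is the accuracy cost the tuning discussion has to manage. With at most 256 centroids per subspace, each code fits in one byte, so in this sketch an 8-dimensional float32 vector (32 bytes) would shrink to 4 bytes with `m=4`.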

Mistral AI and NVIDIA today released a new state-of-the-art language model, Mistral NeMo 12B, that developers can easily customize and deploy for enterprise applications supporting chatbots, multilingual tasks, coding and summarization. By combining Mistral AI’s expertise in training data with NVIDIA’s optimized hardware and software ecosystem, the Mistral NeMo model offers high performance for diverse