SyncTwin GmbH, a company that builds software to optimize production, intralogistics, and assembly, is on a mission to unlock industrial digital twins for small and medium-sized businesses (SMBs). While SyncTwin has helped major global companies like BMW minimize costs and downtime in their factories with digital twins, they are now shifting their focus to enable manufacturing businesses…

Maritime startup Orca AI is pioneering safety at sea with its AI-powered navigation system, which provides real-time video processing to help crews make…

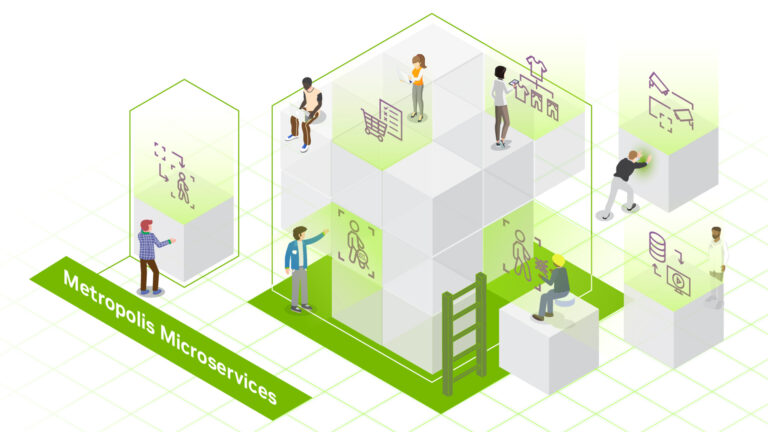

As vision AI complexity increases, streamlined deployment solutions are crucial to optimizing spaces and processes. NVIDIA accelerates development, turning…

Synthetic data in medical imaging offers numerous benefits, including the ability to augment datasets with diverse and realistic images where real data is…

Testing out networking infrastructure and building working PoCs for a new environment can be tricky at best and downright dreadful at worst. You may run into…

Machine learning (ML) employs algorithms and statistical models that enable computer systems to find patterns in massive amounts of data, and then uses a model…
