Designing rich content and graphics for VR experiences means creating complex materials and high-resolution textures. But rendering all that content at VR resolutions and frame rates can be challenging, especially when rendering at the highest quality.

You can address this challenge by using variable rate shading (VRS) to focus shader resources on certain parts of an image—specifically, the parts that have the greatest impact for the scene, such as where the user is looking or the materials that need higher sampling rates.

However, because not all developers could integrate the full NVIDIA VRS API, NVIDIA developed Variable Rate Supersampling (VRSS), which increases the image quality in the center of the screen (fixed foveated rendering). VRSS is a zero-coding solution, so you do not need to add code to implement it. All the work is done through NVIDIA drivers, which makes it easy for users to experience VRSS in games and applications.

NVIDIA is now releasing VRSS 2, which adds gaze-tracked foveated rendering. This version further improves perceived image quality by supersampling the region of the image where the user is looking. Applications that benefited from the original VRSS look even better with dynamic foveated rendering in VRSS 2.

New VR features

NVIDIA and Tobii collaborated to enhance VRSS with dynamic foveated rendering enabled by Tobii Spotlight, an eye-tracking technology specialized for foveation. This technology supplies the NVIDIA driver with the latest eye-tracking information at minimal latency, and the driver uses that information to position the supersampled region of the rendered frame. HP’s upcoming Omnicept G2 HMD will be the first HMD on the market to take advantage of this integration, as it uses both Tobii’s gaze-tracking technology and NVIDIA VRSS 2.

Accessing VRSS in the NVIDIA Control Panel

VRSS has two modes in the NVIDIA Control Panel: Adaptive and Always On.

Adaptive mode

This mode takes performance limits into consideration and tries to maximize the size of the supersampling region without hindering the VR experience. The size of the foveated region grows and shrinks in proportion to the available GPU headroom.

Figure 4. Dynamic sizing of the foveated region in Adaptive mode. The supersampled region, shown with a green mask, shrinks and grows with the GPU load (scene complexity).
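The driver's actual sizing heuristic is not published, but the idea of a region that tracks GPU headroom can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `AdaptiveRegionSizer` name, the linear mapping from headroom to radius, the radius limits, and the smoothing factor.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveRegionSizer:
    """Hypothetical controller that scales a supersampled region with GPU headroom."""

    refresh_hz: float          # HMD refresh rate, which sets the frame budget
    min_radius: float = 0.0    # radius as a fraction of the render-target width
    max_radius: float = 0.5    # assumed upper limit, not an NVIDIA value
    smoothing: float = 0.2     # assumed low-pass factor to avoid abrupt resizing
    radius: float = 0.0        # current radius, updated every frame

    def update(self, gpu_frame_ms: float) -> float:
        """Feed in the measured GPU time of the last frame; get the new radius."""
        budget_ms = 1000.0 / self.refresh_hz
        # Headroom is the unused share of the frame budget, clamped to [0, 1].
        headroom = max(0.0, min(1.0, 1.0 - gpu_frame_ms / budget_ms))
        # Region size is proportional to headroom between the two limits.
        target = self.min_radius + headroom * (self.max_radius - self.min_radius)
        # Move part of the way toward the target so the region changes gradually.
        self.radius += self.smoothing * (target - self.radius)
        return self.radius
```

In this sketch, a frame that uses the whole budget (or more) drives the target radius to the minimum, which mirrors the priority that Adaptive mode gives to frame rate over supersampling.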

Always On mode

A fixed-size foveated region is always supersampled, providing the maximum image quality (IQ) improvement. The region is large enough to cover the central portion of the user’s field of view. This mode lets users perceive the maximum IQ gains possible for a given title using VRSS. However, it may also cause frame drops in performance-intensive applications.

Under the hood

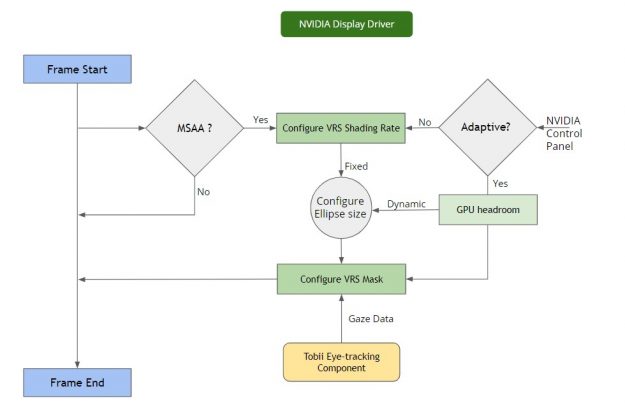

VRSS does not require any developer integration. The entire feature is implemented and handled inside the NVIDIA display driver, provided that the application is compatible and profiled by NVIDIA.

The NVIDIA display driver handles several pieces of functionality internally:

- Frame resources tracking—The driver keeps track of the resources encountered per frame and builds a heuristic model to flag a scenario where VRS could be invoked. Specifically, it notes the MSAA level to configure the VRS shading rate: the supersampling factor to be used at the center of the image. It provides render target parameters for configuring the VRS mask.

- Frame render monitoring—This involves measuring the rendering load across frames, the current frame rate of the application, the target frame rate based on the HMD refresh rate, and so on. This also computes the rendering stats required for the Adaptive mode.

- VRS enablement—As mentioned earlier, VRS gives you the ability to configure shading rate across the render surface. This is done with a shading rate mask and shading rate lookup table. For more information, see Turing Variable Rate Shading in VRWorks. The VRS infrastructure setup handles the configuration of the VRS mask and VRS shading rate table lookup. The VRS framework is configured based on the performance stats:

  - VRS mask—The size of the central region mask is configured based on the available headroom.

  - VRS shading rates—Configured based on the MSAA buffer sample count.

- Gaze data per frame—For VRSS 2, a component supplies the latest gaze data per frame. This involves a direct data transfer between the driver and the eye-tracking vendor platform. This data is used to configure the VRS foveation mask.
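To make the mask and lookup table concrete, here is a small CPU-side sketch in Python. It is not the driver's implementation or the VRWorks API: the function names, the circular region shape, the tile grid, and the choice of one shade per pixel in the periphery are all assumptions for illustration. The mask stores a small index per screen tile, and the lookup table translates each index into a shading rate.

```python
# Mask values are indices into the lookup table, not shading rates themselves.
PERIPHERY, FOVEA = 0, 1


def build_foveation_mask(tiles_x, tiles_y, gaze_uv=(0.5, 0.5), radius=0.25):
    """Return a tiles_y x tiles_x grid marking the tiles inside the foveated region.

    gaze_uv is the normalized gaze point (0..1 on each axis). The default is
    the screen centre, as in fixed foveation. radius is a fraction of the
    render-target width. Both parameters are hypothetical.
    """
    mask = []
    for ty in range(tiles_y):
        row = []
        for tx in range(tiles_x):
            # Tile centre in normalized coordinates.
            u = (tx + 0.5) / tiles_x
            v = (ty + 0.5) / tiles_y
            du = u - gaze_uv[0]
            # Scale the vertical distance so the region is round on screen
            # (assumes square tiles).
            dv = (v - gaze_uv[1]) * tiles_y / tiles_x
            inside = du * du + dv * dv <= radius * radius
            row.append(FOVEA if inside else PERIPHERY)
        mask.append(row)
    return mask


def build_rate_table(supersample_factor):
    """Map mask indices to shades per pixel (assumed: 1x outside the region)."""
    return {PERIPHERY: 1, FOVEA: supersample_factor}


# Usage: a gaze point left of centre on a 16x9 tile grid with 4x supersampling.
mask = build_foveation_mask(16, 9, gaze_uv=(0.3, 0.5), radius=0.2)
rates = build_rate_table(4)
shades_per_pixel = [[rates[index] for index in row] for row in mask]
```

With per-frame gaze data, only `gaze_uv` changes from frame to frame, while the lookup table stays fixed for a given MSAA configuration.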

Developer guidance

For an application to take advantage of VRSS, you must submit your game or application to NVIDIA for VRSS validation. If approved, the game or application is added to an approved list within the NVIDIA driver.

Here are the benefits for a VRSS-supported game or application:

- Dynamic foveated region: Integration with Tobii eye-tracking software lets the supersampled region follow the user's gaze.

- Zero coding: No developer integration is required in the game or application.

- Improved user experience: The user experiences VR content with added clarity.

- Ease of use: User controlled on/off supersampling in the NVIDIA Control Panel.

- Performance modes: Adaptive (fps priority) or Always On.

- No maintenance: Technology encapsulated at the driver level.

| | | |
| --- | --- | --- |
| Pavlov VR | L.A. Noire VR | Mercenary 2: Silicon Rising |
| Robo Recall | Eternity Warriors VR | Mercenary 2: Silicon Rising |
| Serious Sam VR: The Last Hope | Hot Dogs Horseshoes & Hand Grenades | Special Force VR: Infinity War |
| Talos Principle VR | Boneworks | Doctor Who: The Edge of Time VR |
| Battlewake | Lone Echo | VRChat |
| Job Simulator | Rec Room | PokerStars VR |
| Spider-Man: Homecoming VR | Rick & Morty Simulator: Virtual Rick-ality | Budget Cuts 2: Mission Insolvency |
| In Death | Skeet: VR Target Shooting | The Walking Dead: Saints & Sinners |
| Killing Floor: Incursion | Spider-Man: Far From Home | Onward VR |
| Space Pirate Trainer | Sairento VR | Medal of Honor: Above and Beyond |
| The Soulkeeper VR | Raw Data | Sniper Elite VR |

Game and application compatibility

To make use of VRSS, the system and the application must meet the following requirements:

- NVIDIA Turing architecture GPU

- DirectX 11 VR applications

- Forward rendered with MSAA

- Eye-tracking software integrated with VRSS

Supersampling needs an MSAA buffer. The level of supersampling is based on the number of samples in that buffer: the foveated region is supersampled 2x for 2x MSAA, 4x for 4x MSAA, and so on. The maximum shading rate applied for supersampling is 8x. The higher the MSAA level, the greater the supersampling effect.
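The mapping from MSAA level to supersampling factor described above can be written as a short function. The function names are hypothetical, and the cost estimate is only back-of-the-envelope arithmetic that assumes pixels outside the foveated region are shaded once. It is not a measurement of real performance.

```python
MAX_SUPERSAMPLE = 8  # maximum shading rate applied for supersampling


def supersample_factor(msaa_samples: int) -> int:
    """Shading rate for the foveated region given the MSAA sample count."""
    if msaa_samples < 2:
        return 1  # no MSAA buffer, so nothing to supersample
    return min(msaa_samples, MAX_SUPERSAMPLE)


def relative_shading_cost(region_fraction: float, factor: int) -> float:
    """Rough pixel-shading work relative to a frame without supersampling.

    region_fraction is the share of pixels inside the foveated region (0..1).
    Assumes one shade per pixel outside the region and `factor` shades inside.
    """
    return 1.0 + region_fraction * (factor - 1)


# Usage: 4x MSAA with a region covering a quarter of the pixels.
factor = supersample_factor(4)
cost = relative_shading_cost(0.25, factor)
```

This kind of estimate shows why the size of the region matters: at a fixed MSAA level, the extra shading work grows linearly with the share of the frame that is supersampled.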

Content suitability

Content that benefits from supersampling benefits from VRSS as well. Supersampling not only mitigates aliasing but also brings out details in an image. The degree of quality improvement varies across content.

Supersampling shines when it encounters the following types of content:

- High-resolution textures

- High frequency materials

- Textures with alpha channels – fences, foliage, menu icons, text, and so on

Conversely, supersampling does not improve IQ for:

- Flat shaded geometry

- Textures and materials with low level of detail

Come onboard!

VRSS uses VRS technology for selective supersampling and does not require application integration. VRSS is available in NVIDIA Driver R465. Applications must use DirectX 11, forward rendering, and MSAA.

Submit your game or application to NVIDIA for VRSS consideration.

If you want finer-grained control of how VRS is applied, we recommend using the explicit programming APIs of VRWorks – Variable Rate Shading (VRS). Accessing the full power of VRS, you can implement a variety of shader sampling rate optimizations, including lens-matched shading, content-adaptive shading, and gaze-tracked shading.