As computing power moves to the edge, NVIDIA announces an expansion of the EGX platform and ecosystem, including server-vendor certifications, hybrid cloud partners, and new GPU Operator software.

Demand for edge computing is growing rapidly because people increasingly need to analyze and use data where it’s created instead of trying to send it back to a data center. New applications cannot wait for the data to travel all the way to a centralized server, wait for it to be analyzed, then wait for the results to make the return trip. They need the data analyzed RIGHT HERE, RIGHT NOW!

To meet this need, NVIDIA just announced an expansion of the NVIDIA EGX platform and ecosystem, which includes server vendor certifications, hybrid cloud partners, and new GPU Operator software that enables a cloud-native deployment for GPU servers. As computing power moves to the edge, we find that smarter edge computing needs smarter networking, and so the EGX ecosystem includes NVIDIA networking solutions.

IoT drives the need for edge computing

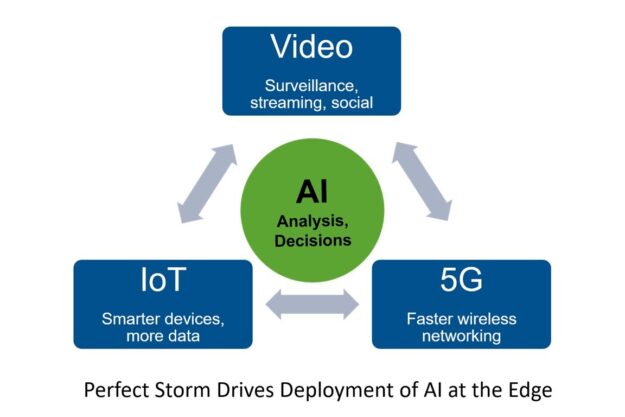

The growth of the Internet of Things (IoT), 5G wireless, and AI is driving compute to the edge. IoT means that more devices, and smarter ones, are generating and consuming data in the field, far from traditional data centers. Autonomous vehicles, digital video cameras, kiosks, medical equipment, building sensors, smart cash registers, location tags, and of course phones will soon generate data from billions of endpoints. This data must be collected, filtered, and analyzed. Often, the distilled results are then transferred to another data center or endpoint somewhere else. Sending all the data back to the data center without any edge processing not only adds latency; it is often more data than WAN connections can carry. Data centers often don’t even have enough room to store all the unfiltered, uncompressed data coming from the edge.
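As a rough illustration of the collect, filter, and analyze step, here is a minimal Python sketch of an edge node that reduces raw readings to a small summary before anything crosses the WAN. The function name `summarize_readings`, the sensor IDs, and the alert threshold are all hypothetical, chosen only for this example. A real edge pipeline would be considerably more involved.

```python
from statistics import mean


def summarize_readings(readings, threshold):
    """Reduce raw (sensor_id, value) readings to a compact summary.

    `threshold` is a hypothetical alert level: readings above it are
    kept individually, everything else is only counted and averaged.
    """
    values = [value for _, value in readings]
    alerts = [(sensor_id, value) for sensor_id, value in readings
              if value > threshold]
    return {
        "count": len(values),
        "mean": mean(values),
        "max": max(values),
        "alerts": alerts,
    }


# Four raw readings collected at the edge (made-up values).
raw = [("sensor-1", 20.5), ("sensor-2", 21.0),
       ("sensor-3", 95.2), ("sensor-1", 20.7)]

# Only this summary, not the raw stream, would be sent upstream.
summary = summarize_readings(raw, threshold=90.0)
print(summary)
```

The point of the sketch is the shape of the data flow: many readings go in, and a single small record plus the few outliers comes out.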

5G brings higher bandwidth and lower latency to the edge, enabling faster data acquisition and new applications for IoT devices. Data that previously wasn’t collected, or that couldn’t be shared, is now available over the air. The faster wireless connectivity enables new applications that use and respond to data at the edge in real time, instead of waiting for it to be stored centrally and analyzed later, if it’s analyzed at all.

AI means more useful information can be derived from all the new data, driving quick decisions. The flood of IoT data is too voluminous to be analyzed by humans. It requires AI technology to separate the wheat from the chaff (the signal from the noise). The decisions and insights from AI then feed applications both at the edge and back in the central data center.

NVIDIA EGX delivers AI at the edge

Many edge AI workloads—such as image recognition, video processing, and robotics—require massive parallel processing power, an area where NVIDIA GPUs are unmatched. To meet the need for more advanced AI processing at the edge, NVIDIA introduced the EGX platform. The EGX platform supports a hyper-scalable range of GPU servers, from a single NVIDIA Jetson Nano system up to a full rack of NVIDIA T4 or V100 Tensor Core servers. The Jetson Nano delivers up to half a trillion operations per second (1/2 TOPS), while a full rack of T4 servers can handle ten thousand trillion operations per second (10,000 TOPS).
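To put that range in perspective, the short Python calculation below compares the two throughput figures quoted above. It uses only those two numbers; the variable names are illustrative.

```python
# Throughput figures as quoted in the text, in trillions of operations
# per second (TOPS).
JETSON_NANO_TOPS = 0.5
T4_RACK_TOPS = 10_000

# How many times more throughput the full rack offers than one Jetson Nano.
ratio = T4_RACK_TOPS / JETSON_NANO_TOPS
print(f"A full T4 rack offers about {ratio:,.0f}x the throughput of one Jetson Nano")
```

By these figures, the top of the EGX range delivers roughly 20,000 times the throughput of the bottom.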

NVIDIA EGX also includes container-based tools, drivers, and NVIDIA CUDA-X libraries to support AI applications at the edge. EGX is supported by major server vendors and includes integration with Red Hat OpenShift to provide enterprise-class container orchestration based on Kubernetes. This is all critical because so many edge computing locations (retail stores, hospitals, self-driving cars, homes, factories, cell phones, and so on) are supported by enterprises, local governments, and telcos, not by hyperscalers.

Recently, NVIDIA announced new EGX features and customer implementations, along with strong support for hybrid cloud solutions. The NGC-Ready server certification program has been expanded to include tests for edge security and remote management, and the new NVIDIA GPU Operator simplifies management and operation of AI across widely distributed edge devices.

Smarter edge needs smarter networking

But there is another class of technology and partners needed to make EGX, and AI at the edge, as smart and efficient as it can be: networking. As the amount of GPU processing power at the edge and the number of containers increase, the amount of network traffic can grow sharply as well.

Before AI, the analyzable edge data traffic (not counting streamed graphics, videos, and music going out to phones) probably flowed 95% inbound. For example, data might flow from cameras to digital video recorders, from cars to servers, or from retail stores to a central data center. Any analysis or insight would often be human-driven, and a person can concentrate on only a single video stream at a time. The data might instead be stored for later review, removing the ability to make instant decisions.

Now, with AI solutions like EGX deployed at the edge, applications must talk with IoT devices, back to servers in the data center, and with each other. AI applications trade data and results with standard CPUs, data from the edge is synthesized with data from the corporate data center or public cloud, and the results get pushed back to the kiosks, cars, appliances, MRI scanners, and phones.

The result is a massive amount of N-way data traffic between containers, IoT devices, GPU servers, the cloud, and traditional centralized servers. Software-defined networking (SDN) and network virtualization play a larger role. This expanded networking brings new security concerns, as the potential attack surface for hackers and malware is much larger than before and cannot be contained inside a firewall.

As networking becomes more complex, the network must become smarter in many ways. Some examples of this are:

- Packets must be routed efficiently between containers, VMs, and bare metal servers.

- Network function virtualization (NFV) and SDN demand accelerated packet switching, which could be in user space or kernel space.

- The use of RDMA requires hardware offloads on the NICs and intelligent traffic management on the switches.

- Security requires that data be encrypted at rest or in flight, or both. Whatever is encrypted must also be decrypted at some point.

- The growth in IoT data, combined with the switch from spinning disk to flash, calls for compression and deduplication of data to control storage costs.

This increased network complexity and these security concerns impose a growing burden on the edge servers as well as on the corporate and cloud servers that interface with them. With more AI power and faster network speeds, handling the network virtualization, SDN rules, and security filtering consumes an expensive share of CPU cycles, unless you have the right kind of smart network. As the network connections get faster, that network’s smarts must be accelerated in hardware instead of running in software.

SmartNICs save edge compute cycles

Smarter edge computing requires smarter networking. If this networking is handled by the CPUs or GPUs, then valuable cycles are consumed by moving the data instead of analyzing and transforming it. Someone must encode and decode overlay network headers, determine which packet goes to which container, and ensure that SDN rules are followed. Software-defined firewalls and routers impose additional CPU burdens as packets must be filtered based on source, destination, headers, or even on the internal content of the packets. Then, the packets are forwarded, mirrored, rerouted, or even dropped, depending on the network rules.
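To make the per-packet cost concrete, here is a minimal Python sketch of the kind of rule matching a software-defined firewall performs for every packet. It is an illustration only, not how any NVIDIA product or particular SDN stack implements filtering. The `Packet` and `Rule` classes, the `decide` function, the addresses, and the default-drop policy are all assumptions made for this example.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str
    dst_port: int


@dataclass(frozen=True)
class Rule:
    action: str                      # "forward", "mirror", or "drop"
    src_net: str = "0.0.0.0/0"       # default matches any source
    dst_net: str = "0.0.0.0/0"       # default matches any destination
    dst_port: Optional[int] = None   # None matches any port

    def matches(self, pkt: Packet) -> bool:
        return (
            ip_address(pkt.src) in ip_network(self.src_net)
            and ip_address(pkt.dst) in ip_network(self.dst_net)
            and (self.dst_port is None or self.dst_port == pkt.dst_port)
        )


def decide(pkt, rules, default="drop"):
    """Return the action of the first matching rule, else the default."""
    for rule in rules:
        if rule.matches(pkt):
            return rule.action
    return default


# Hypothetical rule table, evaluated top to bottom.
rules = [
    Rule("drop", src_net="203.0.113.0/24"),               # blocked source range
    Rule("mirror", dst_net="10.0.1.0/24", dst_port=554),  # copy camera traffic
    Rule("forward", dst_net="10.0.0.0/16"),               # normal internal traffic
]

print(decide(Packet("10.0.2.9", "10.0.1.5", 554), rules))
```

Even this toy version parses addresses and walks a rule list for every packet. At high packet rates that work adds up, which is why moving it off the host CPU is attractive.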

Fortunately, there is a class of affordable SmartNICs, such as the NVIDIA ConnectX family, which offload all this work from the CPU. These adapters have hardware-accelerated functions to handle overlay networks, Remote Direct Memory Access, container networking, virtual switching, storage networking, and video streaming. They also accelerate the adapter side of network congestion management and QoS.

The newest adapters, such as the ConnectX-6 Dx, can perform in-line encryption and decryption in hardware at high speeds, supporting IPsec and TLS. With these important but repetitive network tasks safely accelerated by the NIC, the CPUs and GPUs at the edge connect quickly and efficiently with each other and the IoT, all the while focusing their core cycles on what they do best—running applications and parallelized processing of complex data.

BlueField DPU adds extra protection against malware and overwork

An even more advanced network option for edge compute efficiency is a DPU or data processing unit, such as the NVIDIA BlueField DPU. A DPU combines all the high-speed networking and offloads of a SmartNIC with programmable cores that can handle additional functions around networking, storage, or security.

- It can offload both SDN data plane and control plane functions.

- It can virtualize flash storage for CPUs or GPUs.

- It can implement security in a separate domain to provide very high levels of protection against malware.

On the security side, DPUs such as BlueField provide security domain isolation. Without isolation, any security software is running in the same domain as the OS, container management, and application. If an attacker compromises any of those, the security software is at risk of being bypassed, removed, or corrupted. With BlueField, the security software continues running on the DPU where it can continue to detect, isolate, and report malware or breaches on the server. By running in a separate domain—protected by a hardware root of trust—the security features can sound the alarm to intrusion detection and prevention mechanisms and also prevent malware on the infected server from spreading.

The newest BlueField-2 DPU also adds regular expression (RegEx) matching that can quickly detect patterns in network traffic or server memory, so it can be used for threat identification. It also adds hardware offloads for data efficiency using deduplication through a SHA-2 hash and compression/decompression.
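As a software illustration of hash-based deduplication combined with compression, the Python sketch below splits data into fixed-size chunks, identifies each chunk by its SHA-256 digest (a member of the SHA-2 family), and stores each unique chunk once in compressed form. This is a sketch of the general technique only, not the BlueField-2 implementation. The function names, the 4 KiB chunk size, and the use of `zlib` are assumptions made for this example.

```python
import hashlib
import zlib


def store_chunks(data: bytes, chunk_size: int = 4096):
    """Split data into chunks, keeping one compressed copy per unique chunk."""
    store = {}    # SHA-256 hex digest -> compressed chunk
    recipe = []   # ordered digests needed to rebuild the original data
    for offset in range(0, len(data), chunk_size):
        chunk = data[offset:offset + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        if digest not in store:          # only new content is stored
            store[digest] = zlib.compress(chunk)
        recipe.append(digest)
    return store, recipe


def restore(store, recipe) -> bytes:
    """Rebuild the original data from the chunk store and the recipe."""
    return b"".join(zlib.decompress(store[digest]) for digest in recipe)


# Four 4 KiB chunks, three of which are identical (made-up data).
data = b"A" * 8192 + b"B" * 4096 + b"A" * 4096
store, recipe = store_chunks(data)

stored_bytes = sum(len(blob) for blob in store.values())
print(f"{len(recipe)} chunks referenced, {len(store)} stored, "
      f"{len(data)} bytes reduced to {stored_bytes}")
assert restore(store, recipe) == data
```

Hashing and compressing every chunk is CPU-intensive when done in software, which is the motivation for offloading it to dedicated hardware.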

Smarter networking at the edge

With the increasing use of AI solutions at the edge, like the NVIDIA EGX platform, the edge becomes much smarter. However, networking and security also get more complex and threaten to slow down servers, just when the growth of the IoT and 5G wireless requires more compute power. This can be solved with the deployment of SmartNICs and DPUs, such as the ConnectX and BlueField product families. These network solutions offload important network and security tasks, such as SDN, network virtualization, and software-defined firewall functions. This allows AI at the edge to run more efficiently and securely.

For more information, see the NVIDIA EGX Platform for Edge Computing webinar.