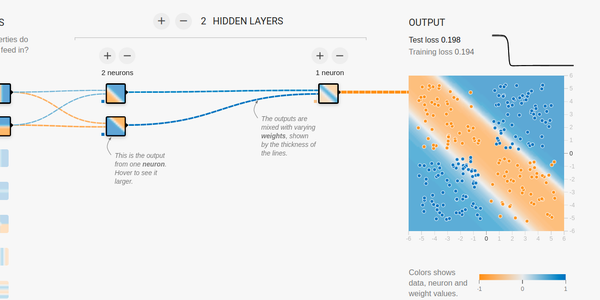

Hi guys, I’m trying to reproduce this MLP in TensorFlow (constrained to a single hidden layer with 2 units). However, just like in TensorFlow Playground, training often fails to converge to the global minimum. I think this is due to weight initialization; I tried Xavier and He initializations, but with no success. The following piece of code is the model in tf.keras.

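    import tensorflow as tf
    from tensorflow.keras.layers import Dense

    # 2 inputs -> 2 sigmoid hidden units -> 1 sigmoid output
    model = tf.keras.models.Sequential([
        Dense(units=2, activation='sigmoid', input_shape=(2,)),
        Dense(units=1, activation='sigmoid'),
    ])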
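For reference, here is a rough sketch of what the two initializations I tried amount to for these layer sizes. It assumes the standard Glorot (Xavier) uniform and He normal formulas; the helper names are made up for illustration and are not Keras functions.

```python
import math
import random

def glorot_uniform_limit(fan_in, fan_out):
    # Glorot/Xavier uniform draws weights from U(-limit, limit)
    return math.sqrt(6.0 / (fan_in + fan_out))

def he_normal_std(fan_in):
    # He normal draws weights from a (truncated) normal with this std
    return math.sqrt(2.0 / fan_in)

def sample_glorot_uniform(fan_in, fan_out, rng):
    # Build a fan_in x fan_out weight matrix as nested lists
    limit = glorot_uniform_limit(fan_in, fan_out)
    return [[rng.uniform(-limit, limit) for _ in range(fan_out)]
            for _ in range(fan_in)]

rng = random.Random(0)  # fixed seed so the draw is repeatable

hidden_limit = glorot_uniform_limit(2, 2)  # hidden layer: 2 inputs -> 2 units
output_limit = glorot_uniform_limit(2, 1)  # output layer: 2 units -> 1 output
hidden_weights = sample_glorot_uniform(2, 2, rng)

print("Glorot limit (hidden):", hidden_limit)
print("Glorot limit (output):", output_limit)
print("He std (fan_in=2):", he_normal_std(2))
print("Sampled hidden weights:", hidden_weights)
```

With only a handful of weights in the whole network, a single draw can land in a poor starting region under either scheme, which might be part of why switching initializers alone did not help.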

Any help would be appreciated. Thanks.

submitted by /u/Idea_Cultural
