I have used this official tutorial to train my custom traffic sign detector with the dataset from the German Traffic Sign Detection Benchmark site.

I created my PASCAL VOC format .xml files using the pascal-voc-writer Python library and converted them to TFRecords, with the images resized to 320×320. Because the bounding box coordinates were given for the original 1360×800 images, I also rescaled them. I used the ratios Rx = NEW_WIDTH / WIDTH and Ry = NEW_HEIGHT / HEIGHT, where NEW_WIDTH = NEW_HEIGHT = 320, and rescaled each coordinate like so: xMin = round(Rx * int(xMin)).
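To make the rescaling step concrete, here is a minimal sketch of the formula above. The function name `rescale_box` and its parameter names are just for illustration and are not taken from my actual conversion script.

```python
def rescale_box(x_min, y_min, x_max, y_max,
                width=1360, height=800,
                new_width=320, new_height=320):
    """Rescale a pixel-coordinate box from the original image size to the resized one."""
    rx = new_width / width     # horizontal ratio, Rx
    ry = new_height / height   # vertical ratio, Ry
    return (
        round(rx * int(x_min)),
        round(ry * int(y_min)),
        round(rx * int(x_max)),
        round(ry * int(y_max)),
    )


# A box covering the bottom-right quarter of a 1360x800 image
print(rescale_box(680, 400, 1360, 800))
```

Note that if the coordinates are later normalized by the image size, the result should be the same before and after resizing, apart from rounding, because (Rx * x) / (Rx * WIDTH) = x / WIDTH.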

The pre-trained model I have used is ssd_mobilenet_v2_fpnlite_320x320_coco17_tpu-8. You can also see the images used for training here and their corresponding .xml files here.

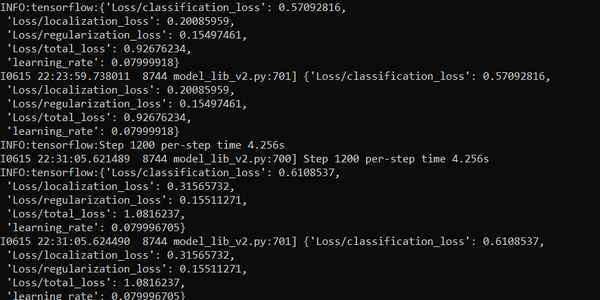

The problem is that after training and converting from saved_model to .tflite using this script, the model does not recognize traffic signs, and the outputs are a bit different from what I expect: instead of a list of lists of normalized coordinates, I get a list of lists of lists of normalized coordinates. The last steps in the training process look like this. After using this script, the output image with predicted bounding boxes looks like this and the printed output is this.
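One possible reading of the extra nesting, which is an assumption on my part and not a confirmed diagnosis: detection models typically return tensors with a leading batch dimension, so the boxes would have shape (1, N, 4) rather than (N, 4). The sketch below uses plain Python lists and a hypothetical helper `decode_detections`. It strips the batch dimension, drops low-scoring detections, and converts the boxes to pixels, assuming the [ymin, xmin, ymax, xmax] order used by the TensorFlow Object Detection API.

```python
def decode_detections(boxes, scores, image_width, image_height, min_score=0.5):
    """Turn batched, normalized detections into pixel boxes (x_min, y_min, x_max, y_max, score)."""
    # Assumption: the outermost list is a batch of size 1, so take its only element.
    boxes, scores = boxes[0], scores[0]
    results = []
    for (y_min, x_min, y_max, x_max), score in zip(boxes, scores):
        if score < min_score:
            continue  # skip low-confidence detections
        results.append((
            round(x_min * image_width),
            round(y_min * image_height),
            round(x_max * image_width),
            round(y_max * image_height),
            score,
        ))
    return results


# Made-up values that only illustrate the nesting; not real model output
example_boxes = [[[0.25, 0.5, 0.75, 1.0], [0.0, 0.0, 0.1, 0.1]]]
example_scores = [[0.9, 0.2]]
print(decode_detections(example_boxes, example_scores, 320, 320))
```

If all scores come out below the threshold, the nesting is probably not the real issue and the problem lies somewhere in training or conversion.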

What could be the problem? Thank you!

submitted by /u/morphinnas
