NVIDIA announced new pretrained models and general availability of Transfer Learning Toolkit (TLT) 3.0, a core component of NVIDIA’s Train, Adapt, and Optimize (TAO) platform guided workflow for creating AI.

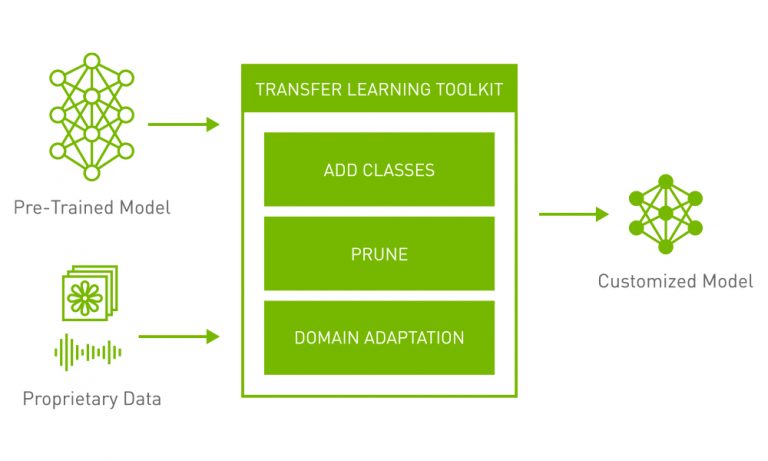

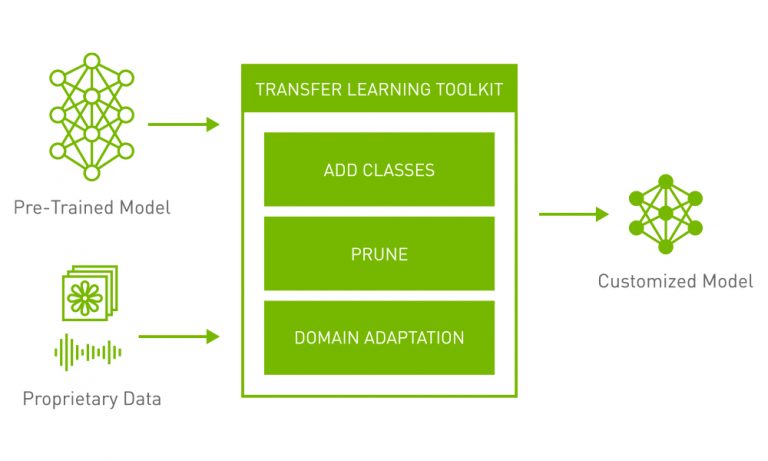

Today, NVIDIA announced new pretrained models and general availability of Transfer Learning Toolkit (TLT) 3.0, a core component of NVIDIA’s Train, Adapt, and Optimize (TAO) platform guided workflow for creating AI. The new release includes a variety of highly accurate and performant pretrained models in computer vision and conversational AI, as well as a set of powerful productivity features that boost AI development by up to 10x.

As enterprises race to bring AI-enabled solutions to market, your competitiveness relies on access to the best development tools. The development journey to deploy custom, high-accuracy, and performant AI models in production can be treacherous for many engineering and research teams attempting to train with open-source models for AI product creation. NVIDIA offers high-quality pretrained models and TLT to help reduce the cost of large-scale data collection and labeling and to eliminate the burden of training AI/ML models from scratch. New entrants to the computer vision and speech-enabled service market can now deploy production-class AI without a massive AI development team.

Highlights of the new release include:

- A pose-estimation model that supports real-time inference at the edge, with 9x faster inference performance than the OpenPose model.

- PeopleSemSegNet, a semantic segmentation network for people detection.

- A variety of computer vision pretrained models in various industry use cases, such as license plate detection and recognition, heart rate monitoring, emotion recognition, facial landmarks, and more.

- CitriNet, a new speech-recognition model that is trained on various proprietary domain-specific and open-source datasets.

- A new Megatron Uncased model for Question Answering, plus many other pretrained models that support speech-to-text, named-entity recognition, punctuation, and text classification.

- Training support on AWS, GCP, and Azure.

- Out-of-the-box deployment on NVIDIA Triton and DeepStream SDK for vision AI, and NVIDIA Jarvis for conversational AI.

Get Started Fast

- Download Transfer Learning Toolkit and access developer resources: Get started.

- Download models from NGC: Computer vision | Conversational AI

- Check out the latest developer tutorial: Training and Optimizing a 2D Pose-Estimation Model with the NVIDIA Transfer Learning Toolkit. Part 1 | Part 2

Integration with Data-Generation and Labeling Tools for Faster and More Accurate AI

TLT 3.0 is also now integrated with platforms from several leading partners who provide large, diverse, and high-quality labeled data—enabling faster end-to-end AI/ML workflows. You can now use these partners’ services to generate and annotate data, seamlessly integrate with TLT for model training and optimization, and deploy the model using DeepStream SDK or Jarvis to create reliable applications in computer vision and conversational AI.

Check out more partner blog posts and tutorials about synthetic data and data annotation with TLT:

- AI Reverie: Preparing Models for Object Detection With Real and Synthetic Data and the NVIDIA TLT

- SKY ENGINE: Accelerate model development and AI training with synthetic data using SKY ENGINE AI platform and NVIDIA Transfer Learning Toolkit

- Hasty AI: How to get AI production-ready with Hasty.ai and NVIDIA TLT

- CVEDIA: Startup’s AI Intersects with U.S. Traffic Lights for Better Flow, Safety

- Explainer blog post: What Is Synthetic Data

Learn more about NVIDIA pretrained models and Transfer Learning Toolkit.