NVIDIA announces new initiatives to deliver a suite of perception technologies to the ROS developer community.

All things that move will become autonomous. And all things autonomous will require advanced real-time perception.

NVIDIA announced its latest initiatives to deliver a suite of perception technologies to the ROS developer community. These initiatives will reduce development time and improve performance for developers seeking to incorporate cutting-edge computer vision and AI/ML functionality into their ROS-based robotics applications.

Open Robotics to Extend ROS for NVIDIA AI

NVIDIA and Open Robotics have entered into an agreement to accelerate ROS 2 performance on NVIDIA’s Jetson edge AI platform and GPU-based systems and to enable seamless simulation interoperability between Open Robotics’s Ignition Gazebo and NVIDIA Isaac Sim on Omniverse.

The Jetson platform is widely adopted by roboticists across a spectrum of applications. It is designed to enable high-performance, low-latency processing so that robots can be responsive, safe, and collaborative. Open Robotics will enhance ROS 2 to enable efficient management of data flow and shared memory across the GPU and other processors present on Jetson. This will significantly improve the performance of applications that have to process high-bandwidth data from sensors such as cameras and lidars in real time.

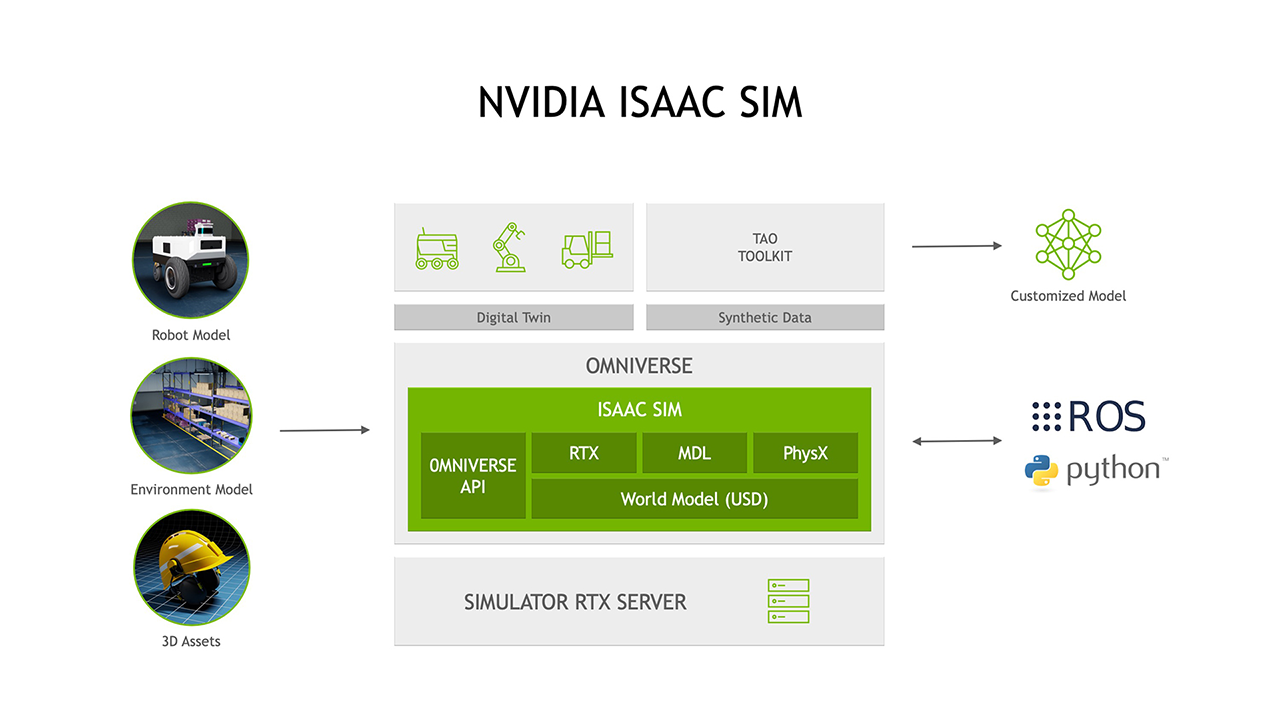

In addition to the enhancements for deploying robot applications on Jetson, Open Robotics and NVIDIA are working on plans to integrate Ignition Gazebo and NVIDIA Isaac Sim. NVIDIA Isaac Sim already supports ROS 1 and ROS 2 out of the box and features an all-important ecosystem of 3D content through its connection to popular applications such as Blender and Unreal Engine 4.

Ignition Gazebo brings a decades-long track record of widespread use throughout the robotics community, including in high-profile competition events such as the ongoing DARPA Subterranean Challenge. With the two simulators connected, ROS developers can easily move their robots and environments between Ignition and Isaac Sim to run large-scale simulations and take advantage of each simulator’s advanced features, such as high-fidelity dynamics, accurate sensor models, and photorealistic rendering, to generate synthetic data for training and testing AI models.

“As more ROS developers leverage hardware platforms that contain additional compute capabilities designed to offload the host CPU, ROS is evolving to make it easier to efficiently take advantage of these advanced hardware resources,” said Brian Gerkey, CEO of Open Robotics. “Working with an accelerated computing leader like NVIDIA and its vast experience in AI and robotics innovation will bring significant benefits to the entire ROS community.”

Software resulting from this collaboration is expected to be released in the spring of 2022.

Isaac GEMs Released for ROS with Significant Speedup

Isaac GEMs for ROS are hardware-accelerated packages that make it easier for ROS developers to build high-performance solutions on the Jetson platform. These GEMs focus on improving throughput for image processing and for DNN-based perception models, which are of growing importance to roboticists. The packages reduce the load on the host CPU while providing significant performance gains.

The new Isaac GEMs for ROS include:

- SGM Stereo Disparity and Point Cloud

- Color Space Conversion and Lens Distortion Correction

- AprilTags Detection
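To make the first item in the list above more concrete, here is a minimal sketch of how a disparity map from a rectified stereo pair is typically turned into depth and a point cloud. It uses the standard pinhole relation Z = f * B / d and is plain CPU Python for illustration only; it is not the Isaac GEM API, and every name and parameter in it (`disparity_to_depth`, `disparity_to_points`, `focal_px`, `baseline_m`, `cx`, `cy`) is an assumption introduced for this example.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]


def disparity_to_depth(disparity_px: float, focal_px: float,
                       baseline_m: float) -> Optional[float]:
    """Return depth in meters using Z = f * B / d.

    Returns None when the disparity is not positive, which usually
    means the stereo matcher found no valid match for that pixel.
    """
    if disparity_px <= 0:
        return None
    return focal_px * baseline_m / disparity_px


def disparity_to_points(disparity: List[List[float]], focal_px: float,
                        baseline_m: float, cx: float, cy: float) -> List[Point]:
    """Back-project every valid pixel of a disparity map to a 3D point.

    (cx, cy) is the principal point in pixels. Points are expressed in
    the camera frame of the left image (hypothetical convention here).
    """
    points: List[Point] = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            z = disparity_to_depth(d, focal_px, baseline_m)
            if z is None:
                continue  # skip pixels without a valid disparity
            x = (u - cx) * z / focal_px
            y = (v - cy) * z / focal_px
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    # Tiny 2x2 disparity map; the zero entry is an invalid pixel.
    demo = [[50.0, 0.0],
            [25.0, 50.0]]
    for p in disparity_to_points(demo, focal_px=500.0, baseline_m=0.1,
                                 cx=0.5, cy=0.5):
        print(p)
```

A per-pixel loop like this is the kind of work that benefits from being offloaded to the GPU: each pixel is independent, so the computation parallelizes naturally.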

New Isaac Sim Features Enable ROS Developers

The latest release of Isaac Sim includes significant support for the ROS developer community. Two of the more compelling examples are the ROS 2 Navigation stack and the MoveIt Motion Planning Framework. These examples are available today and can be found in the Isaac Sim documentation.

List of ROS Examples in Isaac Sim

- ROS April Tag

- ROS Stereo Camera

- ROS Navigation

- ROS TurtleBot3 Sample

- ROS Manipulation and Camera Sample

- ROS Services

- MoveIt Motion Planning Framework

- Native Python ROS Usage

- ROS2 Navigation

Isaac Sim Generates Synthetic Datasets for Training Perception

In addition to being a robotics simulator, Isaac Sim has a powerful set of capabilities for generating synthetic data to train and test perception models. These capabilities will become more important as roboticists incorporate more perception features into their platforms. The better a robot can perceive its environment, the more autonomous it can be, and the less human intervention it requires.

Once Isaac Sim generates synthetic datasets, they can be fed directly into NVIDIA TAO, an AI model adaptation platform, to adapt perception models for a robot’s specific working environment. The task of ensuring that a robot’s perception stack will perform in a given working environment can begin well before any real data is collected from the target surroundings.

Roboticists have long faced challenges in integrating classic robotics tasks such as navigation with AI-based perception stacks. Isaac Sim addresses this workflow challenge by serving as both a robotics simulator and a synthetic data generation tool, with streamlined integration into the TAO training platform.

More to Come at ROS World and GTC 2021

NVIDIA is gearing up for ROS World on Oct. 21-22, 2021. We are planning to release more GEMs for Jetson developers, including several popular DNNs. We will also announce features in Isaac Sim to support the ROS developer community. Be sure to stop by our virtual booth, attend our NVIDIA ROS roundtable, watch the technical presentation on Isaac Sim, and more.

NVIDIA has a great lineup of speakers, talks, and content at the upcoming GTC, scheduled for Nov. 8-11. We have a track for robotics developers, including a presentation by Brian Gerkey, CEO and cofounder of Open Robotics. Additionally, we have talks covering NVIDIA Jetson, Isaac ROS, Isaac Sim, Isaac Gym, and more.

Getting Started Today

The following resources are available for developers interested in getting started today on adding NVIDIA AI Perception to their products.

- Isaac GEMs for ROS

- Isaac Sim information

- Tutorials on Synthetic Data Generation with Isaac Sim

- Accelerating ML Training with TAO toolkit information