Learn how developers used pretrained machine learning models and the Jetson Nano 2GB to create Mariola, a robot that can mimic human actions, from arm and head movements to making faces.

They say “imitation is the sincerest form of flattery.” In a robotics project by Poland-based developer Tomasz Tomanek, imitation, or mimicry, is the whole point of his robot, Mariola.

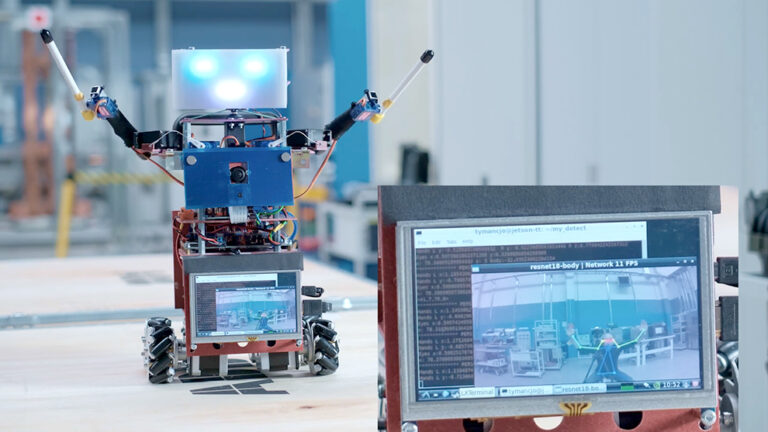

In this latest Jetson Project of the Month, Tomanek has developed a funky little robot using pretrained machine learning models to make human-robot interactions come to life. The main controller for this robot is the Jetson Nano 2GB.

PoseNet models make it possible for Mariola to recognize a person’s posture and movements, and the robot then uses the detected pose to mimic those actions. As Tomanek notes, “the use of the Jetson Nano makes it quite simple and straightforward to achieve this goal.”

An overview of Mariola is available in a YouTube video from the developer.

As the video shows, Mariola can drive on wheels, move its arms, turn its head, and make faces. Arduino controllers embedded in each section of the robot’s body enable those actions, with dedicated servo controllers driving the arms and head. Four mecanum wheels let the robot move omnidirectionally.
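To see why mecanum wheels allow omnidirectional movement, here is a minimal Python sketch of the standard inverse kinematics for a four-wheel mecanum base. It is an illustration only, not Mariola’s firmware (which runs on Arduino). The function name, the axis conventions, and the default chassis dimensions are all assumptions.

```python
def mecanum_wheel_speeds(vx, vy, omega, half_length=0.08, half_width=0.07):
    """Return (front_left, front_right, rear_left, rear_right) wheel rim speeds.

    vx: forward speed, vy: leftward strafe speed (same unit, e.g. m/s),
    omega: counterclockwise rotation rate in rad/s.
    half_length / half_width: hypothetical distances from the chassis center
    to the wheel axles, in meters.
    """
    k = half_length + half_width
    front_left = vx - vy - k * omega
    front_right = vx + vy + k * omega
    rear_left = vx + vy - k * omega
    rear_right = vx - vy + k * omega
    return front_left, front_right, rear_left, rear_right
```

With all four wheels turning at the same speed the base drives straight ahead. Reversing the front-left and rear-right wheels makes it slide sideways, which is the motion an ordinary wheeled base cannot do.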

Mariola’s face is built from NeoPixel LEDs: a set of two for each eye and a set of eight for the mouth. Daisy-chained together, they are driven by a separate Arduino Nano board that manages color changes and the appearance of blinking eyes.

According to Tomanek, one key idea of the Mariola build was to make each subsystem a separate unit and let them communicate through an internal bus. A UART/BT receiver Arduino Nano takes each command from the user, decodes which subcontroller it is meant for, and forwards it over the CAN bus.

Each subcontroller picks up its commands from the CAN bus and carries out the corresponding action for the wheels, the servos (hand and head movements), or the face (NeoPixels).
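The routing role of the receiver can be sketched in a few lines of Python. This is only a rough model of the idea, not the project’s Arduino code: the text command format, the CAN identifiers, and the `CanFrame` and `route_command` names are all hypothetical, since the article does not give the real protocol.

```python
from dataclasses import dataclass

# Hypothetical CAN identifiers, one per subcontroller (real IDs are not given).
SUBCONTROLLER_IDS = {
    "W": 0x10,  # wheels
    "S": 0x20,  # servos (arms and head)
    "F": 0x30,  # face (NeoPixels)
}


@dataclass(frozen=True)
class CanFrame:
    arbitration_id: int
    data: bytes


def route_command(line: str) -> CanFrame:
    """Decode '<target>:<v1>,<v2>,...' into a frame for the target subcontroller."""
    target, _, payload = line.strip().partition(":")
    if target not in SUBCONTROLLER_IDS:
        raise ValueError(f"unknown target {target!r}")
    values = [int(v) for v in payload.split(",") if v]
    if len(values) > 8:
        raise ValueError("a classic CAN frame carries at most 8 data bytes")
    # bytes() rejects values outside 0..255, so bad payloads fail loudly.
    return CanFrame(SUBCONTROLLER_IDS[target], bytes(values))
```

For example, `route_command("S:90,45,120")` yields a frame addressed to the servo controller with three data bytes. Each subcontroller only has to watch for its own identifier, which is what keeps the subsystems independent.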

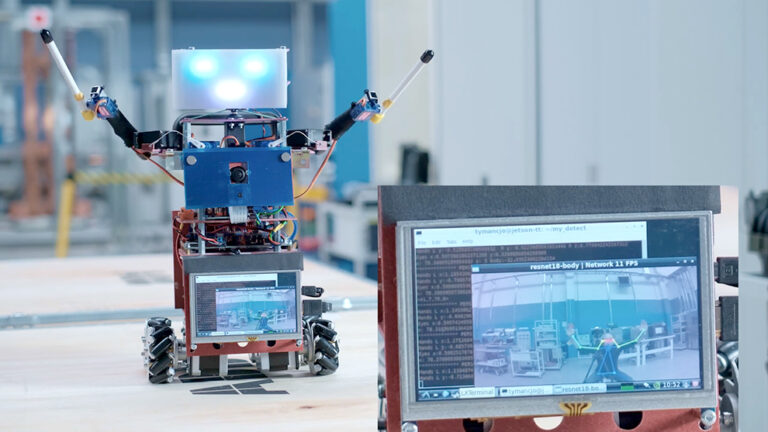

Tomanek notes in the NVIDIA Developer Forum that the Jetson Nano at the back of the robot is the brain. It runs a customized Python script with the resnet18-body model, which returns the planar coordinates of a person’s joints when it detects them. Those coordinates are passed through an inverse kinematics (IK) model to compute the servo positions, and the results are sent to the master Arduino through UART. The Arduinos do the rest of the movement.
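As a rough idea of what the coordinates-to-servo step could look like, the sketch below turns three 2D keypoints of one arm into two servo angles and packs them into a line of text for the UART link. It is a simple geometric stand-in, not Tomanek’s IK model. The function names, the angle mapping, and the message format are assumptions made for illustration.

```python
import math


def segment_angle(start, end):
    """Angle of the segment start->end in degrees, counterclockwise from +x.

    Image y grows downward, so it is flipped here.
    """
    dx = end[0] - start[0]
    dy = start[1] - end[1]
    return math.degrees(math.atan2(dy, dx))


def clamp_servo(angle):
    """Round to a whole degree and limit to a hobby servo's usual 0-180 range."""
    return max(0, min(180, round(angle)))


def arm_servo_angles(shoulder, elbow, wrist):
    """Map three (x, y) keypoints of one arm to (shoulder, elbow) servo angles."""
    upper = segment_angle(shoulder, elbow)
    fore = segment_angle(elbow, wrist)
    # Relative bend at the elbow, wrapped into [-180, 180).
    bend = (fore - upper + 180) % 360 - 180
    # Hypothetical mapping: arm hanging straight down -> shoulder 0,
    # straight elbow -> 90.
    return clamp_servo(upper + 90), clamp_servo(bend + 90)


def format_servo_message(angles):
    """Encode the angles as one ASCII line (made-up format: S:a,b + newline)."""
    return ("S:" + ",".join(str(a) for a in angles) + "\n").encode("ascii")
```

An arm hanging straight down, such as `arm_servo_angles((100, 100), (100, 150), (100, 200))`, maps to a shoulder angle of 0 and an elbow angle of 90 in this scheme. The resulting bytes could then be written to whatever serial port connects the Jetson Nano to the master Arduino.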

Currently, Mariola will detect and then mimic the movement of one person at a time. If no one is visible to the robot, or if more than one person is detected, no action occurs.
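That one-person rule is easy to express in code. The helper below is a minimal sketch with a hypothetical name; it assumes the pose model hands back a list with one entry per detected person.

```python
def select_single_pose(poses):
    """Return the lone detected pose, or None when zero or several people are in view."""
    if len(poses) != 1:
        return None
    return poses[0]
```

The main loop would call this on every frame and skip sending any servo command whenever the result is `None`.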

Why did Tomanek choose a Jetson Nano for this project? As he notes, “the potential power of the pretrained model available for the Jetson, along with the affordability [of the Jetson Nano], brought me to use the 2GB version to learn and see how it works.”

“This is a work in progress and learning project for me,” Tomanek notes. While there is no stated goal for Mariola, he sees it as an opportunity to experiment and learn what can be achieved with this technology. “The best outcome so far is that with those behaviors driven by the machine learning model, there is a certain kind of autonomy for this small robot.”

When people interact with Mariola for the first time, Tomanek says, “it always generates smiles. This is a very interesting aspect of human-robot interactions.” It’s easy to see why. Just watch Mariola in action. We dare you not to smile.

The Mariola project remains in active development and is updated regularly. As Tomanek concludes in his overview video, “We’ll see what the future will bring.”

More details about the project are available in this GitHub repository.