The future of healthcare is software-defined and AI-enabled. Around 700 FDA-cleared, AI-enabled medical devices are now on the market — more than 10x the number available in 2020. Many of the innovators behind this boom announced their latest AI-powered solutions at NVIDIA GTC, a global conference that last week attracted more than 16,000 business leaders,

A manufacturing plant near Hsinchu, Taiwan’s Silicon Valley, is among facilities worldwide boosting energy efficiency with AI-enabled digital twins. A virtual model can help streamline operations, maximizing throughput for its physical counterpart, say engineers at Wistron, a global designer and manufacturer of computers and electronics systems. In the first of several use cases, the company

Editor’s note: This post is part of Into the Omniverse, a series focused on how artists, developers and enterprises can transform their workflows using the latest advances in OpenUSD and NVIDIA Omniverse. The Universal Scene Description framework, aka OpenUSD, has emerged as a game-changer for building virtual worlds and accelerating creative workflows. It can ease

Generative AI, in the data center and in the car, is making vehicle experiences safer and more enjoyable. The latest advancements in automotive technology were on display last week at NVIDIA GTC, a global AI conference that drew tens of thousands of business leaders, developers and researchers from around the world. The event kicked off

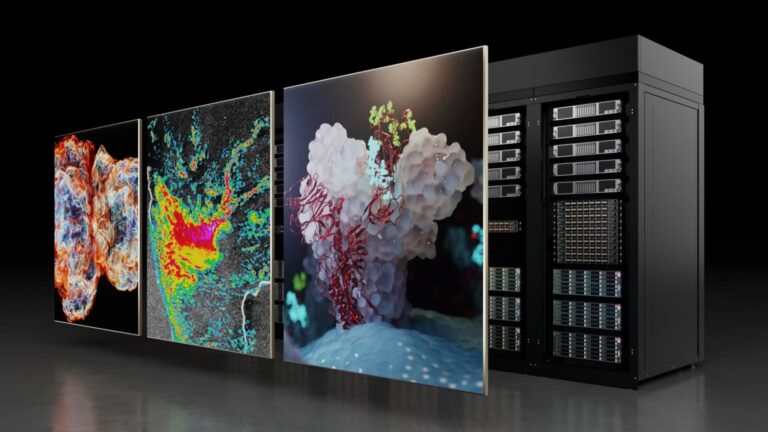

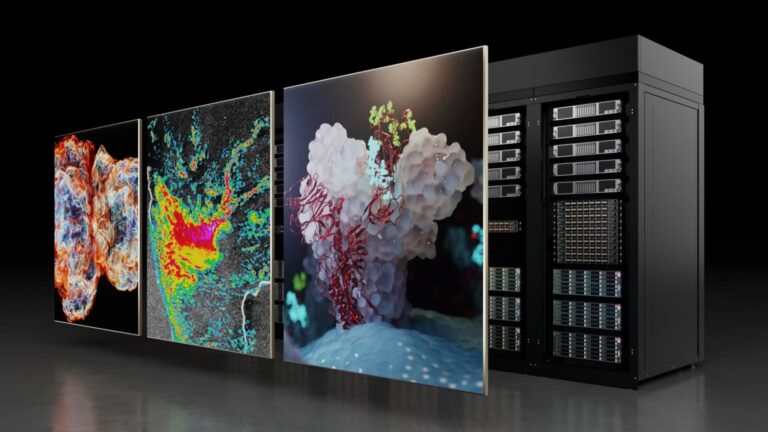

New Architecture: NVIDIA Blackwell

Learn how the NVIDIA Blackwell GPU architecture is revolutionizing AI and accelerated computing.

AI is augmenting high-performance computing (HPC) with novel approaches to data processing, simulation, and modeling. Because of the computational requirements of these new AI workloads, HPC is scaling up at a rapid pace. To enable applications to scale to multi-GPU and multi-node platforms, HPC tools and libraries must support that growth. NVIDIA provides a comprehensive ecosystem of…

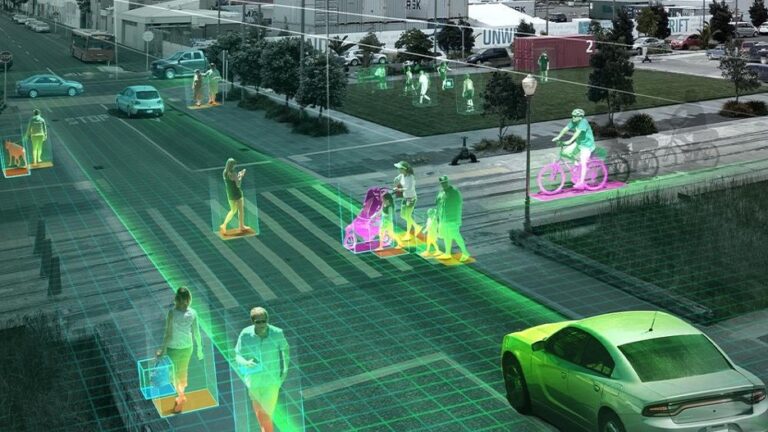

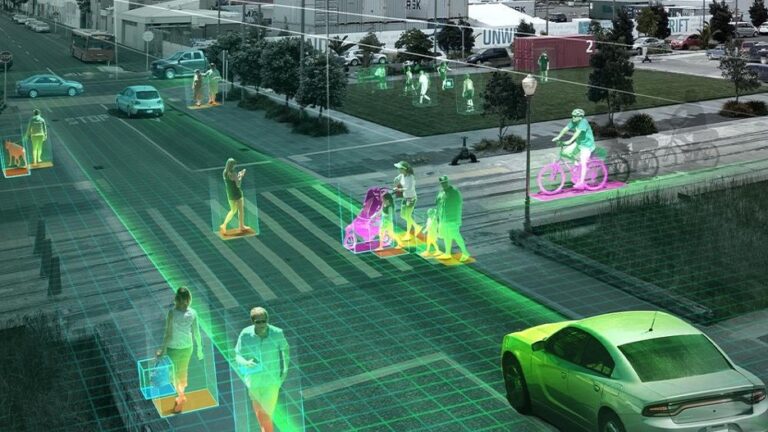

Explainer: What Is Computer Vision?

Computer vision is the field that enables devices to acquire, process, understand, and analyze digital images and videos and extract useful information.

Imagine a world where you can whisper your digital wishes into your device, and poof, it happens. That world may be coming sooner than you think. But if you’re worried about AI doing your thinking for you, you might be waiting for a while. In a fireside chat Wednesday at NVIDIA GTC, the global AI

Speech and translation AI models developed at NVIDIA are pushing the boundaries of performance and innovation. The NVIDIA Parakeet automatic speech recognition (ASR) family of models and the NVIDIA Canary multilingual, multitask ASR and translation model currently top the Hugging Face Open ASR Leaderboard. In addition, a multilingual P-Flow-based text-to-speech (TTS) model won the LIMMITS ’24…

At GDC 2024, NVIDIA announced that leading AI application developers such as Inworld AI are using NVIDIA digital human technologies to accelerate the deployment of generative AI-powered game characters alongside updated NVIDIA RTX SDKs that simplify the creation of beautiful worlds. You can incorporate the full suite of NVIDIA digital human technologies or individual microservices into…