
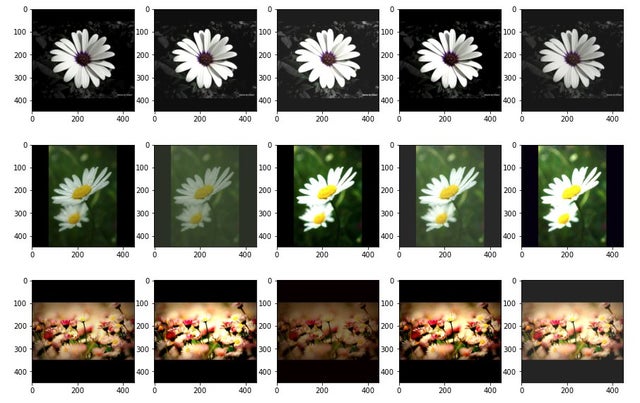

Hi there, I am a couple of weeks into learning ML and trying to build a decent image classifier. I have 60 or so labels, with only about 175-300 images each. I found that augmentation via flips and rotations suits the data and has bumped up the accuracy a bit (maybe 7-10%).

The images mostly have white backgrounds, but some do not (greys, some darker), and these are not evenly distributed. I think this was causing issues when making predictions from test photos: some incorrect labels came up frequently despite little visual similarity. I thought perhaps the background was involved, as the darker backgrounds/shadows matched my photos.

I figured adding contrast/brightness variation would nullify this behavior, so I followed this here, which adds a layer that randomizes the contrast and brightness of images in the training dataset. Snippet below:

    # defaults: contrast_range=[0.5, 1.5], brightness_delta=[-0.2, 0.2]
    contrast = np.random.uniform(
        self.contrast_range[0], self.contrast_range[1])
    brightness = np.random.uniform(
        self.brightness_delta[0], self.brightness_delta[1])
    images = tf.image.adjust_contrast(images, contrast)
    images = tf.image.adjust_brightness(images, brightness)
    images = tf.clip_by_value(images, 0, 1)
    return images

I used it with slight adjustments to contrast and brightness. I reviewed the output and it looks exactly how I wanted, so I figured it would at least help, but it appears to cause a trainwreck! Does this make sense? Where should I look to improve on this?

Also, most tutorials focus on two labels. For 60 or so labels with 200-300 images each, in projects that deal with plants/nature/geology for example, what accuracy is typically attainable?

submitted by /u/m1g33
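For reference, the arithmetic the snippet performs can be sketched in plain NumPy. This is a minimal sketch, not the layer from the post: the function name `random_contrast_brightness`, the NumPy `Generator`, and sampling one factor per image (the snippet draws a single value per call) are assumptions made for illustration. It also assumes float images of shape (batch, height, width, channels) scaled to [0, 1], which is what the final clip to [0, 1] implies. The second call shows what that clip does if the pixels are still in the 0-255 range, which may be worth ruling out.

```python
import numpy as np


def random_contrast_brightness(images, rng,
                               contrast_range=(0.5, 1.5),
                               brightness_delta=(-0.2, 0.2)):
    """Randomly adjust contrast and brightness, one draw per image.

    Assumption: `images` is a float array shaped
    (batch, height, width, channels) with values scaled to [0, 1].
    """
    images = np.asarray(images, dtype=np.float32)
    batch = images.shape[0]

    # One contrast factor and one brightness offset per image,
    # shaped so they broadcast over height, width and channels.
    contrast = rng.uniform(*contrast_range, size=(batch, 1, 1, 1))
    brightness = rng.uniform(*brightness_delta, size=(batch, 1, 1, 1))

    # Contrast: scale each pixel's distance from the per-image,
    # per-channel mean, i.e. (x - mean) * factor + mean.
    mean = images.mean(axis=(1, 2), keepdims=True)
    out = (images - mean) * contrast + mean

    # Brightness: add a constant offset to every pixel.
    out = out + brightness

    # Clip back to the valid range for [0, 1]-scaled images.
    return np.clip(out, 0.0, 1.0).astype(np.float32)


rng = np.random.default_rng(0)

# Synthetic stand-in for a batch of images already scaled to [0, 1].
batch = rng.uniform(0.0, 1.0, size=(4, 8, 8, 3))
augmented = random_contrast_brightness(batch, rng)

# Same pixels left in the 0-255 range: the clip to [0, 1] now
# pushes almost every value to exactly 0 or 1.
unscaled = batch * 255.0
saturated = random_contrast_brightness(unscaled, rng)
in_between = ((saturated > 0.0) & (saturated < 1.0)).mean()
print("fraction of unscaled pixels left between 0 and 1:", in_between)
```

With [0, 1] inputs, a ±0.2 brightness offset is a modest shift. On 0-255 inputs the same offset is negligible and the clip removes nearly all image content, so checking the pixel range that reaches the augmentation layer is a cheap first test.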


Added data augmentation through contrast and brightness with poor results, why?