Learning about new technologies can sometimes be intimidating. The NVIDIA edge computing webinar series aims to present the basics of edge computing so that all attendees can understand the key concepts associated with this technology.

NVIDIA recently hosted the webinar, Edge Computing 201: How to Build an Edge Solution, which explores the components needed to build a production edge AI deployment. During the presentation, attendees were asked various polling questions about their knowledge of edge computing, their biggest challenges, and their approaches to building solutions.

You can see a breakdown of the results from those polling questions below, along with some answers and key resources that will help you along in your edge journey.

What stage are you in on your edge computing journey?

More than half (55%) of the poll respondents said they are still in the learning phase of their edge computing journey. The first two webinars in the series—Edge Computing 101: An Introduction to the Edge and Edge Computing 201: How to Build an Edge Solution—are specifically designed for those who are just starting to learn about edge computing and edge AI.

Another resource for learning the basics of edge computing is Top Considerations for Deploying AI at the Edge. This reference guide includes all the technologies and decision points that need to be considered when building any edge computing deployment.

In addition, 25% of respondents reported that they are researching which edge computing use cases are right for them. By far, the most mature edge computing use case today is computer vision, or vision AI. Because computer vision use cases require high bandwidth and low latency, they are an ideal fit for edge computing, which processes data close to where it is generated instead of sending it to a distant data center or cloud.

Let’s Build Smarter, Safer Spaces with AI provides a deep dive into computer vision use cases and walks you through many of the technologies needed to make these use cases successful.

What is your biggest challenge when designing an edge computing solution?

Respondents were more evenly split across several different answers for this polling question. In each subsection below, you can read more about the challenges audience members reported experiencing while getting started with edge computing, along with some resources that can help.

Unsure what components are needed

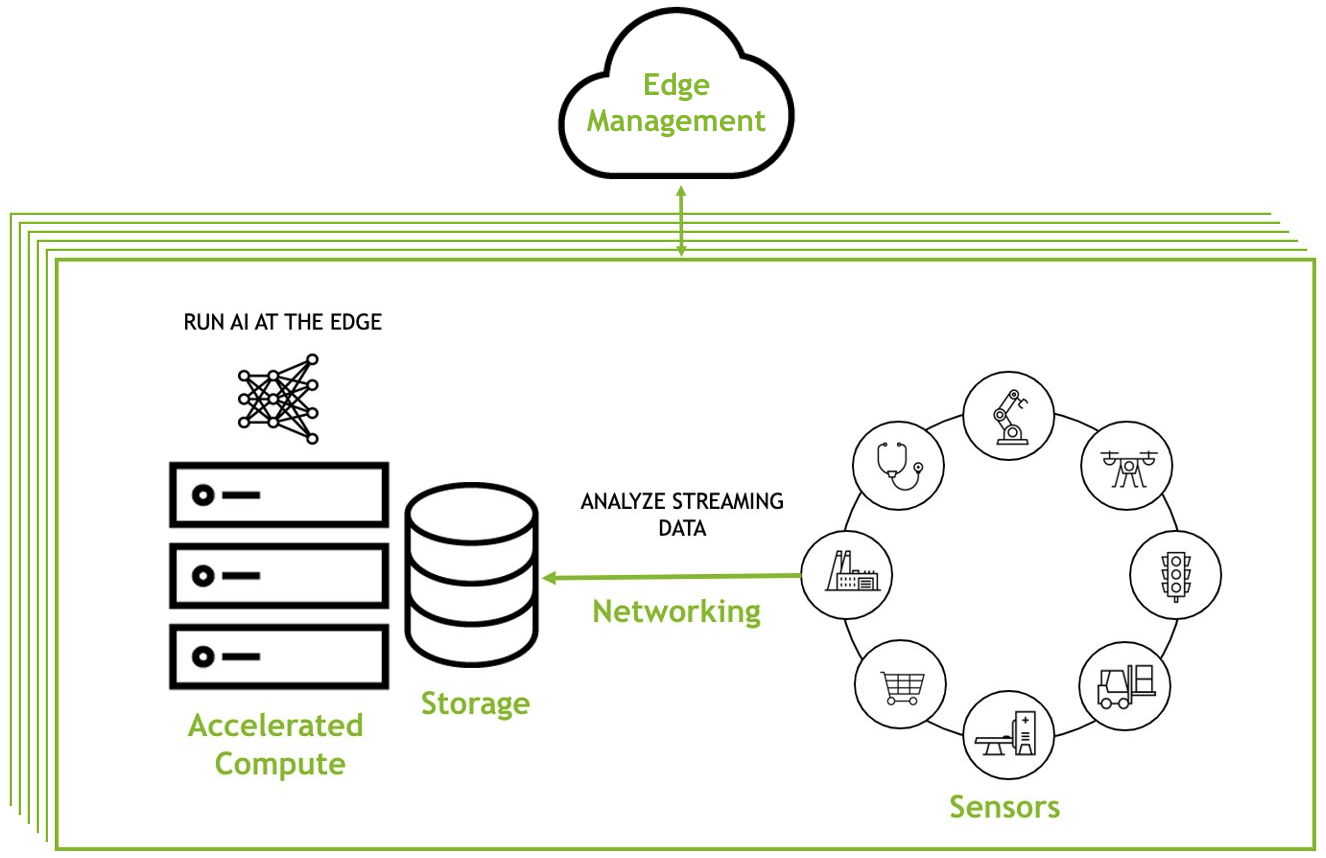

Although each use case and environment will have unique, specific requirements, almost every edge deployment will include three components:

- An application that can be deployed and managed across multiple environments

- Infrastructure that provides the right compute and networking to enable the desired use case

- Security tools that will protect intellectual property and critical data

Of course, there will be additional considerations, but focusing on these three components provides organizations with what they need to get started with AI at the edge.

Here’s what a typical edge deployment looks like:

To learn more, see Edge Computing 201: How to Build an Edge Solution. It covers all the considerations for building an edge solution, the needed components, and how to ensure those components work together to create a seamless workflow.

Implementation challenges

Understanding the steps involved in implementing an edge computing solution helps ensure that the first solution you build is comprehensive, which prevents headaches later when you maintain or scale it.

This understanding also reduces the risk of unforeseen challenges. The five main steps to implementing any edge AI solution are:

- Identify a use case or challenge to be solved

- Determine what data and application requirements exist

- Evaluate existing edge infrastructure and what pieces must be added

- Test the solution and then roll it out at scale

- Share success with other groups to promote additional use cases

To learn more about how to implement an edge computing solution, see Steps to Get Started With Edge AI, which outlines best practices and pitfalls to avoid along the way.

Scaling across multiple sites

Scaling a solution across many sites, sometimes thousands, is one of the most important yet challenging tasks associated with edge computing. Some organizations try to manually build solutions to help manage deployments, but find that the resources required to scale these solutions are not sustainable.

Other organizations try to repurpose data center tools to manage their applications at the edge, but doing so requires custom scripts and automation to adapt these tools to new environments. These customizations become difficult to support as infrastructure footprints grow and new workloads are added.

Kubernetes-based solutions can help deploy, manage, and scale applications across multiple edge locations. Several of these tools offer capabilities designed for edge environments and can come with enterprise support packages. Examples include Red Hat OpenShift, VMware Tanzu, and NVIDIA Fleet Command.
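Kubernetes-based tools generally work declaratively: you describe the desired state once, and a controller repeatedly compares it with what is actually running at each location and corrects any drift. The minimal sketch below illustrates that reconciliation idea in plain Python. It is not the API of Kubernetes, OpenShift, Tanzu, or Fleet Command; the `reconcile` function, the site names, and the dictionaries standing in for cluster state are all hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical desired state: every site should run these application versions.
DESIRED: Dict[str, str] = {"vision-app": "2.1", "telemetry-agent": "1.4"}


def reconcile(actual: Dict[str, Dict[str, str]],
              desired: Dict[str, str]) -> List[Tuple[str, str, str]]:
    """Compare each site's running apps with the desired state.

    Returns the (site, action, app) corrections and applies them to
    `actual` in place, mimicking what a controller would do on each pass.
    """
    actions: List[Tuple[str, str, str]] = []
    for site, running in actual.items():
        # Deploy or upgrade anything that is missing or at the wrong version.
        for app, version in desired.items():
            if running.get(app) != version:
                actions.append((site, "deploy", f"{app}:{version}"))
                running[app] = version
        # Remove anything that is no longer part of the desired state.
        for app in sorted(set(running) - set(desired)):
            actions.append((site, "remove", app))
            del running[app]
    return actions


# Usage: two (hypothetical) stores that have drifted from the desired state.
sites = {
    "store-001": {"vision-app": "2.0", "telemetry-agent": "1.4"},
    "store-002": {"legacy-app": "0.9"},
}
for correction in reconcile(sites, DESIRED):
    print(correction)
```

Because the loop only acts on differences, running it a second time makes no changes. That idempotence is what lets the same desired state be applied safely to thousands of sites.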

Fleet Command is purpose-built for AI. It’s turnkey, secure, and can scale to thousands of devices in minutes. Watch the Simplify AI Management at the Edge demo to learn more.

Tuning an application for edge use cases

The most important aspects of an edge computing application are flexibility and performance. Applications need to be able to operate in many different environments, and need to be portable enough that they can be easily managed across distributed locations.

In addition, organizations need applications that they can rely on. Applications need to maintain performance in sometimes extreme locations where network connectivity may be spotty, like an oil rig in the middle of the ocean.

To fulfill both of those requirements, many organizations have turned to cloud-native technology to ensure their applications have the required level of flexibility and performance. By making an application cloud-native, organizations help ensure that the application is ready for edge deployments.
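However an application is packaged, one running at a site with spotty connectivity, like the oil rig example, still has to keep working while offline. A common pattern is store-and-forward: results are queued locally and uploaded once the link returns. The minimal sketch below assumes a hypothetical `send` callable that raises `ConnectionError` when the network is down and a fixed in-memory buffer size; a production version would likely also persist the queue to disk.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForward:
    """Queue results locally while offline and flush them when possible.

    The buffer is bounded: when it is full, the oldest results are dropped
    so that a long outage cannot exhaust memory on the edge device.
    """

    def __init__(self, send: Callable[[dict], None], max_items: int = 1000) -> None:
        self._send = send  # hypothetical uplink function
        self._buffer: Deque[dict] = deque(maxlen=max_items)

    def publish(self, result: dict) -> None:
        """Queue a result, then try to deliver everything pending."""
        self._buffer.append(result)
        self.flush()

    def flush(self) -> int:
        """Send buffered results in order; stop at the first failure."""
        sent = 0
        while self._buffer:
            try:
                self._send(self._buffer[0])
            except ConnectionError:
                break  # Still offline: keep the remaining results queued.
            self._buffer.popleft()
            sent += 1
        return sent

    def pending(self) -> int:
        return len(self._buffer)
```

Dropping the oldest entries is one design choice among several; an application that cannot lose data might instead spill to local storage or downsample what it keeps.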

To learn more, see Getting Applications Ready for Cloud-Native.

Justifying the cost of a solution

Justifying the cost of any technology comes down to understanding the cost variables and proving ROI. For an edge computing solution, there are three main cost variables:

- Infrastructure costs

- Application costs

- Management costs

The ROI of a deployment varies by use case and by organization. Generally, ROI depends largely on the value that the AI application deployed at the edge delivers.
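As a rough illustration, you can treat total cost as the sum of the three cost variables above and compute a simple ROI as net value relative to that cost. The sketch below does exactly that; the dollar figures are invented placeholders, not data from the webinar, and the formula deliberately ignores factors such as depreciation and the time value of money.

```python
from dataclasses import dataclass


@dataclass
class EdgeCosts:
    """Annual cost variables for an edge deployment (placeholder units)."""
    infrastructure: float
    application: float
    management: float

    def total(self) -> float:
        return self.infrastructure + self.application + self.management


def simple_roi(annual_value: float, costs: EdgeCosts) -> float:
    """ROI = (value - cost) / cost, returned as a fraction."""
    cost = costs.total()
    if cost <= 0:
        raise ValueError("total cost must be positive")
    return (annual_value - cost) / cost


# Hypothetical figures for illustration only.
costs = EdgeCosts(infrastructure=120_000, application=60_000, management=20_000)
roi = simple_roi(annual_value=300_000, costs=costs)
print(f"Total cost: {costs.total():,.0f}, ROI: {roi:.0%}")  # Total cost: 200,000, ROI: 50%
```

The hard part in practice is estimating `annual_value`, which is why the value of the AI application itself dominates the ROI calculation.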

Learn more about the costs associated with an edge deployment with Building an Edge Strategy: Cost Factors.

Securing edge environments

Edge computing environments have unique security considerations because they cannot rely on the castle-and-moat security architecture of a data center. For instance, the physical security of data and equipment must be considered when deploying AI at the edge. Additionally, if edge devices connect back to an organization’s central network, encrypting the traffic between them is essential.
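For encrypted traffic between edge devices and a central network, a common baseline is TLS with certificate verification, optionally with client certificates on the edge side (mutual TLS). The minimal sketch below uses Python's standard `ssl` module to build a client context for an edge device. The certificate file paths are placeholders you would supply, and the assumption that the central service asks for client certificates is illustrative; many edge platforms configure this for you.

```python
import ssl
from typing import Optional


def edge_client_context(ca_file: Optional[str] = None,
                        cert_file: Optional[str] = None,
                        key_file: Optional[str] = None) -> ssl.SSLContext:
    """Build a TLS client context for edge-to-core connections.

    - Verifies the server certificate and hostname (the defaults that
      create_default_context sets for server authentication).
    - Refuses protocol versions older than TLS 1.2.
    - Optionally presents a client certificate for mutual TLS.
    """
    # ca_file: path to the CA bundle that signed the central server (assumed).
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file is not None:
        # Device identity for mutual TLS (hypothetical file paths).
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


ctx = edge_client_context()
```

To use the context, wrap a connected socket with `ctx.wrap_socket(sock, server_hostname=...)`, passing the central endpoint's hostname so that it can be checked against the server certificate.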

The best approach is to find solutions that offer layered security from cloud to edge, with multiple security protocols that help keep intellectual property and critical data protected.

To learn more about how to secure edge environments, see Edge Computing: Considerations for Security Architects.

Do you plan to deploy containerized applications at the edge?

Cloud-native technology was discussed in the Edge Computing 201 webinar as a way to ensure applications deployed at the edge are flexible and have a reliable level of performance. 54% of respondents reported that they plan on deploying containerized applications at the edge, while 38% said they were unsure.

Organizations need flexible applications at the edge because the locations they deploy to can have varying requirements. For instance, not all grocery stores are the same size. A large grocery store might have over a dozen cameras and correspondingly high power requirements, while a smaller store might have just one or two cameras and very limited power available.

Despite the differences, an organization needs to be able to deploy the same application across both of these environments with confidence that the application can easily adapt.
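One way to ship a single application to both kinds of store is to keep the code identical and derive its settings from each site's profile at startup. The minimal sketch below shows that idea; the profile fields, the per-stream power figure, and the "full" and "lite" model variants are hypothetical assumptions, not NVIDIA guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteProfile:
    """Hypothetical description of an edge location."""
    name: str
    cameras: int
    power_budget_watts: float


@dataclass(frozen=True)
class AppConfig:
    streams: int
    model_variant: str  # hypothetical larger ("full") or smaller ("lite") model
    frame_rate: int


# Assumed power needed to process one camera stream with the full model.
WATTS_PER_FULL_STREAM = 15.0


def configure(site: SiteProfile) -> AppConfig:
    """Pick settings for the same application based on site resources."""
    if site.power_budget_watts >= site.cameras * WATTS_PER_FULL_STREAM:
        return AppConfig(streams=site.cameras, model_variant="full", frame_rate=30)
    # Constrained site: use a lighter model and a lower frame rate.
    return AppConfig(streams=site.cameras, model_variant="lite", frame_rate=10)


large = configure(SiteProfile("large-store", cameras=14, power_budget_watts=400))
small = configure(SiteProfile("small-store", cameras=2, power_budget_watts=20))
print(large)
print(small)
```

Keeping the decision in one small function means the same container image can be deployed everywhere, and only the site profile differs between locations.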

Cloud-native technology provides this flexibility along with key reliability features: applications are automatically restarted if they run into issues, and workloads are migrated to another system if a system fails.
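Restarts like the ones described above are usually governed by a restart policy with exponential backoff, so a crashing workload does not restart in a tight loop and overwhelm a small edge system. The Kubernetes documentation, for example, describes a back-off delay for restarting failed containers that starts at 10 seconds, doubles each time, and is capped at five minutes. The sketch below simulates that idea under those assumed parameters; the `supervise` function and the `run_once` callable are hypothetical, and details such as resetting the back-off after a period of healthy running are not modeled.

```python
from typing import Callable, List


def supervise(run_once: Callable[[], bool], max_restarts: int,
              base: float = 10.0, cap: float = 300.0) -> List[float]:
    """Run a workload, restarting it after failures with exponential backoff.

    `run_once` returns True on success. Instead of sleeping, this simulation
    records the delay it would wait before each restart (base, 2*base,
    4*base, ... capped at `cap` seconds) so the policy is easy to inspect.
    """
    waited: List[float] = []
    for attempt in range(max_restarts + 1):
        if run_once():
            break
        if attempt < max_restarts:
            waited.append(min(cap, base * (2 ** attempt)))
    return waited


# Usage: a workload that fails three times, then starts successfully.
outcomes = iter([False, False, False, True])
print(supervise(lambda: next(outcomes), max_restarts=5))  # [10.0, 20.0, 40.0]
```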

Learn more about how cloud-native technology can be used in edge environments with The Future of Edge AI Is Cloud Native.

Have you considered how you will manage applications and systems at the edge?

When asked whether they have considered how they will manage applications and systems at the edge, 52% of respondents reported they are building their own solution and 24% are buying a solution from a partner. The remaining 24% reported they have not considered a management solution.

For AI at the edge, a management solution is a critical tool. The number of locations, and the distances between them, make managing them manually very difficult in production deployments. Even a small handful of locations becomes more tedious to manage than it needs to be whenever an application requires an update or a new security patch.

The section of this post entitled ‘Scaling across multiple sites’ (above) outlines why manual solutions are difficult to scale. They are often useful for proofs of concept (POCs) or experimental deployments, but in any production environment, a management tool will save many headaches.
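Management tools typically roll an update or security patch out in stages rather than touching every site at once, so that a bad change is caught early. The minimal sketch below shows a generic batched rollout that halts when too many sites in a batch fail. The batch size, failure threshold, and `apply_update` callable are hypothetical, and the logic is not a description of how Fleet Command or any other specific product works.

```python
from typing import Callable, Dict, List


def staged_rollout(sites: List[str],
                   apply_update: Callable[[str], bool],
                   batch_size: int = 10,
                   max_failure_rate: float = 0.2) -> Dict[str, List[str]]:
    """Update sites batch by batch, halting if a batch fails too often.

    Returns which sites were updated, which failed, and which were skipped
    because the rollout was halted.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    result: Dict[str, List[str]] = {"updated": [], "failed": [], "skipped": []}
    for start in range(0, len(sites), batch_size):
        batch = sites[start:start + batch_size]
        failures = [site for site in batch if not apply_update(site)]
        result["failed"].extend(failures)
        result["updated"].extend(s for s in batch if s not in failures)
        if len(failures) / len(batch) > max_failure_rate:
            # Too many failures: stop before the problem spreads further.
            result["skipped"].extend(sites[start + batch_size:])
            break
    return result


# Usage: 25 hypothetical stores, three of which reject the update.
sites = [f"store-{i:03d}" for i in range(1, 26)]
bad = {"store-012", "store-013", "store-014"}
report = staged_rollout(sites, lambda s: s not in bad)
print({k: len(v) for k, v in report.items()})  # {'updated': 17, 'failed': 3, 'skipped': 5}
```

Here the second batch exceeds the 20% failure threshold, so the last five stores are never touched and can be updated after the problem is fixed.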

NVIDIA Fleet Command is a managed platform for container orchestration that streamlines provisioning and deployment of systems and AI applications at the edge. It simplifies the management of distributed computing environments with the scale and resiliency of the cloud, turning every site into a secure, intelligent location.

To learn more about how Fleet Command can help manage edge deployments, watch the Simplify AI Management at the Edge demo.

Looking ahead

Edge computing is a new yet proven concept for particular use cases. Understanding the basics of this technology can help many organizations accelerate workloads to drive their bottom line.

While the Edge Computing 101 and Edge Computing 201 webinar sessions focused on designing and building edge solutions, Edge Computing 301: Maintaining and Optimizing Deployments dives into what you need for the ongoing, day-to-day management of edge deployments. Sign up to continue your edge computing learning journey.